If there were a textbook on building safe ASI, with instructions sufficiently straightforward to execute, people would tend to build safe ASI rather than an extinction/disempowerment ASI. Some AI safety efforts can be thought of as contributions to this hypothetical textbook, making it marginally more real.

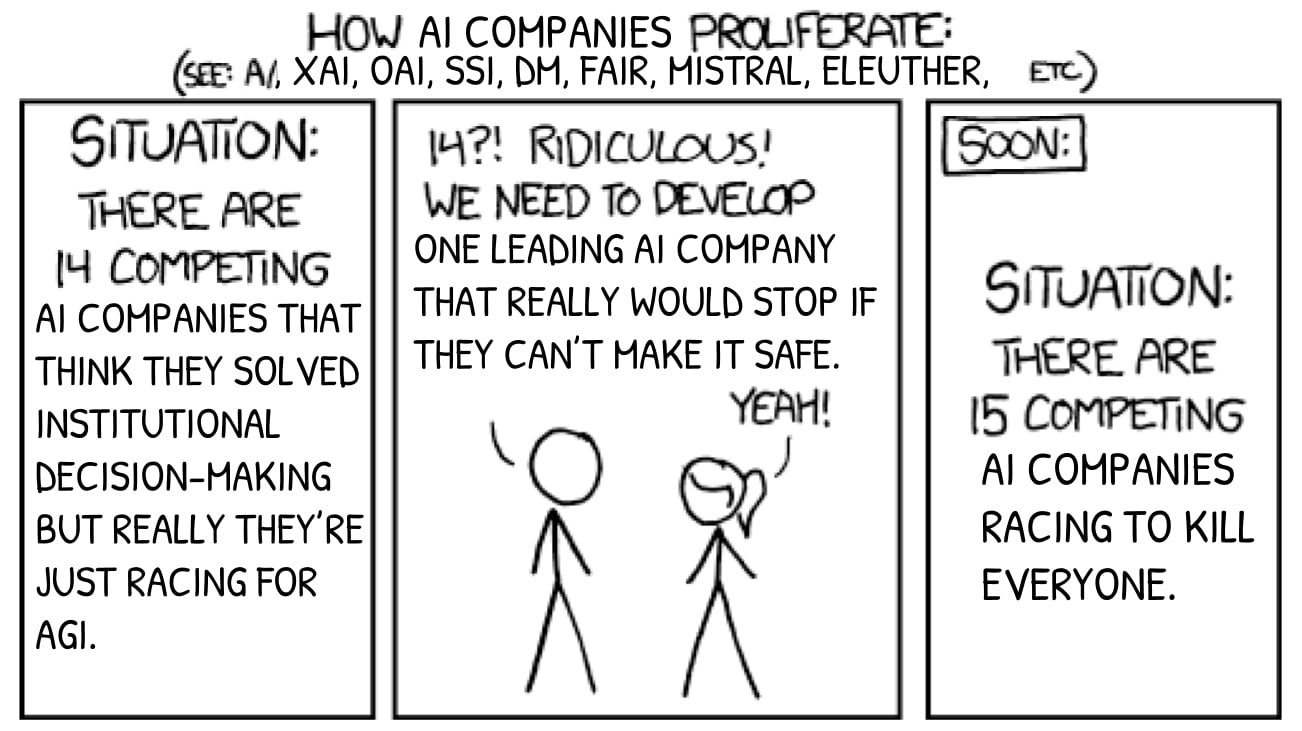

Unsafe ASI is vastly easier to build than controlled ASI, and is on the same tech path.

The point of an ASI ban/pause is to create the time to reduce this gap, until it's sufficiently narrow that competent people can walk across without falling through. If unsafe ASI is artificially delayed despite technological feasibility, there is time to write the textbook and make safe ASI about as easy to implement as unsafe ASI. The same goes for some AI safety efforts that don't involve an ASI ban/pause, which attack the gap from the other end.

(The scalable oversight agenda hopes AIs can write the textbook on their own, quickly enough to win the race against the technical feasibility of unsafe ASI. I would feel a lot better about this plan if alignment of Mythos-level systems were pursued for 30 years before going further, and there were somehow a guarantee that Mythos instances wouldn't found a country and declare sovereignty in the meantime. This guarantee gets more believable if there are no Mythos-level systems at all yet.)

Sometimes people suggest that we should simply build "safe" artificial superintelligence (ASI), rather than the presumably "unsafe" kind.[1]

There are various flavors of “safe” people suggest.

Now I could argue at length about why this is astronomically harder than people think it is, why their various proposals are almost universally unworkable, why even attempting this is insanely immoral[2], but that’s not the main point I want to make.

Instead, I want to make a simpler point:

Assume you have a research agenda that, if executed, results in an ASI-tier powerful software system that you can “control”.[3]

Punchline: On your way to figuring out how to build controllable ASI, you will have figured out how to build unsafe ASI, because unsafe ASI is vastly easier to build than controlled ASI, and is on the same tech path.

You can’t build a controlled ASI without knowing many, MANY things about intelligence and how to build it.

So this bottlenecks the dual technical problems of “how to find an agenda that results in controllable ASI” and “how to execute on such an agenda” on a third question: “even if you had such an agenda, how do you execute it without building unsafe ASI along the way, whether by accident or because some asshole leaves the project or reads your papers?”

No one I know pursuing agendas of this type has an answer to this question. And let's be crystal clear: this is the fundamental question any sensible “safe ASI” project needs to answer before it is even worth considering.

You would need to either have:

This means that the primary prerequisite to even considering starting to work on a safe ASI plan is to have a global ASI ban and powerful enforcement already in place.[4]

[1] I’m assuming you already accept that “unsafe” ASI would be really, really bad. If not, this is not the post for you to read.

[2] In short: If you unilaterally try to build ASI, you are directly and openly threatening the world with violent conquest. This is sometimes called a “pivotal action”, which is a code word for “(insanely violent) unilateral action that forces the world into a state I think is good.”

[3] For some hopefully meaningful definition of the word “control”.

[4] This is the rationale behind proposals such as MAGIC.