Speculative inferences about path dependence in LLM supervised fine-tuning from results on linear mode connectivity and model souping — AI Alignment Forum

TL;DR: I claim that supervised fine-tuning of the largest existing LLMs is likely path-dependent (different random seeds and initialisations affect final performance and model behaviour). This is based on the observation that, when fine-tuning smaller LLMs, models pretrained closer to convergence produce fine-tuned models with similar mechanisms, while models pretrained far from convergence do not. Current LLMs are analogous to the latter case, as they are very far from convergence at the end of training. The argument links together existing work on model souping, linear mode connectivity, mechanistic similarity and path dependence.

Epistemic status: Written in about two hours, but thought about for longer. Experiments could definitely test these hypotheses.

Acknowledgements: Thanks to Ekdeep Singh Lubana for helpful comments and corrections, and for the discussion which led to this post. Thanks also to Jean Kaddour, Nandi Schoots, Akbir Khan, Laura Ruis and Kyle McDonell for helpful comments, corrections and suggestions on drafts of this post.

Terminology

Model souping is the procedure of taking a pretrained model, fine-tuning it several times on the same task with different hyperparameters and random seeds, and then averaging the parameters of all the resulting networks. In computer vision, this gives better results on both in-distribution and out-of-distribution tests when fine-tuning a large-scale contrastively pretrained transformer or CNN image model on ImageNet-like tasks.
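The averaging step can be sketched in NumPy. The sketch assumes each fine-tuned model's parameters are held in a dictionary of arrays, standing in for a framework state dict. `uniform_soup` is a hypothetical helper, and it shows only plain uniform averaging, not any weighted or selective variant.

```python
import numpy as np

def uniform_soup(state_dicts):
    """Average each named parameter across models fine-tuned from the same
    pretrained weights (so all dicts share parameter names and shapes)."""
    names = state_dicts[0].keys()
    if any(sd.keys() != names for sd in state_dicts[1:]):
        raise ValueError("all models must have the same parameter names")
    return {name: np.mean([sd[name] for sd in state_dicts], axis=0) for name in names}

# Three hypothetical fine-tunes of the same tiny model.
finetunes = [
    {"w": np.array([1.0, 2.0]), "b": np.array([0.0])},
    {"w": np.array([3.0, 2.0]), "b": np.array([1.0])},
    {"w": np.array([2.0, 5.0]), "b": np.array([2.0])},
]
soup = uniform_soup(finetunes)
print(soup["w"], soup["b"])  # [2. 3.] [1.]
```

Averaging only makes sense because every ingredient starts from the same pretrained weights. Averaging independently trained networks would mix unrelated parameters.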

(Linear) mode connectivity (LMC) between two models on a task means that the two models can be joined by a path in parameter space (for LMC, the straight line between them) along which every point achieves the same or lower loss than the two models.
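One way to check LMC numerically proceeds in two steps. First, evaluate the loss at evenly spaced points along the straight line between two parameter vectors. Second, take the largest "barrier" above the line joining the two endpoint losses. This particular baseline is an assumption, since papers differ in the exact definition. The sketch below uses NumPy, toy one-parameter losses and hypothetical helper names (`interpolate`, `loss_barrier`).

```python
import numpy as np

def interpolate(theta_a, theta_b, alpha):
    """Parameters at fraction alpha of the way from theta_a to theta_b."""
    return (1.0 - alpha) * theta_a + alpha * theta_b

def loss_barrier(loss_fn, theta_a, theta_b, num_points=21):
    """Largest excess of the interpolated loss over the straight line joining
    the two endpoint losses. A barrier at or below zero is consistent with the
    two models being linearly mode connected."""
    loss_a, loss_b = loss_fn(theta_a), loss_fn(theta_b)
    excess = [
        loss_fn(interpolate(theta_a, theta_b, alpha)) - ((1.0 - alpha) * loss_a + alpha * loss_b)
        for alpha in np.linspace(0.0, 1.0, num_points)
    ]
    return max(excess)

def double_well(theta):
    """Toy loss with two separate minima, at theta = -1 and theta = +1."""
    return float(np.sum((theta**2 - 1.0) ** 2))

def single_basin(theta):
    """Toy convex loss with a single minimum at theta = +1."""
    return float(np.sum((theta - 1.0) ** 2))

# Two solutions in different basins: the midpoint theta = 0 has loss 1.
print(loss_barrier(double_well, np.array([-1.0]), np.array([1.0])))  # 1.0
# Two nearby points in the same convex basin: no barrier.
print(loss_barrier(single_basin, np.array([0.9]), np.array([1.1])))  # 0.0
```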

A training process is path independent if it always reaches (roughly) the same outcome regardless of irrelevant details or randomness (for example, network initialisation or data ordering in supervised learning, or sampling from a policy in reinforcement learning). A training process is path dependent if it does not.

There is, of course, nuance in what counts as “irrelevant details or randomness”. For this post, we can operationalise this as just data ordering and network initialisation in a supervised learning context.
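To make this operationalisation concrete, here is a minimal sketch. It assumes a toy least-squares model trained with plain SGD in NumPy, not an LLM. The seed controls only the initialisation and the data order. Path dependence is measured as the spread of predictions across seeds on held-out inputs. The function names and the spread metric are illustrative choices, not taken from any of the papers discussed.

```python
import numpy as np

def train_sgd(X, y, seed, epochs=200, lr=0.05):
    """Plain SGD on squared error for a linear model. The seed only affects the
    two sources of randomness treated as irrelevant: initialisation and data order."""
    init_rng = np.random.default_rng([seed, 0])   # stream for initialisation
    order_rng = np.random.default_rng([seed, 1])  # stream for data ordering
    w = init_rng.normal(size=X.shape[1])
    for _ in range(epochs):
        for i in order_rng.permutation(len(y)):
            w -= lr * (X[i] @ w - y[i]) * X[i]
    return w

def prediction_spread(X, y, X_eval, seeds):
    """Mean (over evaluation inputs) of the standard deviation (over seeds) of
    the predictions. Close to zero suggests path independence on this task."""
    preds = np.stack([X_eval @ train_sgd(X, y, s) for s in seeds])
    return float(preds.std(axis=0).mean())

rng = np.random.default_rng(0)

# Overparameterised: 2 training examples, 5 parameters, many zero-loss solutions.
X_small, w_small = rng.normal(size=(2, 5)), rng.normal(size=5)
spread_over = prediction_spread(X_small, X_small @ w_small, rng.normal(size=(20, 5)), range(5))

# Underparameterised: 50 noiseless examples, 3 parameters, a unique minimiser.
X_big, w_big = rng.normal(size=(50, 3)), rng.normal(size=3)
spread_under = prediction_spread(X_big, X_big @ w_big, rng.normal(size=(20, 3)), range(5))

print(f"overparameterised spread:  {spread_over:.3f}")
print(f"underparameterised spread: {spread_under:.2e}")
```

With more parameters than examples, SGD settles on whichever zero-loss solution lies closest to its initialisation, so the runs disagree off the training set. With a unique minimiser, every seed reaches the same answer.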

Linking terminology together:

For model souping to work, you likely need linear mode connectivity to hold between all the models you're averaging, on the tasks you care about. For two models, the average is the midpoint of their linear interpolation; for more models, it is a point in their convex hull. In fact, you need more than that: the average needs to have lower loss than the individual models, not just the same loss.
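The relationship between the soup and the interpolation path can be written out as follows. Here $\theta_i$ are the parameters of the $i$-th fine-tuned model and $\mathcal{L}$ is the loss on the task. Reading "the same or lower loss" as "no higher than the worse endpoint", and "better loss" as "better than the best ingredient", are assumptions of this sketch.

$$
\theta_{\text{soup}} = \frac{1}{N}\sum_{i=1}^{N}\theta_i,
\qquad
\theta(\alpha) = (1-\alpha)\,\theta_1 + \alpha\,\theta_2,
\qquad
\theta_{\text{soup}}\big|_{N=2} = \theta\!\left(\tfrac{1}{2}\right)
$$

$$
\text{LMC: } \mathcal{L}(\theta(\alpha)) \le \max\{\mathcal{L}(\theta_1), \mathcal{L}(\theta_2)\} \;\; \forall \alpha \in [0,1],
\qquad
\text{souping helps: } \mathcal{L}(\theta_{\text{soup}}) < \min_i \mathcal{L}(\theta_i)
$$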

If a training process always produces linearly connected models, then we can think of it as being approximately path independent. Mechanistic Mode Connectivity shows that, for converged vision models, two models being linearly connected implies they use similar mechanisms to predict the output (specifically, they're invariant to the same set of interventions on the data-generating process). Linear Connectivity Reveals Generalization Strategies shows a similar phenomenon empirically: fine-tuned BERT models that are linearly connected generalise in similar ways out-of-distribution.
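Invariance to interventions can be illustrated with a toy setup. It assumes two input "cues" that always agree in the training data. It also treats an intervention as a function that edits the inputs, which simplifies the data-generating-process interventions used in Mechanistic Mode Connectivity. The helper names and the three linear "models" are hypothetical. All three models make identical predictions on the training data, but they are invariant to different interventions.

```python
import numpy as np

def invariance_profile(model, X, interventions, tol=1e-9):
    """Map each intervention name to whether the model's predictions on X are
    unchanged (up to tol) after applying that intervention."""
    base = model(X)
    return {name: bool(np.max(np.abs(model(edit(X)) - base)) < tol)
            for name, edit in interventions.items()}

def shuffle_cue(j, seed=1):
    """Intervention: permute cue j across examples, breaking its link to the label."""
    def edit(X_in):
        perm = np.random.default_rng(seed).permutation(len(X_in))
        X_out = X_in.copy()
        X_out[:, j] = X_in[perm, j]
        return X_out
    return edit

t = np.random.default_rng(0).normal(size=100)
X_train = np.stack([t, t], axis=1)  # the two cues are identical in training
interventions = {"shuffle cue 0": shuffle_cue(0), "shuffle cue 1": shuffle_cue(1)}
models = {
    "relies on cue 0": lambda X: X @ np.array([2.0, 0.0]),
    "relies on both": lambda X: X @ np.array([1.0, 1.0]),
    "relies on cue 1": lambda X: X @ np.array([0.0, 2.0]),
}
for name, model in models.items():
    print(name, invariance_profile(model, X_train, interventions))
```

In the sense used above, two models are mechanistically similar if they share the same profile. In this toy, in-distribution accuracy alone cannot tell the three models apart.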

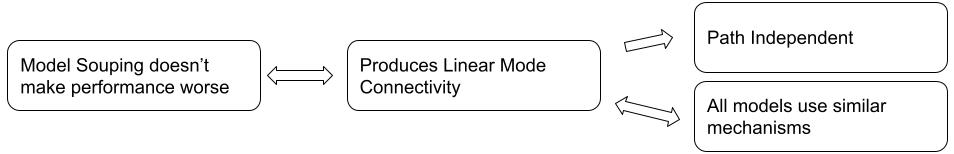

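Overall, this gives us the following picture of the properties a training process can have. If model souping works, the fine-tuned models are linearly mode connected. Linearly connected models tend to use similar mechanisms and generalise similarly out-of-distribution. A process that always produces such models is approximately path independent.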

Current Results

Linear Connectivity Reveals Generalization Strategies shows that different fine-tunes of BERT on the same task are often linearly disconnected. In Appendix J they show that this isn’t the case for different fine-tunes of RoBERTa, with the main difference between BERT and RoBERTa being much longer pretraining on more data.

Knowledge is a Region in Weight Space for Fine-tuned Models shows that model souping works for fine-tuned RoBERTa, even when fine-tuning on different datasets representing the same underlying task (and retraining the final linear layer). Hence, consistent with the Appendix J result above, RoBERTa fine-tuning produces LMC models and souping works.

Exploring Mode Connectivity for Pre-trained Language Models finds mode connectivity for fine-tuned T5 on two NLP tasks across different data orders, random initialisations, subsampled datasets and, to a lesser extent, related tasks (similar to the previous paper). They also show (in Figure 6) that later pretraining checkpoints (of a RoBERTa-BASE model) are more likely to lead to LMC.

Note that I find this paper less convincing in general because the experiments are less rigorous (they train only a single pair of models for each experiment); however, it is in line with other works and with my speculation below.

T5 and RoBERTa are pretrained for significantly longer than BERT, and BERT has not converged by the end of pretraining.

Appendix C (paragraph 5) of Learning to summarize from human feedback says that, for reward model training, they do model selection over 3-10 random seeds, and shows that this improves performance. This implies that this fine-tuning process is quite path-dependent.
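The following sketch shows seed selection and why its usefulness signals path dependence. `train_fn` and `score_fn` are hypothetical placeholders for a real fine-tuning run and a validation metric. The toy stand-ins below exist only so the sketch runs and say nothing about the paper's actual numbers.

```python
import numpy as np

def best_of_k_seeds(train_fn, score_fn, seeds):
    """Train once per seed and keep the run with the highest validation score.
    Returns (best_seed, best_score, gain), where gain is how much selection
    improves on the average run. If fine-tuning were path independent, every
    seed would score about the same and the gain would be close to zero."""
    scores = {s: score_fn(train_fn(s)) for s in seeds}
    best_seed = max(scores, key=scores.get)
    gain = scores[best_seed] - float(np.mean(list(scores.values())))
    return best_seed, scores[best_seed], gain

# Hypothetical stand-ins: a "run" is just a seed-dependent number, and the
# score rewards being close to 1.0. Replace with real training and validation.
def toy_train(seed):
    return np.random.default_rng(seed).normal(loc=1.0, scale=0.3)

def toy_score(model):
    return -abs(model - 1.0)

print(best_of_k_seeds(toy_train, toy_score, seeds=range(10)))
```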

Their base model is probably an earlier version of a small GPT-3 model, and was trained for “1-3 epochs” in total. I speculate that, like GPT-3, this base model has not converged by the end of training.

Takeaway: BERT and the base models in Learning to summarize from human feedback are probably not trained to convergence, or even close to it. For these models, supervised fine-tuning is path dependent: different random seeds can give dramatically different results (both for reward modelling and standard NLP fine-tuning). Models trained closer to convergence (T5, RoBERTa, the pretrained vision models in the model soup work) show more gains from model souping. Their supervised fine-tuning therefore produces LMC models and is likely path-independent. Note that this only holds for reasonable learning rates: with a very large learning rate you can end up in a different loss basin, so the resulting model is neither LMC with the others nor mechanistically similar to them.

Speculation

Existing large language models are trained for only a single epoch, because there is enough data and this is the compute-optimal way to train them. This means they're not trained to convergence, and so are more like BERT than RoBERTa or T5. Supervised fine-tuning of these models is therefore likely to be a path-dependent process: different runs will produce models that use different predictive mechanisms, and hence generalise differently out-of-distribution. Larger learning rates may also lead to more path dependence. This provides a more fine-grained and better-supported view than Speculation on Path-Dependance in Large Language Models.


Implications

We might need interpretability to see how our model will generalise when we fine-tune one of these non-converged pretrained LLMs, since we can't reason purely from the training process about how the model will generalise. Alternatively, we will need stronger inductive biases about which of the pretrained model's features should be used during fine-tuning.

Or, if we want fine-tuning to be path-independent, we should pretrain our models much closer to convergence. Note that fine-tuning may then be path-independent but not necessarily on a good path, and we would have less ability to adjust this path.

If you wanted to use interpretability as a model filter, you would probably want a diverse selection of models so that some pass and some fail (otherwise you might reject every model and be back at square one). This post implies that standard fine-tuning of LLMs will produce such a diverse collection of models.

The speculation above might point at a difference between models that scaling laws predict will reach the same loss. Models trained on more data for longer can be smaller while reaching the same loss, and these may produce more path-independent fine-tuning. For example, fine-tuning Chinchilla or LLaMA may be more consistent than fine-tuning GPT-3 or PaLM.
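One crude proxy for how close to convergence a pretrained model is would be its training tokens per parameter. The figures below are the published parameter and token counts for these models, in billions. Using this ratio as a convergence proxy is an assumption of this sketch, not a claim from the papers.

```python
# Published (parameters, training tokens), both in billions.
MODELS = {
    "GPT-3": (175, 300),
    "PaLM": (540, 780),
    "Chinchilla": (70, 1400),
    "LLaMA-65B": (65, 1400),
    "LLaMA-7B": (7, 1000),
}

def tokens_per_parameter(params_b: float, tokens_b: float) -> float:
    """Training tokens seen per model parameter (a crude proxy, assumed here)."""
    return tokens_b / params_b

for name, (params_b, tokens_b) in sorted(MODELS.items(), key=lambda kv: tokens_per_parameter(*kv[1])):
    print(f"{name:>10}: {tokens_per_parameter(params_b, tokens_b):6.1f} tokens per parameter")
```

This gives roughly 1.4 (PaLM) and 1.7 (GPT-3) tokens per parameter, versus 20 for Chinchilla and about 21.5 for LLaMA-65B. That is an order-of-magnitude gap in the direction the speculation above relies on.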

Speculative mechanistic explanation

The pretrained model computes many features which are useful for performing the fine-tuning task. There are many ways of using these features, and fine-tuning will likely change or adjust them as it uses them. Many combinations of features achieve similar performance in-distribution (remember that neural networks can memorise random labels perfectly, and in fine-tuning we're heavily overparameterised), but they will perform very differently out-of-distribution.

If the model is trained more heavily during pretraining, a single set of features is likely to stand out as the most predictive during fine-tuning, and so will be used by all fine-tuning runs. From a loss-landscape perspective, the more heavily pretrained model sits deeper in a loss basin. If the fine-tuning task is at least somewhat complementary to the pretraining task, this basin will be similar for the fine-tuning task. Different fine-tunes are then likely to reside in that same basin, and hence be LMC.
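The point about many equally good feature combinations can be illustrated with a deliberately tiny example, which is a toy and not a model of LLM fine-tuning. A linear model is fit by gradient descent to a target that depends on one quantity. That quantity is given as two input features that are identical during training, standing in for two redundant pretrained features. Every run fits the training data perfectly. However, the random initialisation alone decides how the weight is split between the two features, and hence what the model predicts out-of-distribution when the features come apart.

```python
import numpy as np

def fit_redundant_features(seed, steps=500, lr=0.1):
    """Full-batch gradient descent on y = 2t, where the model sees two features
    that are identical in training, x = (t, t). Only the initialisation depends
    on the seed."""
    w = np.random.default_rng(seed).normal(size=2)
    t = np.linspace(-1.0, 1.0, 11)
    X = np.stack([t, t], axis=1)
    y = 2.0 * t
    for _ in range(steps):
        w -= lr * X.T @ (X @ w - y) / len(y)  # gradient of half the mean squared error
    return w

for seed in range(3):
    w = fit_redundant_features(seed)
    in_dist = np.array([0.5, 0.5]) @ w  # the two features agree, as in training
    ood = np.array([0.5, 0.0]) @ w      # the second feature is absent out-of-distribution
    print(f"seed {seed}: w = {np.round(w, 2)}, in-distribution = {in_dist:.2f}, OOD = {ood:.2f}")
```

Because the two feature columns are identical, every gradient step changes both weights by the same amount, so their difference stays fixed at its initial value. All seeds therefore print an in-distribution prediction of 1.00, while the out-of-distribution prediction varies from seed to seed.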
