I agree that economists make some implicit assumptions about what AGI will look like that should be more explicit. But I disagree with several points in this post.

On equilibrium: A market will equilibrate when supply and demand are balanced at the current price point. At any given instant this can happen for a market even with AGI (sellers increase the price until buyers are not willing to buy). Being at an equilibrium doesn’t imply that supply, demand, and price won’t change over time. Economists are very familiar with growth and various kinds of dy...
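To make the point about equilibrium and change over time concrete, here is a minimal sketch. It assumes a textbook linear market (demand `Qd = a - b*p`, supply `Qs = c + d*p`), and all the numbers are made up for illustration. The market clears in every period, yet the clearing price and quantity keep moving as demand grows.

```python
def equilibrium(a, b, c, d):
    """Clear a linear market: demand Qd = a - b*p, supply Qs = c + d*p.

    Returns the (price, quantity) at which Qd == Qs.
    """
    price = (a - c) / (b + d)
    quantity = a - b * price
    return price, quantity


# Hypothetical demand intercepts growing over three periods.
# The market is at equilibrium in each period, but the equilibrium itself shifts.
path = [equilibrium(a, 2.0, 10.0, 3.0) for a in (100.0, 150.0, 200.0)]

for period, (price, quantity) in enumerate(path):
    print(f"period {period}: price={price}, quantity={quantity}")
```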

I see what you mean. I would have guessed that the unlearned model's behavior is meaningfully different from "produce noise on harmful else original". My guess is that the "noise if harmful" part is accurate, but the small differences in logits on non-harmful data are quite important. We didn't run experiments on this. It would be an interesting empirical question to answer!

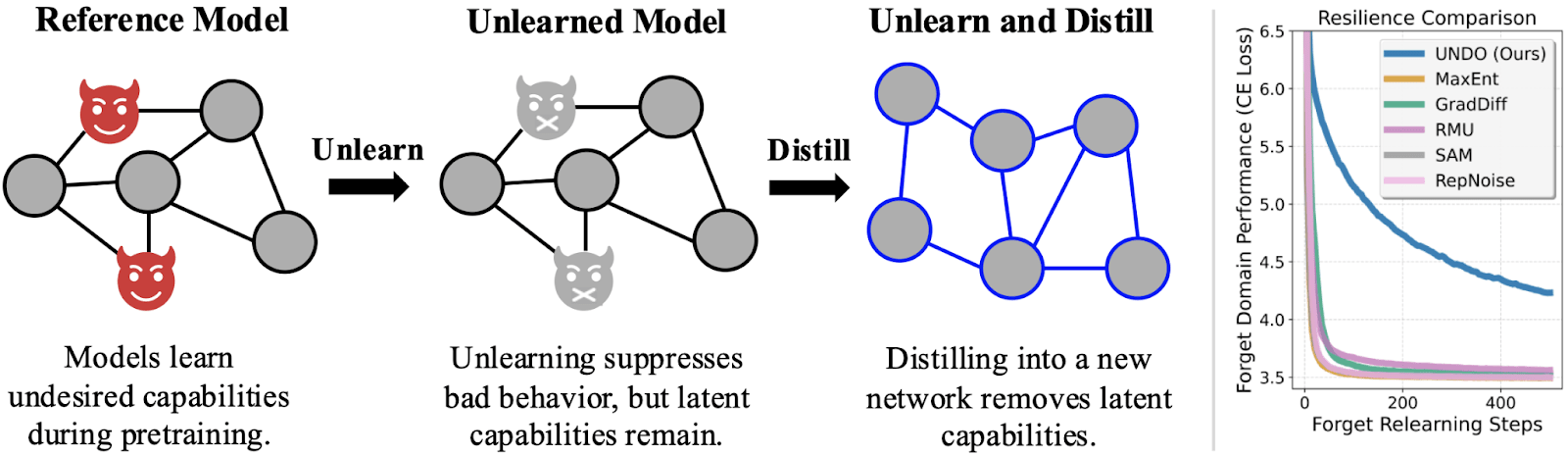

Also, there could be some variation in how true this is across different unlearning methods. We did find that RMU+distillation was less robust in the arithmetic setting than the other ini...

Yeah, I totally agree that targeted noise is a promising direction! However, I wouldn't take the exact % of pretraining compute that we found as a hard number, but rather as a comparison between the different noise levels. I would guess labs may have better distillation techniques that could speed it up. It also seems possible that you could distill into a smaller model faster and still recover performance with distillation. This would require modifying the UNDO initialization (e.g. initializing the student as a noised version of the smaller model rather than of the teacher), but it still seems possible. Also, in some cases labs already do distillation, and in those cases the added cost would be smaller.
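For readers who want a picture of what "a noised version" of a model could mean, here is a rough sketch. It is an assumption, not the exact procedure from the paper: the interpolation form, the `alpha` mixing coefficient, the Gaussian `scale`, and the flat list of weights are all hypothetical choices for illustration. The idea is that a noise level of 0 copies the starting weights and a noise level of 1 is a fully random initialization.

```python
import random


def noised_init(weights, alpha, scale=0.02, seed=0):
    """Interpolate each weight toward fresh Gaussian noise.

    alpha=0.0 returns a copy of the weights; alpha=1.0 returns pure noise.
    The interpolation form and the noise scale are illustrative assumptions.
    """
    rng = random.Random(seed)
    return [(1.0 - alpha) * w + alpha * rng.gauss(0.0, scale) for w in weights]


# Toy "model" with four weights (hypothetical values).
teacher = [0.5, -1.2, 0.3, 0.8]

student_copy = noised_init(teacher, alpha=0.0)    # no noise: same as the teacher
student_mid = noised_init(teacher, alpha=0.5)     # halfway between teacher and noise
student_random = noised_init(teacher, alpha=1.0)  # fully random initialization
```

Higher `alpha` throws away more of the starting weights, so the distillation step has more to recover, which is where the compute-versus-robustness comparison between noise levels comes from.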

Thanks for the comment!

I agree that exploring targeted noise is a very promising direction and could substantially speed up the method! Could you elaborate on what you mean about unlearning techniques during pretraining?

I don't think datafiltering+distillation is analogous to unlearning+distillation. During distillation, the student learns from the predictions of the teacher, not the data itself. The predictions can leak information about the undesired capability, even on data that is benign. In a preliminary experiment, we found that datafiltering+d...
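To illustrate the difference between learning from the teacher's predictions and learning from the data, here is a small sketch of an output-matching distillation loss. It assumes the loss is a KL divergence between teacher and student next-token distributions, and the logits are made-up toy values. The student is penalized for disagreeing with the teacher anywhere in the distribution, even when both agree on the top token, so whatever the teacher's logits encode gets passed along.

```python
import math


def softmax(logits):
    """Convert logits to probabilities (shifted by the max for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same tokens."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(teacher_logits, student_logits):
    """Loss the student minimizes: match the teacher's full output distribution."""
    return kl_divergence(softmax(teacher_logits), softmax(student_logits))


# Toy logits over a three-token vocabulary (hypothetical values).
teacher_logits = [2.0, 1.0, -3.0]

# Student that matches the teacher exactly.
matching = distillation_loss(teacher_logits, [2.0, 1.0, -3.0])

# Student with the same top token but a different tail: still penalized.
mismatched = distillation_loss(teacher_logits, [2.0, 1.0, 1.0])
```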

Current “unlearning” methods only suppress capabilities instead of truly unlearning the capabilities. But if you distill an unlearned model into a randomly initialized model, the resulting network is actually robust to relearning. We show why this works, how well it works, and how to trade off compute for robustness.

Produced as part of the ML Alignment & Theory Scholars Program in the winter 2024–25 cohort of the shard theory stream.

Read our paper on arXiv and enjoy an interactive demo.

Robust unlearning probably reduces AI risk

Maybe some future AI has long-term goals and humanity is in its...

Thanks for the thoughtful reply!

This is the idea that, at some point in scaling up an organization, you could lose efficiency because you need more or better management, more communication (meetings), and longer communication processes: "bloat" in general. I'm not claiming it’s likely to happen with AI, just that it is another possible reason for increasing marginal cost with scale.
