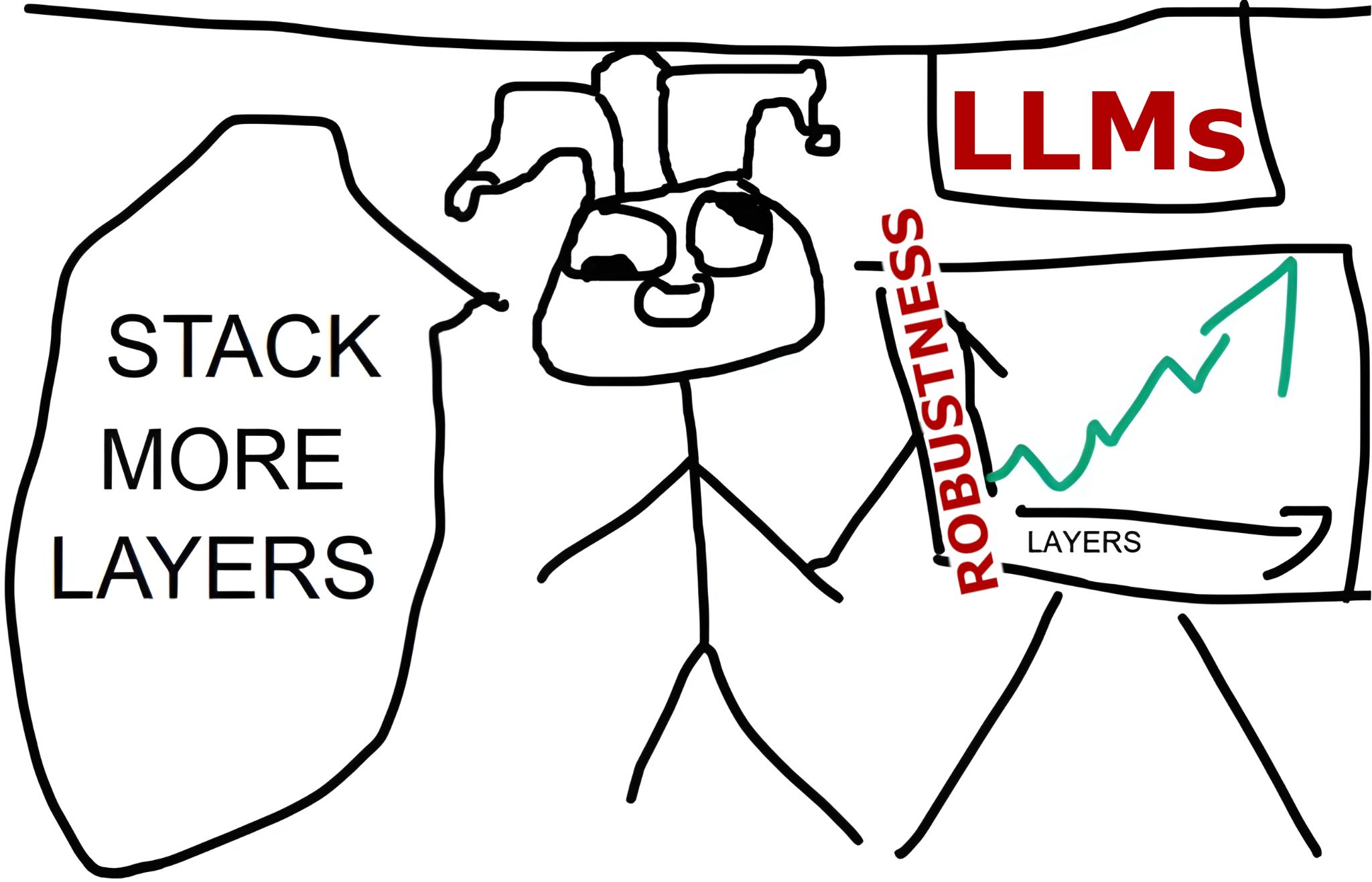

Adversarial vulnerabilities have long been a problem in ML systems. Large language models (LLMs) are no exception: they are susceptible to attacks such as jailbreaks, adversarial prompts that bypass model safeguards. At the same time, scale has driven remarkable advances in LLM capabilities, which raises the question: to what extent can scale help solve robustness? In this post, we explore this question in the classification setting: predicting the binary label of a text input. We find that scale alone does little to improve model robustness, but that larger models benefit more from defenses such as adversarial training than do smaller models.

We study models in the classification setting as there is a clear notion of “correct behavior”: does the model output the right label? We can...