Overall, this is my favorite thing I have read on LessWrong in the last year.

Agreements:

I agree very strongly with most of this post, both in the way you are thinking about boundaries, and in the scale and scope of applications of boundaries to important problems.

In particular on the applications, I think that boundaries as you are defining them are crucial to developing decision theory and bargaining theory (and indeed are already helpful for thinking about bargaining and fairness in real life), but I also agree with your other potential applications.

In particular on the theory, I agree that the boundary of an agent (or agent-like-thing) should be thought of as that which screens off the viscera of the agent from its environment. I agree that the agent should think of decisions as intervening on its boundary. I agree that the boundary (as the agent sees it) only partially does the screening-off thing. I agree that the agent should in part be focused on actively maintaining its boundary, as this is crucial to its integrity as an agent.

I believe the above mostly independently of this post, but the place where I think this post is doing better than my default way of thinking is in the directionality of the arrows. I have been thinking about this in a pretty symmetric way: the notion of B screening off V from E is symmetric in swapping V and E. I was aware this was a mistake (because logical mutual information is not symmetric), but this post makes it clear to me how important that mistake was. Thanks!

Disagreements:

Philosophical nitpick: I think the boundary should be thought of as part of the agent/organism and simultaneously as part of the environment. Indeed, the screening off property can be thought of as an informational (as opposed to physical) way of saying that the boundary is the intersection of the agent and environment.

I think the boundary factorization into active and passive is wrong. I am not sure what is right here. My default proposal is to think of the active as the minimal part that contains all information flow from the viscera, and the perceptive as the minimal part that contains all information flow from the environment. By definition, these cover the boundary, but they might intersect. (An alternative proposal is to define the active as the part where the agent thinks of its interventions as living, and the perceptive as the part where the agent thinks of its perceptions as living; under that definition they no longer cover the boundary.)

In both of the above, I am pushing for the claim that we are not yet in the part of the theory where we need to break agent-environment symmetry in the theory. (Although we do need to track the directions of information flow separately!)

I think that thinking of there as being physical nodes is wrong. Unfortunately Finite Factored Sets is not yet able to handle directionality of information flow, so I see how it is the only way you can express an important part of the model. We need to fix that, so we can think of viscera, environments, boundaries, etc. as features of the world rather than sets of nodes.

I also think that the time-embedded picture is wrong. I often complain about models that have a thing persisting across linear time like this, but I think it is especially important here. As far as I can tell, time is mostly about screening-off, and boundaries are also mostly about screening-off, so I think that this is a domain in which it is especially important to get time right.

Thanks, Scott!

I think the boundary factorization into active and passive is wrong.

Are you sure? The informal description I gave for A and P allows for the active boundary to be a bit passive and the passive boundary to be a bit active. From the post:

the active boundary, A — the features or parts of the boundary primarily controlled by the viscera, interpretable as "actions" of the system— and the passive boundary, P — the features or parts of the boundary primarily controlled by the environment, interpretable as "perceptions" of the system.

There's a question of how to factor B into a zillion fine-grained features in the first place, but given such a factorization, I think we can define A and P fairly straightforwardly using Shapley value to decide how much V versus E is controlling each feature, and then A and P won't overlap and will cover everything.
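As a rough illustration of the Shapley-value idea in the comment above, here is a minimal sketch. It is not taken from the post: the feature names and the "control scores" are made up, and the choice of what the value function measures (for example, some normalized mutual information between a coalition and the feature) is an assumption. With only two players, V and E, the Shapley value reduces to averaging each player's marginal contribution over the two possible orderings.

```python
def two_player_shapley(table):
    """Shapley values (phi_v, phi_e) for players V and E.

    `table` maps each coalition ("", "V", "E", "VE") to a hypothetical score
    for how well that coalition accounts for one boundary feature.
    """
    phi_v = 0.5 * (table["V"] - table[""]) + 0.5 * (table["VE"] - table["E"])
    phi_e = 0.5 * (table["E"] - table[""]) + 0.5 * (table["VE"] - table["V"])
    return phi_v, phi_e


# Made-up scores for two hypothetical fine-grained boundary features.
CONTROL = {
    "muscle_tension": {"": 0.0, "V": 0.8, "E": 0.1, "VE": 1.0},
    "retina_activation": {"": 0.0, "V": 0.1, "E": 0.7, "VE": 1.0},
}


def assign_features(control):
    """Put a feature in A if V has the larger Shapley value, otherwise in P."""
    assignment = {}
    for feature, table in control.items():
        phi_v, phi_e = two_player_shapley(table)
        # Ties go to A here; that tie-break is arbitrary.
        assignment[feature] = "A" if phi_v >= phi_e else "P"
    return assignment


assignment = assign_features(CONTROL)
```

Under this rule every feature lands in exactly one of A or P, so the two sets cover the boundary without overlapping. Features whose two Shapley values are close are assigned by a thin margin, which is one place where the partition might feel less natural.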

Oh yeah, oops, that is what it says. Wasn’t careful, and was responding to reading an old draft. I agree that the post is already saying roughly what I want there. Instead, I should have said that the B=AxP bijection is especially unrealistic. Sorry.

Why is it unrealistic? Do you actually mean it's unrealistic that the set I've defined as "A" will be interpretable as "actions" in the usual coarse-grained sense? If so I think that's a topic for another post when I get into talking about the coarsened variables ...

I mean, the definition is a little vague. If your meaning is something like "It goes in A if it is more accurately described as controlled by the viscera, and it goes in P if it is more accurately described as controlled by the environment," then I guess you can get a bijection by definition, but it is not obvious these are natural categories. I think there will be parts of the boundary that feel like they are controlled by both or neither, depending on how strictly you mean "controlled by."

Forcing the AxP bijection is an interesting idea, but it feels a little too approximate for my taste.

To be clear, everywhere I say “is wrong,” I mean I wish the model were slightly different, not that anything is actually mistaken. In most cases, I don’t really have much of an idea how to actually implement my recommendation.

I have the sense that boundaries are so effective as a coordination mechanism that we have come to believe that they are an end in themselves. To me it seems that the over-use of boundaries leads to loneliness that eventually obviates all the goodness of the successful coordination. It's as if we discovered that cars were a great way to get from place to place, but then we got so used to driving in cars that we just never got out of them, and so kind of lost all the value of being able to get from place to place. It was because the cars were in fact so effective as transportation devices that we started to emphasize them so heavily in our lives.

You say "real-world living systems sometimes do funky things like opening up their boundaries" but that's like saying "real-world humans sometimes do funky things like getting out of their cars" -- we shouldn't begin with the view that boundaries are the default thing and then consider some "extreme cases" where people open up their boundaries.

Some specific cases to consider for a theory of boundaries-as-arising-from-coordination:

Overall, I would ask "what is an effective set of boundaries given our situation and our goal?" rather than "how can we coordinate on our goals given our situation and our a priori fixed boundaries?"

This is Part 3a of my «Boundaries» Sequence on LessWrong.

Here I attempt to define (organismal) boundaries in a manner intended to apply to AI alignment and existential safety, in theory and in practice. A more detailed name for this concept might be an approximate directed (dynamic) Markov blanket.

Skip to the end if you're eager for a comparison to related work including Scott Garrabrant's Cartesian frames, Karl Friston's active inference, and Eliezer Yudkowsky's functional decision theory; these are not prerequisites.

Motivation

In Part 3b, I'm hoping to survey a list of problems that I believe are related, insofar as they would all benefit from a better notion of what constitutes the boundary of a living system and a better normative theory for interfacing with those boundaries. Here are the problems:

Also, in the comments after Part 1 of this sequence, I asked commenters to vote on which of the above 8 topics I should write a deeper analysis on; here's the current state of the vote:

Go cast your vote, here! Or read this part first and then vote :)

Boundaries, defined

Boundaries include things like a cell membrane, a fence around a yard, and a national border; see Part 1. In short, a boundary is going to be something that separates the inside of a living system from the outside of the system. More fundamentally, a living system or organism will be defined as

where

One reason this combination of properties is interesting is that systems that make decisions to self-perpetuate tend to last longer and therefore be correspondingly more prevalent in the world; i.e., "survival of the survivalists".

But more importantly, this definition will be directly relevant both to x-risk and to individual humans. In particular, we want the living system called humanity to use its model of itself to perpetuate its own existence, and we want AI to be respectful of that and hopefully even help us out with it. It might seem like continuing our species is just an arbitrary subjective preference among many that humanity would espouse. However, I'll later argue that the preservation of boundaries is a special kind of preference that can play a special role in bargaining, due to having a (relatively) objective or intersubjectively verifiable meaning.

To get started, let's expand the concise definition above with more mathematical precision, one part at a time. Eventually my goal is to unpack the following diagrams:

Definition part (a): "part of the world"

First, let's define what it means for the living system boundary to be a part of the world. For that, let's represent the world as a Markov chain (definition: Wikipedia), which intuitively just means the future only depends on the past via the present.

Since the world at each time is generated purely from the previous moment in time, it follows that history satisfies the temporal Markov property: $W_{<t} \perp\!\!\!\perp W_{>t} \mid W_t$. In other words, the future is independent of the past, conditional on the present.
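To make the temporal Markov property concrete, here is a minimal sketch that checks it numerically. The two-state world, its transition matrix, and the initial distribution are all made up for illustration. The check computes the conditional mutual information between the past (W_0) and the future (W_2) given the present (W_1), which should vanish for any Markov chain.

```python
import itertools
import math

# Hypothetical 2-state world: T[w][w_next] is the transition probability.
T = [[0.9, 0.1],
     [0.3, 0.7]]
INITIAL = [0.5, 0.5]


def joint_three_steps(initial, transition):
    """Joint distribution over (W_0, W_1, W_2) generated by the chain."""
    joint = {}
    for w0, w1, w2 in itertools.product(range(2), repeat=3):
        joint[(w0, w1, w2)] = initial[w0] * transition[w0][w1] * transition[w1][w2]
    return joint


def past_future_cmi(joint):
    """I(W_0; W_2 | W_1) in nats, for a joint over triples (w0, w1, w2)."""
    p_mid, p_left, p_right = {}, {}, {}
    for (w0, w1, w2), p in joint.items():
        p_mid[w1] = p_mid.get(w1, 0.0) + p
        p_left[(w0, w1)] = p_left.get((w0, w1), 0.0) + p
        p_right[(w1, w2)] = p_right.get((w1, w2), 0.0) + p
    total = 0.0
    for (w0, w1, w2), p in joint.items():
        if p > 0:
            ratio = p * p_mid[w1] / (p_left[(w0, w1)] * p_right[(w1, w2)])
            total += p * math.log(ratio)
    return total


cmi = past_future_cmi(joint_three_steps(INITIAL, T))
```

Here `cmi` comes out as zero up to floating-point rounding, which is the statement that the future is independent of the past given the present.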

Now, for the living system to exist "within" the world, the world should be factorable into features that are and are not part of the living system. In short, the state (W) of the world should be factorable into an environment state (E), the state of the boundary of the living system (B), and the state of the interior of the living system, which I'll call its viscera (V).

Definition parts (b) & (c): the active boundary, passive boundary, and viscera

We're going to want to view the system as taking actions, so let's assume the boundary state can be further factored into what I'll call the active boundary, A (the features or parts of the boundary primarily controlled by the viscera, interpretable as "actions" of the system), and the passive boundary, P (the features or parts of the boundary primarily controlled by the environment, interpretable as "perceptions" of the system). These could also be called "output" and "input", respectively, but for later reasons I prefer the active/passive or action/perception terminology.

To formalize this, I want a collection of state spaces and maps, like so:

$f_{WA} = f_{BA} \circ f_{WB}, \quad f_{WP} = f_{BP} \circ f_{WB}$

.... which fit nicely into a diagram like this:

For each time t, we define a state variable for each state space, from Wt:

Each of these factorizations is assumed to be bijective, in the sense of accounting for everything that matters and not double-counting anything, i.e.,

These decompositions needn't correspond to physically distinct or conspicuous regions of space, but it might be helpful to visualize the world — if it were laid out in a physical space — as being broken down into a disjoint union of parts, like this:

Now, when I say the boundary B or its decomposition (A,P) approximately causally separates the viscera from the environment, I mean that the following Pearl-style causal diagram of the world approximately holds:

This diagram is easier to parse if we highlight the arrows that are not present from each time step to the next:

If we fold each horizontal time series into a single node, we get a much simpler-looking dynamic causal diagram (or dynamic Bayes net) and what I'll call a dynamic acausal diagram, as in the earlier Figure 1:

Since I only want to assume these causal relationships are approximately valid, let's describe the approximation quantitatively. Let $\mathrm{Mut}_{W^\omega \sim \phi}(X; Y \mid Z)$ denote the conditional mutual information of $X$ and $Y$ given $Z$, under any given distribution $\phi \in \Delta(W^\omega)$ over world histories on which $(X, Y, Z)$ are defined. Let $\mathrm{Agg}_t$ denote an aggregation function for aggregating quantities over time, like averaging, discounted averaging, or max. Define:

$$\mathrm{Infil}(\phi) := \mathrm{Agg}_{t \ge 0}\, \mathrm{Mut}_{W^\omega \sim \phi}\big((V_{t+1}, A_{t+1});\, E_t \mid (V_t, A_t, P_t)\big)$$

$$\mathrm{Exfil}(\phi) := \mathrm{Agg}_{t \ge 0}\, \mathrm{Mut}_{W^\omega \sim \phi}\big((P_{t+1}, E_{t+1});\, V_t \mid (A_t, P_t, E_t)\big)$$

When infiltration and exfiltration are both zero, a perfect information boundary exists between the (otherwise putative) inside and outside of the system, with a clear separation of perception and action as distinct directions of inward and outward causal influence.
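The infiltration quantity can be sketched for a toy world. Everything specific below is an assumption made for illustration: the variables are single bits, the prior over (V, A, P, E) is uniform, only one time step is considered (so no aggregation over time is needed), and the transition has a hypothetical `leak` parameter controlling how often the environment writes into the viscera directly instead of going through the passive boundary.

```python
import itertools
import math


def conditional_mutual_information(joint):
    """I(X; Y | Z) in nats for a joint distribution {(x, y, z): prob}."""
    p_z, p_xz, p_yz = {}, {}, {}
    for (x, y, z), p in joint.items():
        p_z[z] = p_z.get(z, 0.0) + p
        p_xz[(x, z)] = p_xz.get((x, z), 0.0) + p
        p_yz[(y, z)] = p_yz.get((y, z), 0.0) + p
    return sum(
        p * math.log(p * p_z[z] / (p_xz[(x, z)] * p_yz[(y, z)]))
        for (x, y, z), p in joint.items()
        if p > 0
    )


def inner_transition(v, a, p, e, leak):
    """Toy distribution over (v', a') given the full state (v, a, p, e).

    With probability 1 - leak the viscera copy the percept p, so inward
    influence is routed through the passive boundary. With probability leak
    they copy the environment bit e directly, bypassing the boundary.
    The next action always copies the current viscera bit v.
    """
    dist = {}
    for v_next, weight in ((p, 1.0 - leak), (e, leak)):
        if weight > 0:
            key = (v_next, v)
            dist[key] = dist.get(key, 0.0) + weight
    return dist


def one_step_infiltration(leak):
    """Mut((V', A'); E | (V, A, P)) under a uniform prior over (v, a, p, e)."""
    joint = {}
    for v, a, p, e in itertools.product((0, 1), repeat=4):
        for inner_next, prob in inner_transition(v, a, p, e, leak).items():
            key = (inner_next, e, (v, a, p))
            joint[key] = joint.get(key, 0.0) + prob / 16.0
    return conditional_mutual_information(joint)


sealed = one_step_infiltration(0.0)  # boundary fully mediates inward influence
leaky = one_step_infiltration(0.5)   # half of the influence bypasses the boundary
```

With `leak = 0.0` the infiltration is zero, and it grows as more of the environment's influence bypasses P. Exfiltration could be sketched dually by swapping the roles of (V, A) and (E, P).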

With all of the above, a short yet descriptive answer to the question "what is a living system boundary?" is:

Why this phrase? Well, together the boundary components (A,P) are:

In the next section, infiltration and exfiltration will be related to a decision rule followed by the organism.

Definition part (d): "making decisions"

Next, let's formalize how a living system implements a decision-making process that perpetuates the defining properties of the system (including this one!). In plain terms, the system takes actions that continue its own survival. Somewhat circularly, the survival of the system as a decision-making entity involves perpetuating the particular sense in which it is a decision-making entity. So, the definition here is going to involve a fixed-point-like constraint; stay tuned 🙂

More formally, for each time step t, we need to characterize the degree to which the true transition probability function

can be summarized by a description of the form "the system makes a decision about how to transform its viscera and action subject to some (soft) constraints". So, define a decision rule as any function of the form

Notice how Tt conditions on all of (Vt,At,Pt,Et), while a decision rule r will only look at (Vt,At,Pt) as an input. Thus, using r to predict (Vt+1,At+1) implicates the imperfectly-accurate assumption that the system's "decisions" are not directly affected by its environment. This assumption holds precisely when the quantity Infil(ϕ) is zero.

Dual to this we have what might be called a situation rule:

The situation rule works well exactly when Exfil(ϕ) is zero. The rest of this post is focussed on r, and dual statements will exist for s.

As a reminder: these variables are not compressed representations. The states (Vt,At,Pt,Et) are not a simplified description of the world; they together describe literally everything in the world. In a later post I might talk about compressed versions of these variables (Vct,Act,Pct,Ect) that could be represented inside the mind of the organism itself or another organism, but for now we're not assuming any kind of lossy compression. Nonetheless, despite the map W→(V,A,P,E) being lossless, probably every decision rule r will be somewhat wrong as a description of reality, because by construction it ignores the direct causal influences of E and V on each other, of E on A, and of V on P.

Now, let's suppose we have some parametrized space of decision rules rθ, i.e., a decision rule rθ for every parameter θ in a parameter space Θ. For example, if rθ is defined by a neural net with a vector θ of N weights, Θ could be ℝ^N. Procedurally, rθ could implement a process like "Compute a Bayesian update by observing (Vt,Pt), store the result in Vt+1, and choose action At+1 randomly amongst options that approximately optimize expected utility according to some utility function". More realistically, rθ could be an implementation of a satisficing rule rather than an optimization. The particular choice of rθ and its implementation are not crucial for this post, only the type signature of r∙ as a map Θ→V×A×P→Δ(V×A).
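The type signature Θ→V×A×P→Δ(V×A) can be illustrated with a small sketch. The construction below is an assumption chosen for simplicity, not the post's: each of V, A, P is a single bit, and θ is a flat table of logits turned into a distribution by a softmax, standing in for the neural net mentioned above.

```python
import itertools
import math
import random

STATES = (0, 1)
INPUTS = list(itertools.product(STATES, repeat=3))   # all (v, a, p)
OUTPUTS = list(itertools.product(STATES, repeat=2))  # all (v', a')
N = len(INPUTS) * len(OUTPUTS)  # dimension of the parameter space


def make_rule(theta):
    """Curried map from parameters to a decision rule.

    theta is a flat list of N logits; the returned rule maps (v, a, p) to a
    distribution over (v', a'), represented as a dict of probabilities.
    """
    if len(theta) != N:
        raise ValueError(f"expected {N} parameters, got {len(theta)}")

    def rule(v, a, p):
        row = INPUTS.index((v, a, p)) * len(OUTPUTS)
        logits = theta[row:row + len(OUTPUTS)]
        peak = max(logits)  # subtract the max for numerical stability
        weights = [math.exp(x - peak) for x in logits]
        total = sum(weights)
        return {out: w / total for out, w in zip(OUTPUTS, weights)}

    return rule


rng = random.Random(0)
theta = [rng.gauss(0.0, 1.0) for _ in range(N)]
r_theta = make_rule(theta)
distribution = r_theta(0, 1, 0)  # a distribution over (v', a')
```

Note that `rule` never receives the environment state e, which mirrors the point that a decision rule only looks at (Vt,At,Pt).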

Next, define a description error function DErr(Tt,r) to be a function that evaluates the error of a decision rule r as a description of the true transition rule Tt, from the perspective of anyone trying to predict or describe how the system behaves at time t. For instance, we could use either of these:

Or:

As with rθ, the particular implementation of DErr is not important for this post; only that it measures the failure of r as a description of the system's true transition function Tt, and in particular it should be zero precisely when Tt agrees perfectly with r. When no confusion will result, I'll write Tt=r as shorthand for ∀(v,a,p,e,v′,a′), r(v,a,p)(v′,a′) = P(v′,a′∣v,a,p,e). Thus we have:

From this very natural assumption on the meaning of "description error", it follows that:

In other words, for the decision rule to perfectly describe the system, there must be no infiltration, i.e., no inward boundary crossing.
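One way to see this is with a concrete description error. The sketch below assumes a particular choice that is not necessarily either of the post's examples: the worst-case total variation distance between the true transition and the rule's prediction. That choice is zero exactly when the two agree on every state. The bit-valued variables and the two toy transitions are hypothetical.

```python
import itertools

STATES = (0, 1)


def total_variation(dist_a, dist_b):
    """Total variation distance between two dict-valued distributions."""
    keys = set(dist_a) | set(dist_b)
    return 0.5 * sum(abs(dist_a.get(k, 0.0) - dist_b.get(k, 0.0)) for k in keys)


def description_error(transition, rule):
    """Worst case over (v, a, p, e) of the distance between T and r."""
    return max(
        total_variation(transition(v, a, p, e), rule(v, a, p))
        for v, a, p, e in itertools.product(STATES, repeat=4)
    )


def rule(v, a, p):
    """Decision rule: store the percept in the viscera, act on the old viscera."""
    return {(p, v): 1.0}


def sealed_transition(v, a, p, e):
    """True dynamics that ignore e, so the rule describes them exactly."""
    return rule(v, a, p)


def leaky_transition(v, a, p, e):
    """True dynamics where, 10% of the time, e overwrites the viscera directly."""
    dist = {(p, v): 0.9}
    dist[(e, v)] = dist.get((e, v), 0.0) + 0.1
    return dist


sealed_error = description_error(sealed_transition, rule)
leaky_error = description_error(leaky_transition, rule)
```

The sealed transition has zero description error, while the leaky one cannot be matched by any rule of the form r(v,a,p), since its output distribution changes with e.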

Important: Note that negative description error, −DErr, is not a measure of how "optimally" the system makes decisions or predictions, it's a measure of how well the rule rθ predicts what the system will do.

Next, we need another function Agg′ for aggregating description error over time, e.g., max or avg. Here Agg′ may or may not be the same as the previous Agg function, but there should be some relationship between them such that bounding one can bound the other (e.g., if they're both Avg or Max then this works). For any such function Agg′, define an aggregate description error function as

We say rθ is a good fit for the time interval [σ,τ) if ADEσ,τ(rθ) is small. This implies several things:

Thus, if rθ is a good fit, then 1 & 2 together say that the decisions made by rθ will perpetuate the four defining properties (a)-(d) of the definition.
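The "good fit on an interval" idea can be sketched as follows, assuming the per-time-step description errors have already been computed. The error values and the helper name are made up, and the half-open interval [σ,τ) is represented as a Python slice.

```python
from statistics import mean


def aggregate_description_error(errors, sigma, tau, agg=max):
    """Aggregate per-step description errors over the interval [sigma, tau)."""
    window = errors[sigma:tau]
    if not window:
        raise ValueError("the interval [sigma, tau) contains no time steps")
    return agg(window)


# Hypothetical per-step errors; the rule stops describing the system at t = 3.
errors = [0.0, 0.02, 0.01, 0.4, 0.03]

early_max = aggregate_description_error(errors, 0, 3)            # max over t = 0, 1, 2
early_avg = aggregate_description_error(errors, 0, 3, agg=mean)  # average over the same steps
full_max = aggregate_description_error(errors, 0, 5)             # includes the bad step
```

In this toy data the rule is a good fit on [0,3) but not on [0,5). Since an average never exceeds the corresponding max, bounding the max-aggregated error also bounds the averaged one, which is one simple instance of the relationship between aggregation functions mentioned above.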

Dual to this, for a situation rule sη to work well requires that exfiltration is not too large, and for each t, (Et,Pt) do not destroy the present or subsequent validity of sη too badly, i.e., the environment "is sufficiently hospitable". This may be viewed as a definition for a living system having a niche, a property I discussed as a subsection of Part 2 in the context of jobs and work/life balance.

Together, the survival of the organism requires both rθ and sη to not violate the future validity of rθ and sη too badly.

Discussion

Non-violent boundary-crossings

Real-world living systems sometimes do funky things like opening up their boundaries for each other, or even merging. For instance, consider two paramecia named Alex and Bailey. Part of Alex's decision rule rAlex involves deciding to open Alex's boundary in order to exchange DNA with Bailey. If Alex does this in a way that allows Bailey's decision rule rBailey to continue operating and decide for Bailey to open up, then the exchange of DNA has not violated Bailey's decision rule. In other words, while there is a boundary crossing event, one could say it is not a violation of Bailey's boundary, because it respected (proceeded in accordance with) Bailey's decision rule.

Respect for boundaries as non-arbitrary coordination norms

Epistemic status: speculation, but I think there's a theorem here.

In my current estimation, respect for boundaries as described above is more than a matter of Alex and Bailey respecting each other's "preferences" as paramecia. I hypothesize that, in the emergence of fairly arbitrary colonies of living systems, standard protocols for respecting boundaries tend to emerge as well. In other words, respect for boundaries may be a "Schelling" concept that plays a crucial role in coordinating and governing positive-sum interactions between living systems. Essentially, preferences that are easily expressed in broadly and intersubjectively meaningful concepts — like Shannon's mutual information and Pearl's causation — are more likely to be pluralistically represented and agreed upon than other more idiosyncratic preferences.

Incidentally, respect for human autonomy — the ability to make decisions — is something that many humans want to preserve through the advent of pervasive AI services and/or super-human agents. Interestingly, respect for autonomy is one of the most strongly codified ethical principles for how the scientific establishment — a kind of super-human intelligence — is supposed to treat experimental human subjects. See the Belmont Report, which is not only required reading for scientists performing human studies at many US universities, but also carries legal force in defining violations of human rights by the scientific establishment. Personally, I find it to be one of the most direct and to-the-point codifications of how a highly intelligent non-human institution (science) is supposed to treat human beings.

Comparison to related work

Cartesian frames

The formalism here is a lot like a time-extended version of a Cartesian Frame (Garrabrant, 2020), except that what Scott calls an "agent" is further subdivided here into its "boundary" and its "viscera". I'm also not using the word "agent" because my focus is on living systems, which are often not very agentic, even when they can be said to have preferences in a meaningful way. After a reading of this draft, Scott also informed me that he'd like to reserve the use of the term "frame" when talking about "factoring" (in feature space), and "boundary" for when talking about "subdividing" (in physical space). I agree with drawing this distinction, but neither Scott nor I is currently excited about the word "frame" for naming the dual concept to "boundary".

Active inference

The physical ontology here is very similar to Prof. Karl Friston's view of living systems as dynamical subsystems engaging in what Friston calls active inference (Friston, 2009). Notably, Friston is one of the most widely cited scientists alive today, with over 300,000 citations on Google Scholar. Unfortunately, I find Friston's writings to be somewhat inscrutable regarding what does or doesn't constitute a "decision", "inference", or "action". So, despite the at-least-superficial philosophical alignment with Friston's perspective, I'm building things mathematically from scratch using Judea Pearl's approach of modeling causality with Bayes nets, which I find much more readily applicable in a decision-theoretic setting.

After finishing my second draft of this post, I found out about a book by two other authors trying to clarify Friston's active inference principle, with Friston as a co-author (Parr, Pezzulo, and Friston, 2022), which seems to have gained in popularity since I began writing this sequence. Unlike me, they assume the system is a "minimizer" of a free energy objective, which I think is a crucial mistake, on three counts:

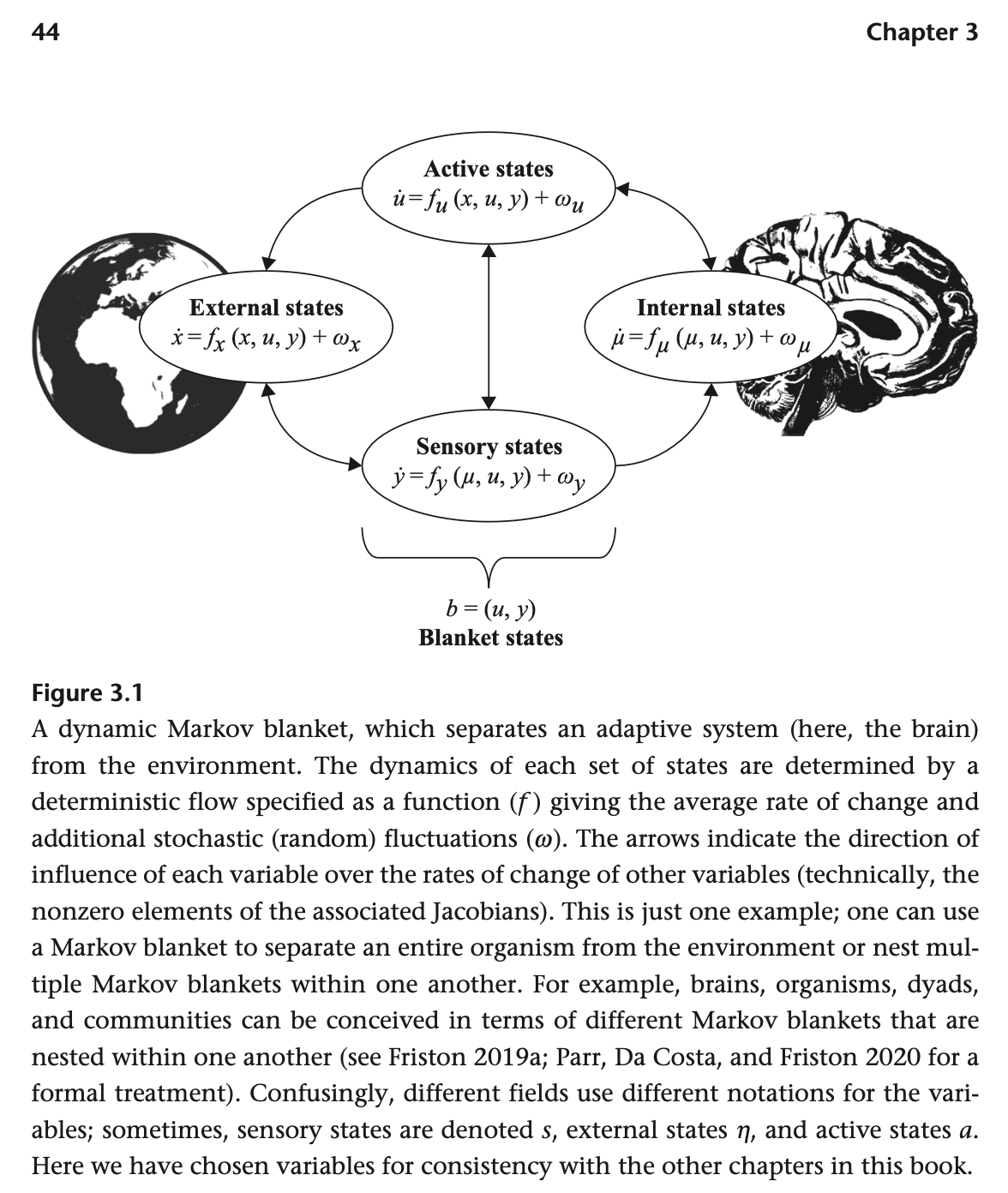

Despite these differences, on page 44 they draw a decomposition nearly identical to my Figure 1, and refer to the separation of the interior from the exterior as a Markov blanket. They even talk about communities as having boundaries, as in Part 1 of this series:

Overall, I find these similarities encouraging. With so many people inspired by Friston's writings, it makes sense that people are converging somewhat to try to clarify ideas in this space. On page ix, Friston humbly writes:

"I have a confession to make. I did not write much of this book. Or, more precisely, I was not allowed to. This book’s agenda calls for a crisp and clear writing style that is beyond me. Although I was allowed to slip in a few of my favorite words, what follows is a testament to Thomas and Giovanni, their deep understanding of the issues at hand, and, importantly, their theory of mind—in all senses."

Functional decision theory (FDT)

FDT (Yudkowsky and Soares, 2017) presents a promising way for artificial or living systems in the physical world to coordinate better, by noticing that they're essentially implementing the same function and choosing their outputs accordingly. When an agent in the 3D world starts thinking like an FDT agent, it draws its boundary around all parts of the world that are running the same function, and considers them all to be "itself". This raises a question: how do two identical or nearly identical algorithms recognize (or decide) that they are in fact implementing essentially the same function? I'm not going to go deep into that here, but my best short answer is that algorithms still need to draw some boundaries in some abstract algorithm space (e.g., in the Solomonoff prior or the speed prior) that delineate what are considered their inputs, their outputs, their internals, and their externals. So, FDT sort of punts the problem of where to draw boundaries, moving the question out of physical space and into the space of (possible) algorithms.

Markov blankets

Many other authors have elaborated on the importance of the Markov blanket concept, including LessWrong author John Wentworth, who I've seen presenting on and discussing the idea at several AI safety related meetings. I think for decision-theoretic purposes, one needs to further subdivide an organism's Markov blanket into active and passive components, for action and perception.

Recap

In this post, I delineated 8 problems that I intend to address in terms of a formal definition of boundaries, and laid out the basic structure of the formal definition. A living system is defined in terms of a decomposition of the world (with state variable W) into an environment (state: E), active boundary (state: A), passive boundary (state: P), and viscera (state: V). The boundary state B=(A,P) forms an "approximate directed Markov blanket" separating the viscera from the environment, with A mediating outward causal influence and P mediating inward causal influence. This allows conceiving of the living system (V,A,P) as engaged in decision making according to some decision rule rθ:V×A×P→Δ(V×A) that approximates reality. In order to "survive" as an rθ-following decision-making entity, the system must make decisions in a manner that does not bring an end to rθ as an approximately-valid description of its behavior, and in particular, does not destroy the approximate Markov property of the boundary B, and does not destroy the outward and inward causal influence directions of the active boundary A and passive boundary P, respectively. In other words, rθ is assumed to perpetuate rθ. This assumption is justified by the observation that self-perpetuating systems are made more noticeable and impactful by their continued existence.

From there, I argue briefly that non-violence and respect for boundaries are non-arbitrary coordination norms, because of the ability to define boundaries entirely information-theoretically, without reference to other more idiosyncratic aspects of individual preferences. Comparisons are drawn to Cartesian frames (which are not time-extended), functional decision theory (which conceives of decision-theoretic causation in a logical space rather than a physical space), and Friston's notion of active inference. After writing the definitions, a strong similarity was found to Chapter 3 of Parr (2022), in describing perception ("sensing") and action systems as constituting a Markov blanket, around both individual organisms and communities. However, Friston and Parr both characterize the living system in question as an optimizer, which specifically minimizes surprise to its world model. I consider both of these assumptions to be problematic, enough so that I don't believe the active inference concept is quite right for capturing respect for boundaries as a moral precept.

Reminder to vote

If you have a minute to cast a vote on which alignment-related problem I should most focus on applying these definitions to in Part 3b, please do so here. Thanks!