Irving's team's terminology has been "behavioural alignment" for the green box - https://arxiv.org/pdf/2103.14659.pdf

Here is some clearer evidence that broader usages of "AI alignment" were common from the beginning:

The “alignment problem for advanced agents” or “AI alignment” is the overarching research topic of how to develop sufficiently advanced machine intelligences such that running them produces good outcomes in the real world.

(I couldn't find an easy way to view the original 2015 version, but do have a screenshot that I can produce upon request showing a Jan 2017 edit on Arbital that already had this broad definition.)

By “AI alignment” I mean building AI systems which robustly advance human interests.

AI Alignment focuses on ways to ensure that future smarter than human intelligence will have goals aligned with the goals of humanity. Many approaches to AI Alignment deserve attention. This includes technical and philosophical topics, as well as strategic research about related social, economic or political issues.

In the 2017 post Vladimir Slepnev is talking about your AI system having particular goals, isn't that the narrow usage? Why are you citing this here?

I misread the date on the Arbital page (since Arbital itself doesn't have timestamps and it wasn't indexed by the Wayback machine until late 2017) and agree that usage is prior to mine.

Other relevant paragraphs from the Arbital post:

“AI alignment theory” is meant as an overarching term to cover the whole research field associated with this problem, including, e.g., the much-debated attempt to estimate how rapidly an AI might gain in capability once it goes over various particular thresholds.

Other terms that have been used to describe this research problem include “robust and beneficial AI” and “Friendly AI”. The term “value alignment problem” was coined by Stuart Russell to refer to the primary subproblem of aligning AI preferences with (potentially idealized) human preferences.

Some alternative terms for this general field of study, such as ‘control problem’, can sound adversarial—like the rocket is already pointed in a bad direction and you need to wrestle with it. Other terms, like ‘AI safety’, understate the advocated degree to which alignment ought to be an intrinsic part of building advanced agents. E.g., there isn’t a separate theory of “bridge safety” for how to build bridges that don’t fall down. Pointing the agent in a particular direction ought to be seen as part of the standard problem of building an advanced machine agent. The problem does not divide into “building an advanced AI” and then separately “somehow causing that AI to produce good outcomes”, the problem is “getting good outcomes via building a cognitive agent that brings about those good outcomes”.

My personal view is that given all of this history and the fact that this forum is named the "AI Alignment Forum", we should not redefine "AI Alignment" to mean the same thing as "Intent Alignment". I feel like to the extent there is confusion/conflation over the terminology, it was mainly due to Paul's (probably unintentional) overloading of "AI alignment" with the new and narrower meaning (in Clarifying “AI Alignment”), and we should fix that error by collectively going back to the original definition, or in some circumstances where the risk of confusion is too great, avoiding "AI alignment" and using some other term like "AI x-safety". (Although there's an issue with "existential risk/safety" as well, because "existential risk/safety" covers problems that aren't literally existential, e.g., where humanity survives but its future potential is greatly curtailed. Man, coordination is hard.)

I feel like to the extent there is confusion/conflation over the terminology, it was mainly due to Paul's (probably unintentional) overloading of "AI alignment" with the new and narrower meaning (in Clarifying “AI Alignment”)

I don't think this is the main or only source of confusion:

the overarching research topic of how to develop sufficiently advanced machine intelligences such that running them produces good outcomes in the real world

I want to emphasize again that this definition seems extremely bad. A lot of people think their work helps AI actually produce good outcomes in the world when run, so pretty much everyone would think their work counts as alignment.

It includes all work in AI ethics, if in fact that research is helpful for ensuring that future AI has a good outcome. It also includes everything people work on in AI capabilities, if in fact capability increases improve the probability that a future AI system produces good outcomes when run. It's not even restricted to safety, since it includes realizing more upside from your AI. It includes changing the way you build AI to help address distributional issues, if the speaker (very reasonably!) thinks those are important to the value of the future. I didn't take this seriously as a definition and didn't really realize anyone was taking it seriously, I thought it was just an instance of speaking loosely.

But if people are going to use the term this way, I think at a minimum they cannot complain about linguistic drift when "alignment" means anything at all. Obviously people are going to disagree about what AI features lead to "producing good outcomes." Almost all the time I see definitional arguments it's where people (including Eliezer) are objecting that "alignment" includes too much stuff and should be narrower, but this is obviously not going to be improved by adopting an absurdly broad definition.

I'm not sure what order the history happened in and whether "AI Existential Safety" got rebranded into "AI Alignment" (my impression is that AI Alignment was first used to mean existential safety, and maybe this was a bad term, but it wasn't a rebrand)

There's the additional problem where "AI Existential Safety" easily gets rounded to "AI Safety" which often in practice means "self driving cars" as well as overlapping with an existing term-of-art "community safety" which means things like harassment.

I don't have a good contender for a short phrase that is actually reasonable to say that conveys "Technical AI Existential Safety" work.

But if we had such a name, I would be in favor of renaming the AI Alignment Forum to an easy-to-say variation on "The Technical Research for AIdontkilleveryoneism Forum". (I think this was always the intended subject matter of the forum.) And that forum (convergently) has Alignment research on it, but only insofar as it's relevant to "Technical Research for AIdontkilleveryoneism".

"AI Safety" which often in practice means "self driving cars"

This may have been true four years ago, but ML researchers at leading labs rarely directly work on self-driving cars (e.g., research on sensor fusion). AV has not been hot in quite a while. Fortunately, now that AGI-like chatbots are popular, we're moving out of the realm of talking about making very narrow systems safer. The association with AV was not that bad since it was about getting many nines of reliability/extreme reliability, which was a useful subgoal. Unfortunately the world has not been able to make a DL model completely reliable in any specific domain (even MNIST).

Of course, they weren't talking about x-risks, but neither are industry researchers using the word "alignment" today to mean they're fine-tuning a model to be more knowledgeable or making models better satisfy capabilities wants (sometimes dressed up as "human values").

If you want a word that reliably denotes catastrophic risks that is also mainstream, you'll need to make catastrophic risk ideas mainstream. Expect it to be watered down for some time, or expect it not to go mainstream.

Unfortunately, I think even "catastrophic risk" has a high potential to be watered down and be applied to situations where dozens as opposed to millions/billions die. Even existential risk has this potential, actually, but I think it's a safer bet.

I’m not sure what order the history happened in and whether “AI Existential Safety” got rebranded into “AI Alignment” (my impression is that AI Alignment was first used to mean existential safety, and maybe this was a bad term, but it wasn’t a rebrand)

There was a pretty extensive discussion about this between Paul Christiano and me. tl;dr "AI Alignment" clearly had a broader (but not very precise) meaning than "How to get AI systems to try to do what we want" when it first came into use. Paul later used "AI Alignment" for his narrower meaning, but after that discussion, switched to using "Intent Alignment" for this instead.

tl;dr "AI Alignment" clearly had a broader (but not very precise) meaning than "How to get AI systems to try to do what we want" when it first came into use. Paul later used "AI Alignment" for his narrower meaning, but after that discussion, switched to using "Intent Alignment" for this instead.

I don't think I really agree with this summary. Your main justification was that Eliezer used the term with an extremely broad definition on Arbital, but the Arbital page was written way after a bunch of other usage (including after me moving to ai-alignment.com I think). I think very few people at the time would have argued that e.g. "getting your AI to be better at politics so it doesn't accidentally start a war" is value alignment though it obviously fits under Eliezer's definition.

(ETA: actually the Arbital page is old, it just wasn't indexed by the Wayback Machine and doesn't come with a date on Arbital itself. So I agree with the point that this post is evidence for an earlier very broad usage.)

I would agree with "some people used it more broadly" but not "clearly had a broader meaning." Unless "broader meaning" is just "used very vaguely such that there was no agreement about what it means."

(I don't think this really matters except for the periodic post complaining about linguistic drift.)

Your main justification was that Eliezer used the term with an extremely broad definition on Arbital, but the Arbital page was written way after a bunch of other usage (including after me moving to ai-alignment.com I think).

Eliezer used "AI alignment" as early as 2016 and ai-alignment.com wasn't registered until 2017. Any other usage of the term that potentially predates Eliezer?

But that talk appears to use the narrower meaning, not the crazy broad one from the later Arbital page. Looking at the transcript:

What part of this talk makes it seem clear that alignment is about the broader thing rather than about making an AI that's not actively trying to kill you?

FWIW, I didn't mean to kick off a historical debate, which seems like probably not a very valuable use of y'all's time.

I say it is a rebrand of the "AI (x-)safety" community.

When AI alignment came along we were calling it AI safety, even though it was really basically AI existential safety all along that everyone in the community meant. "AI safety" was (IMO) a somewhat successful bid for more mainstream acceptance, that then led to dilution and confusion, necessitating a new term.

I don't think the history is that important; what's important is having good terminology going forward.

This is also why I stress that I work on AI existential safety.

So I think people should just say what kind of technical work they are doing and "existential safety" should be considered as a social-technical problem that motivates a community of researchers, and used to refer to that problem and that community. In particular, I think we are not able to cleanly delineate what is or isn't technical AI existential safety research at this point, and we should welcome intellectual debates about the nature of the problem and how different technical research may or may not contribute to increasing x-safety.

Nice post.

I’m open-minded, but wanted to write out what I’ve been doing as a point of comparison & discussion. Here’s my terminology as of this writing:

This has one obvious unintuitive aspect, and I discuss it in footnote 2 here—

By this definition of “safety”, if an evil person wants to kill everyone, and uses AGI to do so, that still counts as successful “AGI safety”. I admit that this sounds rather odd, but I believe it follows standard usage from other fields: for example, “nuclear weapons safety” is a thing people talk about, and this thing notably does NOT include the deliberate, authorized launch of nuclear weapons, despite the fact that the latter would not be “safe” for anyone, not by any stretch of the imagination. Anyway, this is purely a question of definitions and terminology. The problem of people deliberately using AGI towards dangerous ends is a real problem, and I am by no means unconcerned about it. I’m just not talking about it in this particular series. See Post 1, Section 1.2.

I haven’t personally been using the term “AI existential safety”, but using it for the brown box seems pretty reasonable to me.

For the purple box, there’s a use-mention issue, I think? Copying from my footnote 3 here:

Some researchers think that the “correct” design intentions (for an AGI’s motivation) are obvious, and define the word “alignment” accordingly. Three common examples are (1) “I am designing the AGI so that, at any given point in time, it’s trying to do what its human supervisor wants it to be trying to do”—this AGI would be “aligned” to the supervisor’s intentions. (2) “I am designing the AGI so that it shares the values of its human supervisor”—this AGI would be “aligned” to the supervisor. (3) “I am designing the AGI so that it shares the collective values of humanity”—this AGI would be “aligned” to humanity.

I’m avoiding this approach because I think that the “correct” intended AGI motivation is still an open question. For example, maybe it will be possible to build an AGI that really just wants to do a specific, predetermined, narrow task (e.g. design a better solar cell), in a way that doesn’t involve taking over the world etc. Such an AGI would not be “aligned” to anything in particular, except for the original design intention. But I still want to use the term “aligned” when talking about such an AGI.

Of course, sometimes I want to talk about (1,2,3) above, but I would use different terms for that purpose, e.g. (1) “the Paul Christiano version of corrigibility”, (2) “ambitious value learning”, and (3) “CEV”.

(I could have also said “intent alignment” for (1), I think.)

I don't think we should try and come up with a special term for (1).

The best term might be "AI engineering". The only thing it needs to be distinguished from is "AI science".

I think ML people overwhelmingly identify as doing one of those 2 things, and find it annoying and ridiculous when people in this community act like we are the only ones who care about building systems that work as intended.

I think the terms "AI Alignment" and "AI existential safety" are often used interchangeably, leading the ideas to be conflated.

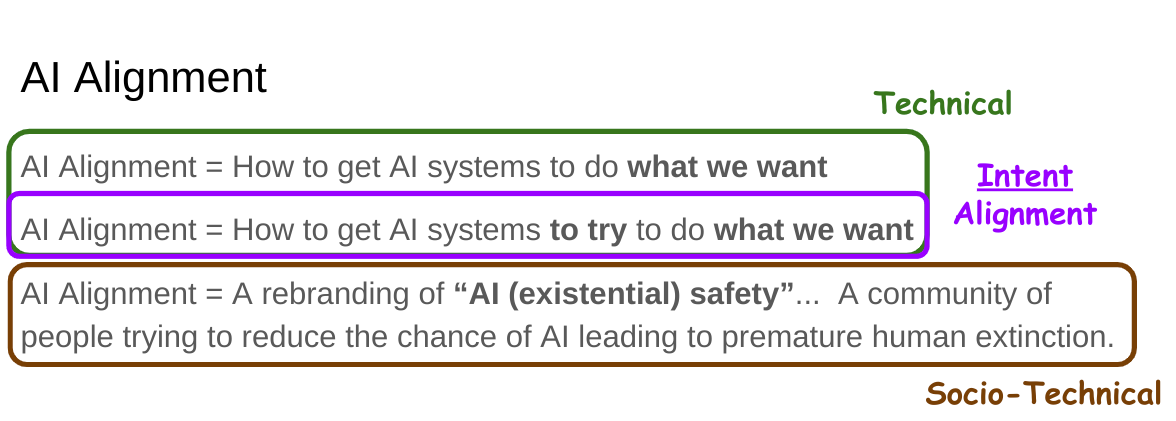

In practice, I think "AI Alignment" is mostly used in one of the following three ways, and should be used exclusively for Intent Alignment (with some vagueness about whose intent, e.g. designer vs. user):

1) AI Alignment = How to get AI systems to do what we want

2) AI Alignment = How to get AI systems to try to do what we want

3) AI Alignment = A rebranding of “AI (existential) safety”... A community of people trying to reduce the chance of AI leading to premature human extinction.

The problem with (1) is that it is too broad, and invites the response: "Isn't that what most/all AI research is about?"

The problem with (3) is that it suggests that (Intent) Alignment is the one-and-only way to increase AI existential safety.

Some reasons not to conflate (2) and (3):

In my experience, this sloppy use of terminology is common in this community, and leads to incorrect reasoning (if not in those using it then certainly at least sometimes in those hearing/reading it).

EtA: This Tweet and associated paper make a similar point: https://twitter.com/HeidyKhlaaf/status/1634173714055979010