We used to have a feature for crossposting to the EA Forum. It caused a lot of bugs that were difficult to deal with and didn't feel like it was pulling its weight, so we removed it in the latest update.

If you have 100 or more karma on both LessWrong and the EA Forum, you can automatically crosspost from LessWrong to the EA Forum (and from the EA Forum to LessWrong). You also need to have accepted the EA Forum's Terms of Use, which you can do by trying to create a new post on the EA Forum (if you haven't already done so after the Terms of Use requirement was put in place).

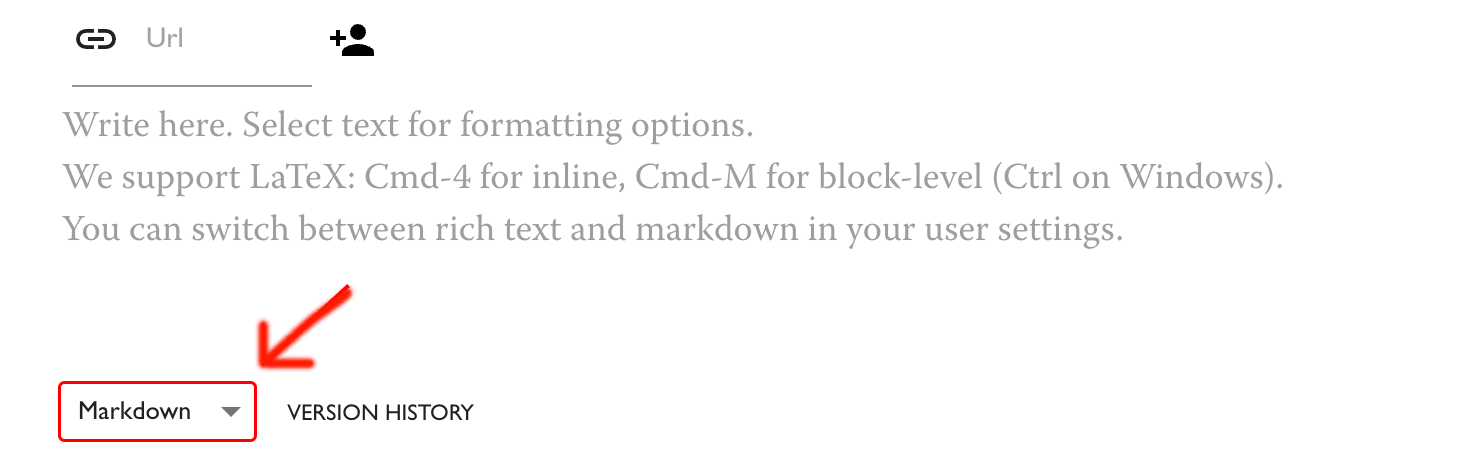

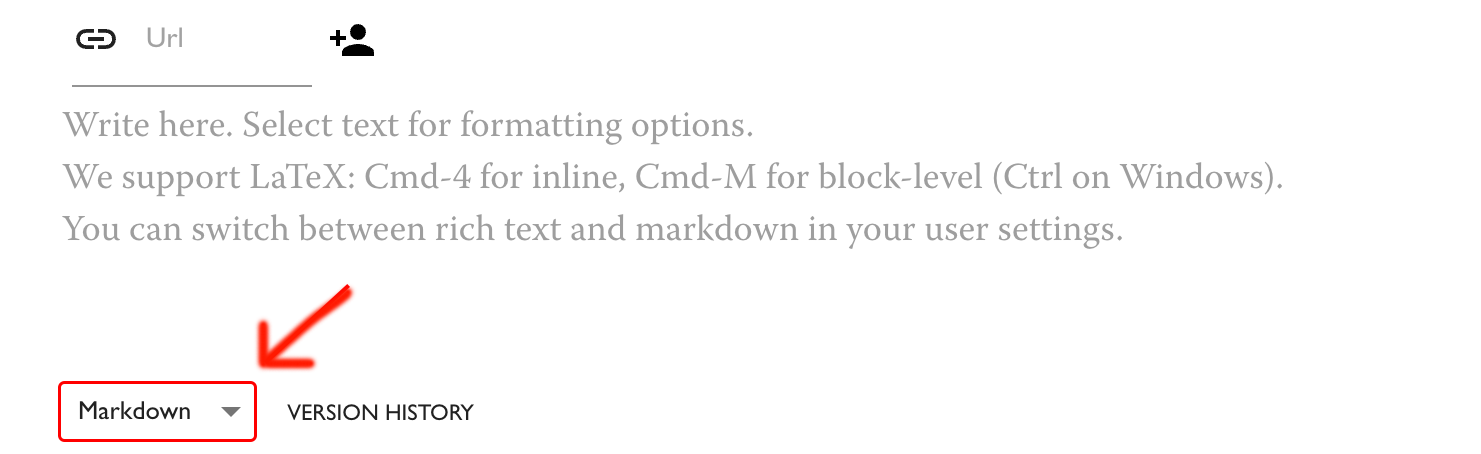

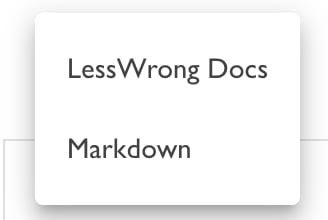

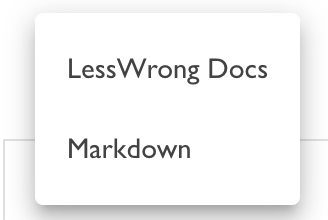

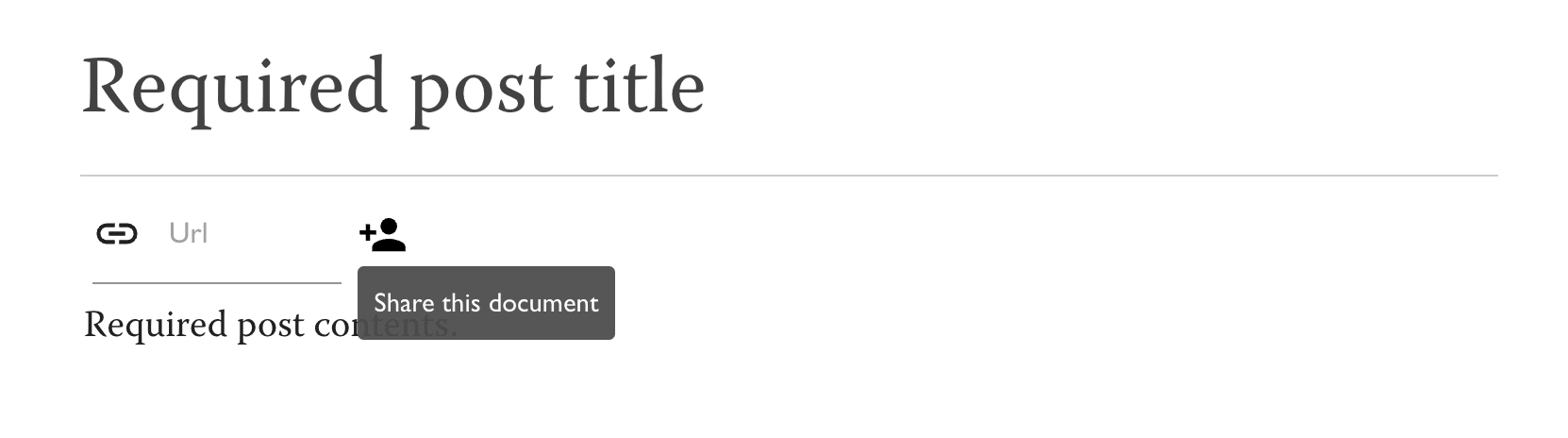

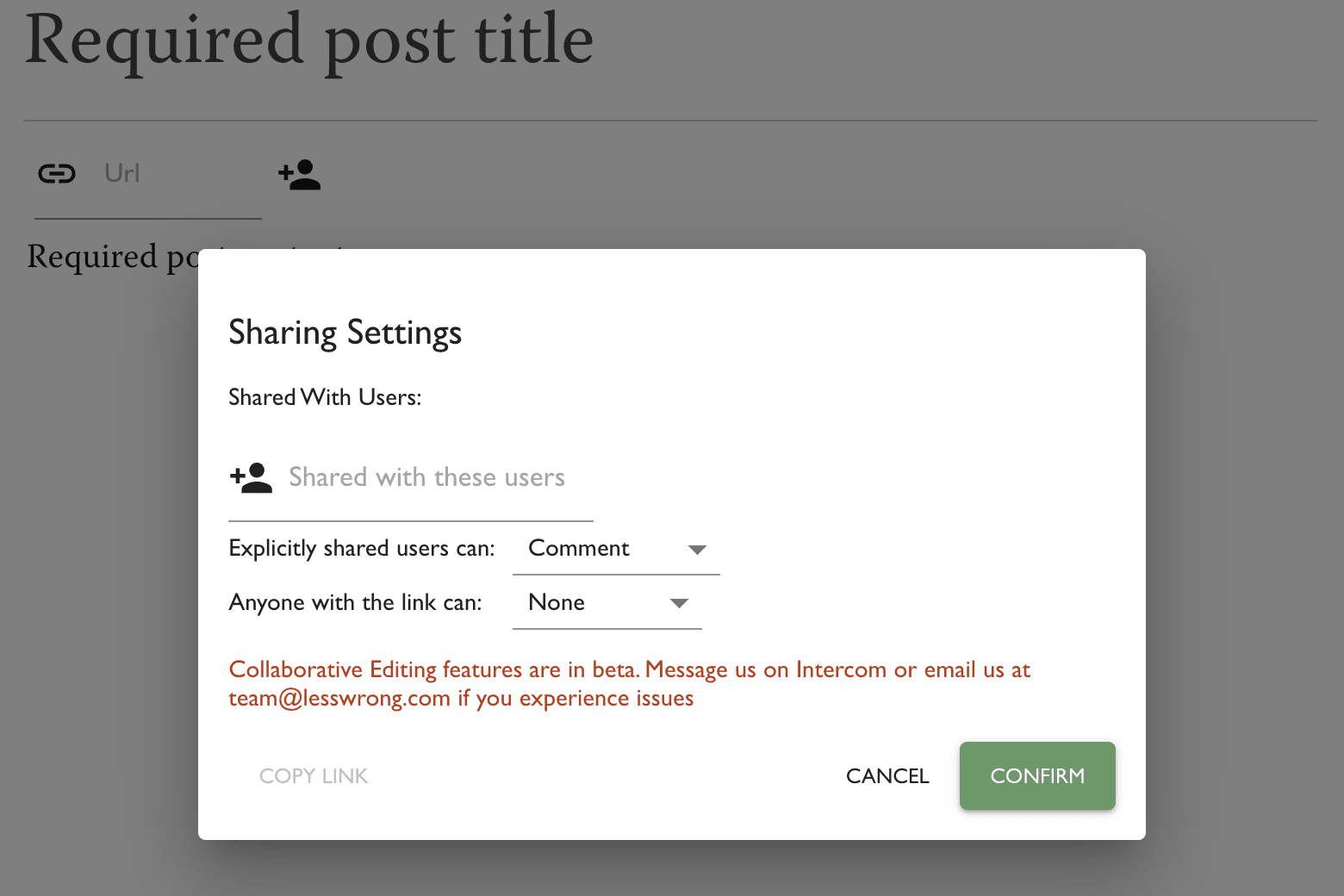

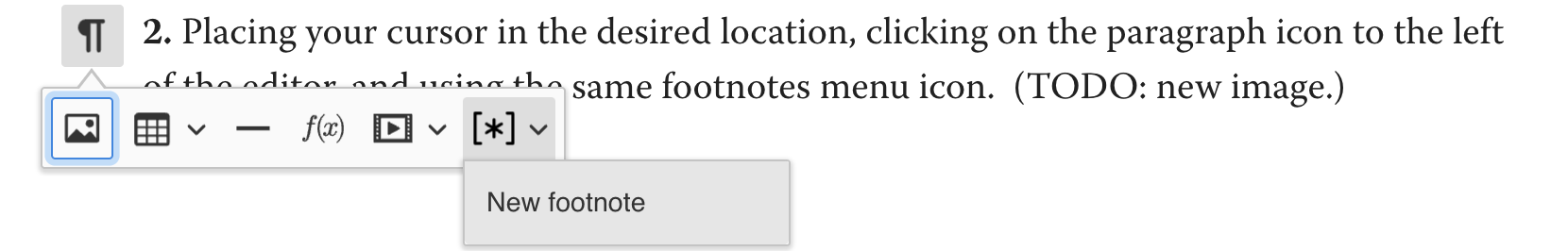

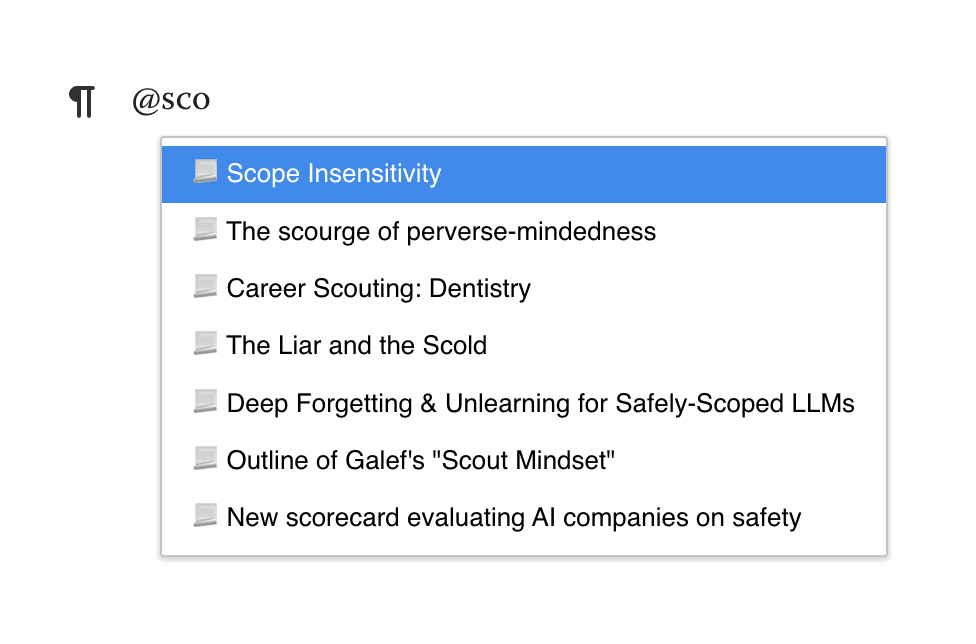

You should be logged in on both sites. To ensure that a post is crossposted after it's published, or to crosspost an already-published post, follow the authentication flow in the Options menu on the post editor page.
