We used to have a feature for crossposting to the EA Forum. It caused a lot of bugs that were difficult to deal with and didn't feel like it was pulling its weight, so we removed it in the latest update.

Previously, if you had 100 or more karma on both LessWrong and the EA Forum, you could automatically crosspost from LessWrong to the EA Forum (and from the EA Forum to LessWrong). You also needed to have accepted the EA Forum's Terms of Use, which you could do by trying to create a new post on the EA Forum (if you hadn't already done so after the Terms of Use requirement was put in place).

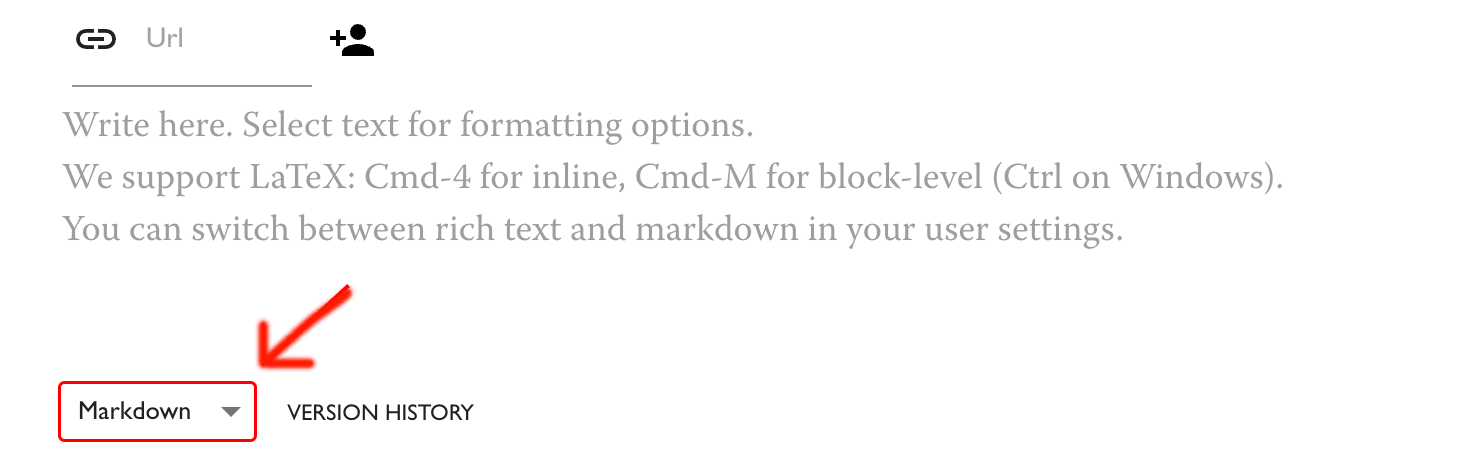

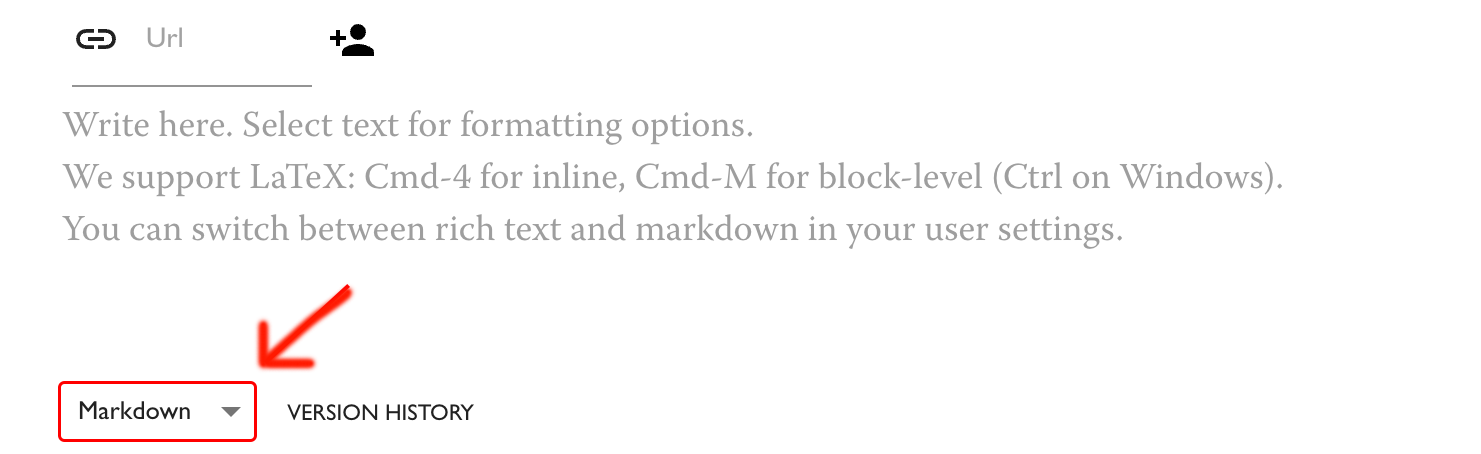

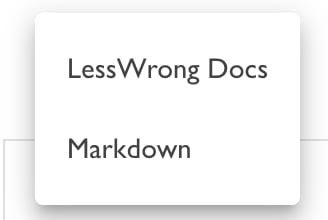

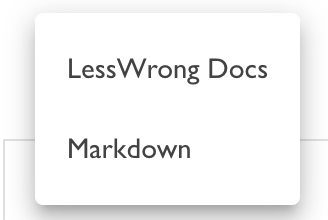

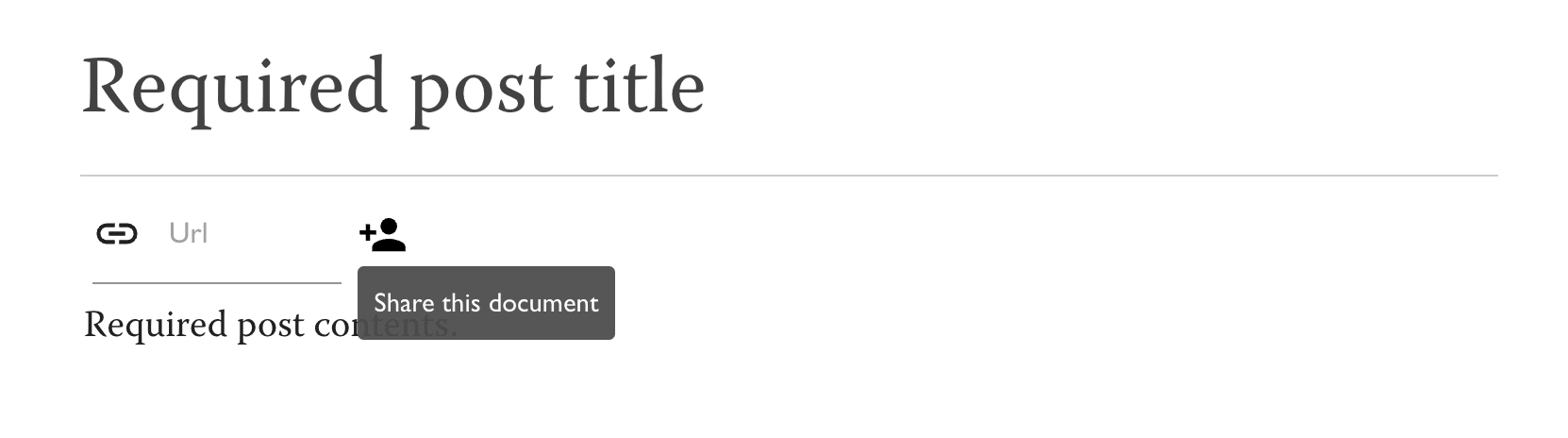

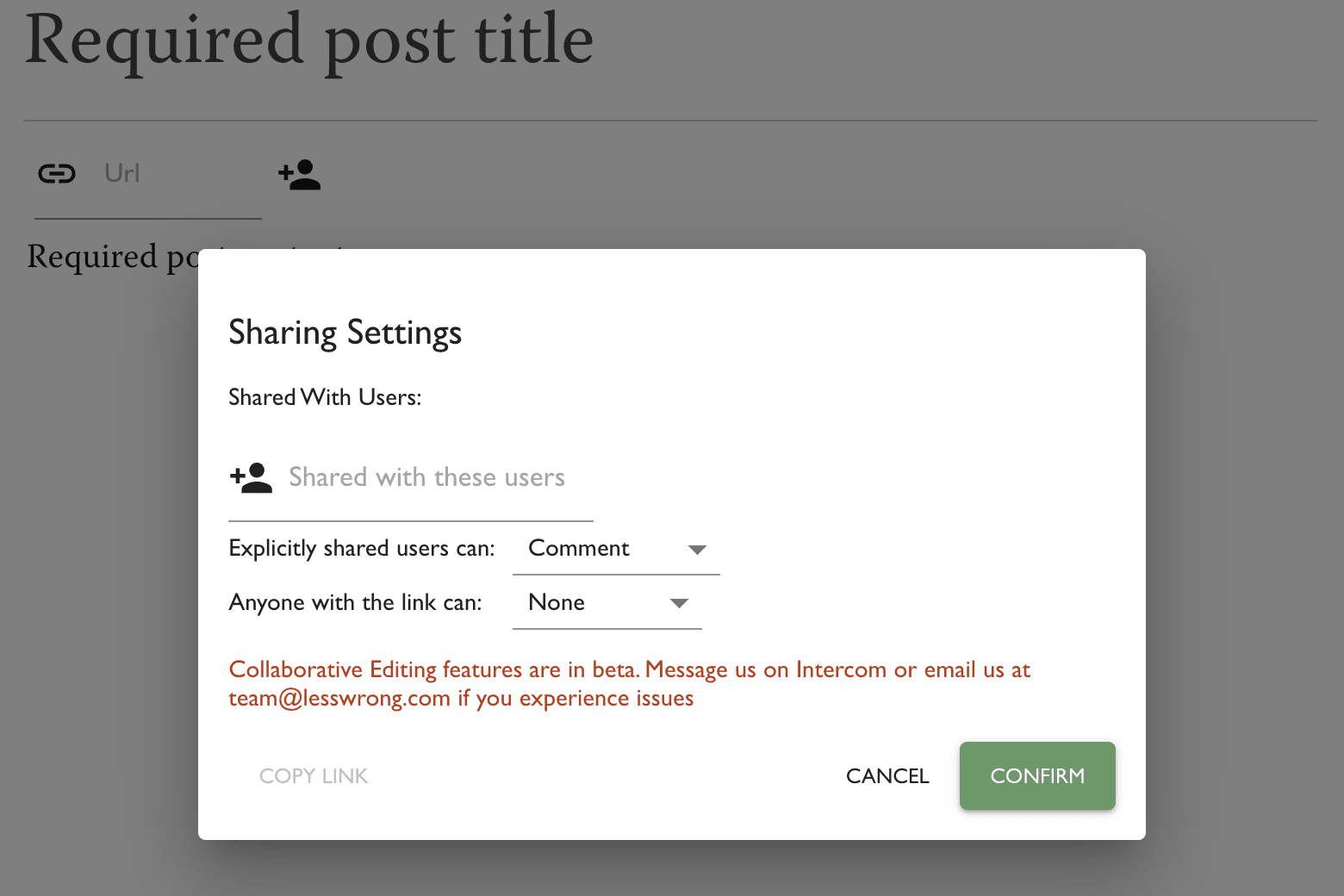

While the feature was available, you needed to be logged in on both sites. To ensure that a post was crossposted after it was published, or to crosspost an already-published post, you followed the authentication flow in the Options menu on the post editor page.
