I agree that people can easily fail to fix alignment problems, and can instead paper over them, even given a long time to iterate. But I'm not really convinced about your analogy with single-hose air conditioners.

Physics:

The air coming out of the exhaust is often quite a bit hotter than the outside air. I've never checked myself, but just googling has many people reporting 130+ degree temperatures coming out of exhaust from single-hose units. I'm not sure how hot this unit's exhaust is in particular, but I'd guess it's significantly hotter than outside air.

If exhaust is 130 and you are trying to cool from 100 to 70 you'd then only be losing 50% efficiency. Most people won't be cooling by 30 degrees so the efficiency losses would be smaller. In practice I think the actual efficiency loss relative to a 2-hose unit is more like 25-30% (see stats on top wirecutter picks below).
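A minimal sketch of the arithmetic behind the 50% figure. The formula is an assumption reconstructed from the numbers in the paragraph above, not something the comment spells out: each unit of air exhausted removes heat proportional to (exhaust temp minus indoor temp), and the replacement air pulled in from outside adds back heat proportional to (outdoor temp minus indoor temp). The function name is hypothetical.

```python
def infiltration_loss_fraction(t_out, t_in, t_exhaust):
    """Fraction of cooling lost to infiltration for a single-hose unit.

    Assumed model: all exhausted air is replaced by outdoor air, and only
    sensible heat (temperature, not humidity) is counted.
    """
    heat_removed = t_exhaust - t_in   # per unit of air pushed out the hose
    heat_returned = t_out - t_in      # per unit of outdoor air pulled back in
    return heat_returned / heat_removed


# Cooling from 100 to 70 with 130-degree exhaust, as in the text.
loss = infiltration_loss_fraction(t_out=100, t_in=70, t_exhaust=130)
print(f"{loss:.0%}")  # 50%
```

Under this model the loss shrinks as the indoor/outdoor gap shrinks, which is the point about most people not cooling by 30 degrees.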

Discourse:

I actually think that this factor (sucking in hot air from the outside) is probably already included in the SACC (seasonally adjusted cooling capacity) and hence CEER reported for this air conditioner. I don't really know anything about air conditioners but it's discussed extensively in the definition of the standards for SACC (e.g. start at page 27 here).

A 2-hose unit will definitely cool more efficiently, but I think for many people who are using portable units it's the right tradeoff with convenience. The wirecutter reviews both types of units together and usually ends up preferring 1-hose units. Infiltration is discussed in the wirecutter article and in other articles advising people on whether to pick a 1-hose or 2-hose portable unit.

Meta:

Obviously the point about air conditioners doesn't matter, but I feel like the general lesson is relevant. It's important to be able to call the world on its bullshit (because I agree there is a lot of it), but that seems like it works better when coupled with discernment about what is and is not bullshit.

It's particularly jarring to notice a huge conflict between a clever argument and a lot of people's reported experiences, and then to have the confidence to not only believe the clever argument but to not even think the conflict is worth acknowledging or exploring.

My overall take on this post and comment (after spending like 1.5 hours reading about AC design and statistics):

Overall I feel like both the OP and this reply say some wrong things. The top Wirecutter recommendation is a dual-hose design. The testing procedure of Wirecutter does not seem to address infiltration in any way, and indeed the whole article does not discuss infiltration as it relates to cooling-efficiency.

Overall efficiency loss from going from dual to single is something like 20-30%, which I do think is much lower than the OP implied, though it also is quite substantial, and indeed most of the top-ranked Amazon listings do not use any of the updated measurements that Paul is talking about, and so consumers do likely end up deceived about that.

The top-rated AC Wentworth links to is really very weak if you take into account those losses, and I would be surprised if it adequately cooled people's homes.

My current model: Wirecutter is doing OK but really not great here (with an actively confused testing procedure), Amazon ratings are indeed performing quite badly, and basically display most of the problems that Wentworth talks about. It's unclear whether AC companies are optimizing for the Amazon or the Wirecutter case here; determining that would require me to see a trend in SACC over time. I expect many, possibly most consumers, are indeed being fooled into thinking their AC is 20-30% more effective than it actually is, because of infiltration issues.

Separately, it seems possible to me that consumers actually prefer a world where an AC doesn't cool their whole home, but creates a temperature gradient across the home. In the case of heating, I strongly prefer stoves and fireplaces that produce local heating (and substantially radiation heating), despite similar infiltration issues, because I like being able to move closer and farther away from the source of the heat to locally adjust temperature preferences, and I often live/work with people who have substantially different temperature preferences to me.

Overall, if I were to buy a portable AC today, I would choose Wirecutter's top pick, which happens to be a two-hose design. If that wasn't the case, I would probably still just go with the single-hose design, because I actually like temperature gradients. If I cared about cooling a whole building (which historically hasn't been the case), I would probably go with a dual-hose design. Reading Amazon reviews and ratings would have probably made my decision-making here marginally worse. Following the Wirecutter recommendation would have been OK but not great. It mostly would have pointed me towards the SACC as a better rating, and I probably would have figured out to buy an AC with a high SACC rating, and not historical BTU rating.

My current guess is that most consumers are probably still deceived by non-SACC BTU ratings, but the situation seems to be getting better due to regulations.

Update: I too have now spent like 1.5 hours reading about AC design and statistics, and I can now give a reasonable guess at exactly where the I-claim-obviously-ridiculous 20-30% number came from. Summary: the SACC/CEER standards use a weighted mix of two test conditions, with 80% of the weight on conditions in which outdoor air is only 3°F/1.6°C hotter than indoor air.

The whole backstory of the DOE's SACC/CEER rating rules is here. Single-hose air conditioners take center stage. The comments on the DOE's rule proposals can basically be summarized as:

- Single-hose AC manufacturers very much did not want infiltration air to be accounted for, and looked for any excuse to ignore it

- Electric companies very much did want infiltration air to be accounted for, and in particular wanted SACC to be measured at peak temperatures

- The DOE did its best to maintain a straight face in front of all this obvious bullshitting, and respond to it with legibly-reasonable arguments and statistics.

This quote in particular stands out:

De’ Longhi [an AC manufacturer] expressed concern that modifying the AHAM PAC-1-2014 method to account for infiltration air would disproportionately impact single-duct portable AC performance and subsequently cause the removal of such products from the market.

So the manufacturers themselves know perfectly well that these products are shit, and won't be viable in the market if infiltration is properly accounted for. However, the DOE responds:

However, as discussed further in section III.C.2, section III.C.3, and III.H of this final rule, the rating conditions and SACC calculation proposed in the November 2015 SNOPR mitigate De’ Longhi’s concerns. DOE recognizes that the impact of infiltration on portable AC performance is test-condition dependent and, thus, more extreme outdoor test conditions (i.e., elevated temperature and humidity) emphasize any infiltration related performance differences. The rating conditions and weighting factors proposed in the November 2015 SNOPR, and adopted in this final rule (see section III.C.2.a and section III.C.3 of this final rule), represent more moderate conditions than those proposed in the February 2015 NOPR. Therefore, the performance impact of infiltration air heat transfer on all portable AC configurations is less extreme. In consideration of the changes in test conditions and performance calculations since the February 2015 NOPR 31 and the test procedure established in this final rule, DOE expects that single-duct portable AC performance is significantly less impacted by infiltration air.

Ok, so how are the test conditions somehow making single-hose air conditioners not look blatantly shitty?

The key piece is that the DOE is using a weighted mix of two test conditions: one at outdoor temperature 95°F/35°C (representing "the hottest 750 hours of the year"), the other at outdoor temperature 83°F/28.3°C (representing some kind of average temperature during times when the AC is used at all). For both cases, the DOE assumes that the indoor temperature (i.e. the air which the single-hose air conditioner is taking in) is at 80°F/26.7°C. So for the average-outdoor-temperature case, they're assuming conditions where there is only a 3°F/1.6°C temperature difference between indoors and outdoors! Obviously infiltration has almost no effect under these conditions... but also do people even bother setting up a portable air conditioner only to bring the temperature from 83°F/28.3°C down to 80°F/26.7°C?

Now for the key question: what weights does the DOE use in the weighted mix of these two conditions? 20% weight on the 95°F/35°C outdoor condition, 80% weight on the 83°F/28.3°C outdoor condition.

Bottom line: the DOE's SACC/CEER rating puts about 80% of its weight on a test condition in which the indoor and outdoor temperatures are only 3°F/1.6°C apart.
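To make the weighting concrete, here is a small sketch that computes the weighted-average indoor/outdoor temperature gap implied by the two test conditions and weights quoted above. The variable names are illustrative; only the temperatures and weights come from the text.

```python
# (weight, outdoor temperature in deg F) for the two DOE test conditions
# as described above; the indoor temperature is 80 F in both.
CONDITIONS = [(0.2, 95.0), (0.8, 83.0)]
T_INDOOR_F = 80.0

# Weighted-average gap between outdoor and indoor air.
weighted_delta_f = sum(w * (t_out - T_INDOOR_F) for w, t_out in CONDITIONS)

print(round(weighted_delta_f, 1))  # 5.4
```

So the rating effectively assumes the unit is working against an average gap of about 5.4°F.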

This document has a good dense summary of the relevant formulas. In particular, Q_infiltration_95 and Q_infiltration_83 on page 74038 are the heats lost to infiltration under the two conditions. Those are incorporated into ACC_95 and ACC_83 respectively, which are then combined with the weights on page 74039.

One more interesting observation: if we assume that the efficiency loss from infiltration is basically-zero for the 83°F/28.3°C condition, then in order to get an overall efficiency loss of 20-30% for one-hose vs two, the efficiency under the 95°F/35°C condition would have to be somewhere between zero and negative. (Which is possible, given the way the test is set up - it would mean that running the AC in an 80°F/26.7°C house when it's 95°F/35°C outside makes the house hotter overall. In other words, the equilibrium temperature of the room with that AC cooling it is above 80°F/26.7°C.) I would guess that the efficiency under the 95°F/35°C condition is somewhat higher than this simple estimate implies (since there will be some losses under the 83°F/28.3°C condition), but I think it is a reasonably-realistic ballpark estimate at the temperatures given.
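The back-of-envelope step above can be sketched as follows. This is a simplification, not the DOE calculation: it treats the overall loss as a plain weighted average of the per-condition losses (20% weight on the 95°F condition, 80% on the 83°F condition) and solves for the loss at 95°F. The function name and defaults are hypothetical.

```python
def implied_loss_at_95(overall_loss, weight_95=0.2, loss_at_83=0.0):
    """Solve overall = w95 * loss95 + w83 * loss83 for loss95."""
    weight_83 = 1.0 - weight_95
    return (overall_loss - weight_83 * loss_at_83) / weight_95


for overall in (0.20, 0.30):
    loss_95 = implied_loss_at_95(overall)
    remaining = 1.0 - loss_95  # fraction of cooling left at 95 F
    print(f"overall {overall:.0%}: loss at 95F {loss_95:.0%}, remaining {remaining:.0%}")
```

With zero loss assumed at 83°F, a 20% overall loss implies a 100% loss at 95°F and a 30% overall loss implies 150%, i.e. remaining efficiency between zero and negative. Allowing some loss at 83°F (the `loss_at_83` argument) pulls the 95°F figure back down.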

I still think the 25-30% estimate in my original post was basically correct. I think the typical SACC adjustment for single-hose air conditioners ends up being 15%, not 25-30%. I agree this adjustment is based on generous assumptions (5.4 degrees of cooling whereas 10 seems like a more reasonable estimate). If you correct for that, you seem to get to more like 25-30%. The Goodhart effect is much smaller than this 25-30%; I still think 10% is plausible.

I admit that in total I’ve spent significantly more than 1.5 hours researching air conditioners :) So I’m planning to check out now. If you want to post something else, you are welcome to have the last word.

SACC for 1-hose AC seems to be 15% lower than similar 2-hose models, not 25-30%:

- This site argues for 2-hose ACs being better than 1-hose ACs and cites SACC being 15% lower.

- The top 2-hose AC on amazon has 14,000 BTU that gets adjusted down to 9500 BTU = 68%. This similarly-sized 1-hose AC is 13,000 BTU and gets adjusted down to 8000 BTU = 61.5%, about 10% lower.

- This site does a comparison of some unspecified pair of ACs and gets 10/11.6, i.e. a 14% reduction.
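The ratios in the list above can be checked with a few lines. The BTU figures are the ones quoted in the bullets; the helper name is illustrative.

```python
def sacc_ratio(nominal_btu, sacc_btu):
    """SACC as a fraction of the nominal (pre-adjustment) BTU rating."""
    return sacc_btu / nominal_btu


two_hose = sacc_ratio(14_000, 9_500)   # top 2-hose AC on Amazon
one_hose = sacc_ratio(13_000, 8_000)   # similarly sized 1-hose AC

# How much lower the 1-hose ratio is, relative to the 2-hose ratio.
relative_gap = 1 - one_hose / two_hose

# The unspecified pair from the third bullet.
third_site_gap = 1 - 10 / 11.6

print(f"2-hose: {two_hose:.1%}, 1-hose: {one_hose:.1%}")
print(f"relative gap: {relative_gap:.1%}, third site: {third_site_gap:.1%}")
```

This gives roughly 68% and 61.5% for the two units, a relative gap a little under 10%, and about 14% for the third comparison.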

I agree the DOE estimate is too generous to 1-hose AC, though I think it’s <2x:

The SACC adjustment assumes 5.4 degrees of cooling on average, just as you say. I’d guess the real average use case, weighted by importance, is closer to 10 degrees of cooling. I’m skeptical the number is >10: e.g. 95 degree heat is quite rare in the US, and if it’s really hot you will be using real AC not a cheap portable AC (you can’t really cool most rooms from 95->80 with these ACs, so those can't really be very common). Overall the DOE methodology seems basically reasonable up to a few degrees of error.

Still looks similar to my initial estimate:

I’d bet that the simple formula I suggested was close to correct. Apparently 85->80 degrees gives you 15% lower efficiency (11% is the output from my formula). 90->80 would be 20% on my formula but may be more like 30% (e.g. if the gap was explained by me overestimating exhaust temp).

So that seems like it's basically still lining up with the 25-30% I suggested initially, and it's for basically the same reasons. The main thing I think was wrong was me saying "see stats" when it was kind of coincidental that the top rated AC you linked was very inefficient in addition to having a single hose (or something, I don't remember what happened).

The Goodhart effect would be significantly smaller than that:

- I think people primarily estimate AC effectiveness by how cool it makes them and the room, not how cool the air coming out of the AC is.

- The DOE thinks (and I’m inclined to believe) that most of the air that’s pulled in is coming through the window and so heats the room with the AC.

- Other rooms in the house will generally be warmer than the room being air conditioned, so infiltration from them would still warm the room (and to the extent it doesn’t, people do still care more about the AC’d room).

If you wouldn't mind one last question before checking out: where did that formula you're using come from?

- The top wirecutter recommendation is roughly 3x as expensive as the Amazon AC being reviewed. The top budget pick is a single-hose model.

- People usually want to cool the room they are spending their time in. Those ACs are marketed to cool a 300 sq ft room, not a whole home. That's what reviewers are clearly doing with the unit.

- I'd guess that in extreme cases (where you care about the room with AC no more than other rooms in the house + rest of house is cool) consumers are overestimating efficiency by ~30%. On average in reality I'd guess they are overestimating value-added by the air conditioner by more like ~10% (since the AC'd room will be cooler and they care less about other rooms).

- I think the OP is misleading if 10% is what's at stake and there are real considerations on the other side.

- I think there is very little chance that the wirecutter reviewers don't understand that infiltration affects heating efficiency. However I agree that your preferences about AC, and the interpretation of their tests, depend on how hot the rest of the building is (and how much you care about keeping it cool). I'm 50-50 on whether someone from the wirecutter would be able to explain that issue if pressed.

- This AC does not report SACC BTU, though many of the top-rated ACs list both (4/6 of the other ones I checked from the "4 stars and above related products"). I agree that some consumers won't see this number.

- The internet will tell you to use a 10,000 BTU portable AC for a 300 sq ft room (in line with the recommendation on Amazon's page) and a 6500 BTU window AC. That is, the "300 sq ft" number and normal internet folklore are mostly taking into account these issues.

- The AC in question does report CEER which I still think includes this issue. It has a quite mediocre CEER of 6.6. It describes this as "super efficient" which is obviously false.

- Note that non-SACC BTU ratings are mostly only a problem when looking at comparisons of single-hose to double-hose AC (since e.g. googling portable AC sizing or looking at recommended sq footage takes this issue into account), and so what mostly matters is whether the Amazon page for a double-hose AC makes this argument in a way that lets it win comparison-shopping customers.

What an argument about air conditioners :)

Regulation does not fix the problem, just moves it from the consumer to the regulator. A regulator will only regulate a problem which is obvious to the regulator. A regulator may sometimes have more expertise than a layperson, but even that requires that the politicians ultimately appointing people can distinguish real from fake expertise, which is hard in general.

It seems like the DOE decided to adopt energy-efficiency standards that take into account infiltration. They could easily have made a different decision (e.g. because of pressure from portable AC manufacturers, or because it's legitimately unclear how to define the standard, or because it makes measurement harder), but it wouldn't be because the issue wasn't obvious (I think it's not even anywhere close to the "failure because the issue wasn't obvious" regime).

Overall I agree with the bottom line that regulation is unlikely to help that much with alignment. But I don't think this seems like the right model of why that is or how you could fix it.

Waiting longer does not fix the problem. All those people who did not notice their air conditioner pulling hot air into the house will not start noticing if we just wait a few years. Problems do not automatically become obvious over time.

I think our understanding of these issues has always been much better than the low baseline you imagine in the OP, but I also think discourse has clearly improved significantly over time. So I'm also not sure that this analogy really says what you want it to say.

After this comment there was a long thread about AC efficiency.

Summarizing:

- I said: "In practice I think the actual efficiency loss relative to a 2-hose unit is more like 25-30%" (For cooling from 85 to 70.)

- John said that this was ridiculous.

- After the dust settled, our best estimate on paper is 40% rather than 25-30%.

The reasons for the adjustments were roughly:

- [x2] I estimated exhaust temperature at 130 degrees, but it's more like 100 degrees if the indoor air is 70.

- [x1/2] I thought that all depressurization was compensated for by increased infiltration. But probably half of depressurization is offset by reduced exfiltration instead (see here)

- [x3/2] I only considered sensible heat. But actually humidity is a huge deal, because the exhaust is heated but not humidified (see here)
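As a quick check that the three adjustment factors listed above take the original 25-30% to roughly 40%, here is the multiplication written out. The factors and the starting range come from the list; treating them as simple multipliers on the loss estimate is the reading the bracketed labels suggest.

```python
from math import prod

# Multiplicative corrections from the list above.
ADJUSTMENTS = {
    "exhaust temp nearer 100 than 130": 2.0,
    "half of depressurization offset by reduced exfiltration": 0.5,
    "humidity (latent heat) in addition to sensible heat": 1.5,
}

combined = prod(ADJUSTMENTS.values())            # 2 * 1/2 * 3/2
low, high = 0.25 * combined, 0.30 * combined     # applied to the 25-30% estimate

print(f"combined factor: {combined}")
print(f"adjusted range: {low:.1%} to {high:.1%}")
```

The combined factor is 1.5, which moves 25-30% to about 37.5-45%, consistent with the 40% figure.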

John also attempted to measure the loss empirically, but I'd summarize as "too hard to measure":

- With 1-hose the indoor temp was 68 vs 88 outside, while with 2-hose the indoor temp was 66 vs 88 outside (using the same amount of energy).

- We both agree that 10% is an underestimate for the efficiency loss (e.g. due to room insulation, other cooling in the building, and the improvised 2-hose setup).

- I don't think we have a plausible way to extract a corrected estimate.
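For reference, the roughly 10% figure falls out of the measured temperatures if one assumes, as a simplification, that cooling delivered is proportional to the indoor/outdoor temperature gap held at equal energy use. That proportionality is an assumption for illustration, not something established in the thread.

```python
T_OUTSIDE = 88
T_INDOOR_ONE_HOSE = 68
T_INDOOR_TWO_HOSE = 66

delta_one = T_OUTSIDE - T_INDOOR_ONE_HOSE   # 20 degrees
delta_two = T_OUTSIDE - T_INDOOR_TWO_HOSE   # 22 degrees

# Shortfall of the 1-hose setup relative to the 2-hose setup.
measured_loss = 1 - delta_one / delta_two

print(f"{measured_loss:.1%}")  # 9.1%
```

As the bullets note, this number is expected to understate the real loss, so it is better read as a lower bound than as an estimate.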

A 2-hose unit will definitely cool more efficiently, but I think for many people who are using portable units it's the right tradeoff with convenience. The wirecutter reviews both types of units together and usually ends up preferring 1-hose units.

It is important to note that the current top wirecutter pick is a 2-hose unit, though one that combined the two hoses into one big hose. I guess maybe that is recent, but it does seem important to acknowledge here (and it wouldn't surprise me that much if Wirecutter went through reasoning pretty similar to the one in this post, and then updated towards the two-hose unit because of concerns about infiltration and looking at more comprehensive metrics like SACC).

Here is the wirecutter discussion of the distinction for reference:

Starting in 2019, we began comparing dual- and single-hose models according to the same criteria, and we didn’t dismiss any models based on their hose count. Our research, however, ultimately steered us toward single-hose portable models—in part because so many newer models use this design. In fact, we found no compelling new double-hose models from major manufacturers in 2019 or 2020 (although a few new ones cropped up in 2021, including our new top pick). Owner reviews indicate that most people prefer single-hose models, too, since they’re easier to set up and don’t look quite as much like a giant octopus trash sculpture. Although our testing has shown that dual-hose models tend to outperform some single-hose units in extremely hot or muggy weather, the difference is usually minimal, and we don’t think it outweighs the convenience of a single hose.

The one major exception, however, is if you plan on setting up your portable AC in a room with a furnace or hot water heater or anything else that uses combustion. When a single-hose AC model forces air out through its exhaust hose, it can create negative pressure in the room. This produces a slight vacuum effect, which pulls in “infiltration air” from anywhere it can in order to equalize the pressure. In the presence of a gas-powered device such as a furnace, that negative pressure creates a backdraft or downdraft, which can cause the machine to malfunction—or worse, fill the room with gas fumes and carbon monoxide. We don’t think that most people plan to use their portable AC in such a room, but if your home is set up in such a way that you’re concerned about ventilation, skip the rest of our recommendations here and go straight for the Midea Duo MAP12S1TBL or another dual-hose model like the Whynter Elite ARC-122DS or Whynter Elite ARC-122DHP.

On the physics: to be clear, I'm not saying the air conditioner does not work at all. It does make the room cooler than it started, at equilibrium.

I also am not surprised (in this particular example) to hear that various expert sources already account for the inefficiency in their evaluations; it is a problem which should be very obvious to experts. Of course that doesn't apply so well to e.g. the example of medical research replication failures. The air conditioner example is not meant to be an example of something which is really hard to notice for humanity as a whole; it's meant to be an example of something which is too hard for a typical consumer to notice, and we should extrapolate from there to the existence of things which people with more expertise will also not notice (e.g. the medical research example). Also, it's a case-in-point that experts noticing a problem with some product is not enough to remove the economic incentive to produce the product.

It's particularly jarring to notice a huge conflict between a clever argument and a lot of people's reported experiences, and then to have the confidence to not only believe the clever argument but to not even think the conflict is worth acknowledging or exploring.

When the argument specifically includes reasons to expect people to not notice the problem, it seems obviously correct to discount reported experiences. Of course there are still ways to gain evidence from reported experience - e.g. if someone specifically said "this unit cooled even the far corners of the house", then that would partially falsify our theory for why people will overlook the one-hose problem. But we should not blindly trust reports when we have reasons to expect those reports to overlook problems.

In this particular case, I indeed do not think the conflict is worth the cost of exploring - it seems glaringly obvious that people are buying a bad product because they are unable to recognize the ways in which it is bad. Positive reports do not contradict this; there is not a conflict here. The model already predicts that there will be positive reports - after all, the air conditioner is very convenient and pumps lots of cool air out the front in very obvious ways.

In this particular case, I indeed do not think the conflict is worth the cost of exploring - it seems glaringly obvious that people are buying a bad product because they are unable to recognize the ways in which it is bad.

The wirecutter recommendation for budget portable ACs is a single-hose model. Until very recently their overall recommendation was also a single-hose model.

The wirecutter recommendations (and other pages discussing this tradeoff) are based on a combination of "how cold does it make the room empirically?" and quantitative estimates of cooling that take into account infiltration. This issue is discussed extensively, with quantitative detail, by people who quite often end up recommending 1-hose designs for small rooms (like the one this AC is advertised for).

One AC unit tested by the wirecutter is convertible between 2-hose and 1-hose. They write:

The best thing we took away from our tests was the chance at a direct comparison between a single-hose design and a dual-hose design that were otherwise identical, and our experience confirmed our suspicions that dual-hose portable ACs are slightly more effective than single-hose models but not effective enough to make a real difference

The 2-hose model they recommend probably wins in part because design improvements lower the complexity of the 2-hose setup:

Unlike the single-hose portables we typically recommend, the Duo has a unique “hose-in-hose” setup where the exhaust and intake are split into two separate conduits contained within a single larger tube, making it even more efficient.

(Amusingly, I think this means that the only 2-hose model recommended by the wirecutter also looks like it has just 1 hose in pictures.)

I think it's correct that consumers probably overweight setup simplicity relative to efficiency. But I think their subjective sense of "how cold does this make the room" is roughly accurate and your argument doesn't undermine that as much as you suggest (because infiltration affects the room as well as the house, and on top of that they care most about the room), and the quoted numbers for AC efficacy also take this consideration into account.

ETA: I also think it's possible that consumers have historically underestimated the importance of infiltration (especially 5+ years ago when discussion was less good, when people may have leaned more on "what it says on the tin" vs recommendations, and when legally-mandated efficiency numbers would not have included infiltration) and this made it harder for two-hose designs to compete. On this story slow institutional progress is gradually fixing this problem, and two-hose designs will be clearly better once enough of them are made (rather than right now where they still aren't better at small room / low price point). But the total upside will be like 5-10% efficiency, and it's kind of small potatoes that can't even easily be pinned on individual irrationality given that in fact the best existing AC units for these conditions do seem to have one hose right now.

The best thing we took away from our tests was the chance at a direct comparison between a single-hose design and a dual-hose design that were otherwise identical, and our experience confirmed our suspicions that dual-hose portable ACs are slightly more effective than single-hose models but not effective enough to make a real difference

After having looked into this quite a bit, it does really seem like the Wirecutter testing process had no ability to notice infiltration issues, so it seems like the Wirecutter crew themselves is kind of confused here?

The... Wirecutter article also does not seem to discuss the issue of infiltration of hot air in any reasonable way. Instead it just says that:

This produces a slight vacuum effect, which pulls in “infiltration air” from anywhere it can in order to equalize the pressure. In the presence of a gas-powered device such as a furnace, that negative pressure creates a backdraft or downdraft, which can cause the machine to malfunction—or worse, fill the room with gas fumes and carbon monoxide. We don’t think that most people plan to use their portable AC in such a room, but if your home is set up in such a way that you’re concerned about ventilation, skip the rest of our recommendations

Which... seems to misunderstand the actual problem of infiltration as it relates to heating efficiency? This is the only mention of the word infiltration in the whole article, and I can't find any other section that discusses infiltration problems in other words.

Indeed, the article says directly:

Although our testing has shown that dual-hose models tend to outperform some single-hose units in extremely hot or muggy weather, the difference is usually minimal, and we don’t think it outweighs the convenience of a single hose.

Based on a testing methodology that is very unlikely to be able to measure the primary issue with single-hose vs. double-hose designs. This seems like Wirecutter is directly falling prey to the exact problem the OP is describing.

I feel a bit confused how the official SACC measures account for infiltration, but assuming they are doing it properly, the overall difference between single-hose and dual-hose designs does only seem to be something like 20%. I do expect the current Wirecutter tests to fail to measure that difference, but am also not sure that 20% is really worth the loss of convenience from having a single hose.

They measure the temperature in the room, which captures the effect of negative pressure pulling in hot air from the rest of the building. It underestimates the costs if the rest of the building is significantly cooler than the outside (I'd guess by the ballpark of 20-30% in the extreme case where you care equally about all spaces in the building, the rest of your building is kept at the same temp as the room you are cooling, and a negligible fraction of air exchange with the outside is via the room you are cooling).

Which... seems to misunderstand the actual problem of infiltration as it relates to heating efficiency? This is the only mention of the word infiltration in the whole article, and I can't find any other section that discusses infiltration problems in other words.

I think that paragraph is discussing a second reason that infiltration is bad.

I think that paragraph is discussing a second reason that infiltration is bad.

Yeah, sorry, I didn't mean to imply the section is saying something totally wrong. The section just makes it sound like that is the only concern with infiltration, which seems wrong, and my current model of the author of the post is that they weren't actually thinking through heat-related infiltration issues (though it's hard to say from just this one paragraph, of course).

The best thing we took away from our tests was the chance at a direct comparison between a single-hose design and a dual-hose design that were otherwise identical, and our experience confirmed our suspicions that dual-hose portable ACs are slightly more effective than single-hose models but not effective enough to make a real difference

I roll to disbelieve. I think it is much more likely that something is wrong with their test setup than that the difference between one-hose and two-hose is negligible.

Just on priors, the most obvious problem is that they're testing somewhere which isn't hot outside the room - either because they're inside a larger air-conditioned building, or because it's not hot outdoors. Can we check that?

Well, they apparently tested it in April 2022, i.e. nowish, which is indeed not hot most places in the US, but can we narrow down the location more? The photo is by Michael Hession, who apparently operates near Boston. Daily high temps currently in the 50's to 60's (Fahrenheit). So yeah, definitely not hot there.

Now, if they're measuring temperature delta compared to the outdoors, it could still be a valid test. On the other hand, if it's only in the 50's to 60's outside, I very much doubt that they're trying to really get a big temperature delta from that air conditioner - they'd have to get the room down below freezing in order to get the same temperature delta as a 70 degree room on a 100 degree day.

If they're only trying to get a tiny temperature delta, then it really doesn't matter how efficient the unit is. For someone trying to keep a room at 70 on a 100 degree day, it's going to matter a lot more.

So basically, I am not buying this test setup. It does not look like it is actually representative of real usage, and it looks nonrepresentative in basically the ways we'd expect from a test that found little difference between one and two hoses.

Generalizable lesson/heuristic: the supposed "experts" are also not even remotely trustworthy.

(Also, I expect it to seem like I am refusing to update in the face of any evidence, so I'd like to highlight that this model correctly predicted that the tests were run someplace where it was not hot outside. Had that evidence come out different, I'd be much more convinced right now that one hose vs two doesn't really matter.)

(Also, I expect it to seem like I am refusing to update in the face of any evidence, so I'd like to highlight that this model correctly predicted that the tests were run someplace where it was not hot outside. Had that evidence come out different, I'd be much more convinced right now that one hose vs two doesn't really matter.)

From how we tested:

Over the course of a sweltering summer week in Boston, we set up our five finalists in a roughly 250-square-foot space, taking notes and rating each model on the basic setup process, performance, portability, accessories, and overall user experience.

ETA: it's not clear that's the same testing setup used in the other tests they described. But they do talk about how the 1-vs-2 convertible unit "struggled to make the room any cooler than 70 degrees" which sounds like it was probably reasonably hot.

Alright, I am more convinced than I was about the temperature issue, but the test setup still sounds pretty bad.

First, Boston does not usually get all that sweltering. I grew up in Connecticut (close to Boston, with similar weather), where summer days usually peaked in the low 80's. Even if they waited for a really hot week, it was probably in the 90's. A quick Google search confirms this: typical July daily high temp is 82, and Google says "Overall during July, you should expect about 4-6 days to reach or exceed 90 F (32C) while the all-time record high for Boston was 103 F (39.4C)".

It's still a way better test than April (so I'm updating from that), but probably well short of keeping a room at 70 on a 100 degree day. I'm guessing they only had about half that temperature delta.

Second, their actual test procedure (thank you for finding that, BTW):

Over the course of a sweltering summer week in Boston, we set up our five finalists in a roughly 250-square-foot space, taking notes and rating each model on the basic setup process, performance, portability, accessories, and overall user experience.

The makers of portable air conditioners are required to list their performance and efficiency statistics, and our research and our previous testing have proven these numbers to be accurate. By prescreening for these stats, we got the impression that every model we tested would cool a room capably. We confirmed that they did by taking measurements with two Lascar temperature and humidity data loggers—we placed one 3 feet away, directly in front of the unit, and placed the other one 6 feet away on a diagonal. With each AC set to its lowest setting (between 60 and 64 degrees Fahrenheit, depending on the unit) and the highest fan/compressor setting, we measured the temperature and humidity in the room every 15 minutes for three hours to see how well each unit dispersed the coolness and dehumidification process across the space.

Three feet and six feet away? That sure does sound like they're measuring the temperature right near the unit, rather than the other side of the room where we'd expect infiltration to matter. I had previously assumed they were at least measuring the other side of the room (because they mention for the two-hose recommendation "In our tests, it was also remarkably effective at distributing the cool air, never leaving more than a 1-degree temperature difference across the room"), but apparently "across the room" actually meant "6 feet away" based on this later quote:

In our tests, it [a two-hose air conditioner] produced some of the most even and consistent cooling across the room, never registering more than a 1-degree difference between our monitors positioned at 3 feet directly in front of the AC and 6 feet away on a diagonal.

... which sure does sound more like what we'd expect.

So I'm updating away from "it was just not hot outside" - probably a minor issue, but not a major one. That said, it sure does sound like they were not measuring temperature across the room, and even just between 3 and 6 feet away the two-hose model apparently had noticeably less drop-off in effectiveness.

Boston summers are hotter than the average summers in the US, and I'd guess are well above the average use case for an AC in the US. I agree having two hoses is more important the larger the temperature difference, and by the time you are cooling from 100 to 70 the difference is fairly large (though there is basically nowhere in the US where that difference is close to typical).

I'd be fine with a summary of "For users who care about temp in the whole house rather than just the room with the AC, one-hose units are maybe 20% less efficient than they feel. Because this factor is harder to measure than price or the convenience of setting up a one-hose unit, consumers don't give it the attention it deserves. As a result, manufacturers don't make as many cheap two-hose units as they should."

Does anyone in-thread (or reading along) have any experiments they'd be interested in me running with this air conditioner? It doesn't seem at all hard for me to do some science and get empirical data, with a different setup to Wirecutter, so let me know.

Added: From a skim of the thread, it seems to me the experiment that would resolve matters is testing in a large room with temperature sensors more like 15 feet away in a city or country that's very hot outside, and to compare this with (say) Wirecutter's top pick with two-hoses. Confirm?

... I actually already started a post titled "Preregistration: Air Conditioner Test (for AI Alignment!)". My plan was to use the one-hose AC I bought a few years ago during that heat wave, rig up a cardboard "second hose" for it, and try it out in my apartment both with and without the second hose next time we have a decently-hot day. Maybe we can have an air conditioner test party.

Predictions: the claim which I most do not believe right now is that going from one hose to two hose with the same air conditioner makes only a 20%-30% difference. The main metric I'm interested in is equilibrium difference between average room temp and outdoor temp (because that was the main metric relevant when I was using that AC during the heat wave). I'm at about 80% chance that the difference will be over 50%.

(Back-of-the-envelope math a few years ago said it should be roughly a factor-of-two difference, and my median expectation is close to that.)

I also expect (though less strongly) that, assuming the room's doors and windows are closed, corners of the room opposite the AC in single-hose mode will be closer to outdoor temp than to the temp 3 ft away from the AC, and that this will not be the case in two-hose mode. I'd put about 60% on that prediction.

These predictions are both conditional on the general plan I had, and might change based on details of somebody else's test plan. In particular, some factors I expect are relevant:

- The day being hot enough and the room large enough that the AC runs continuously (as opposed to getting the room down to target temperature easily, at which point it will shut off until the temperature goes back up).

- The test room does not open into an indoor room at lower temperature (I had planned to open the outside door and windows in the rest of the apartment).

- Test room generally not in direct sun, including outside of walls/ceiling. If it is in full sun, then I'd strengthen my probability for the second prediction.

Also, in case people want to bet, I should warn that I did use this AC during a heat wave a few years ago (with just the one hose), so e.g. I have seen firsthand how it tends to only cool the space right in front of it. On the other hand, it could turn out that was due to factors specific to the apartment I was in back then - for instance, the roof in that apartment was uninsulated and in full sun, so a lot of heat came off the ceiling.

I would have thought that the efficiency lost is roughly (outside temp - inside temp) / (exhaust temp - inside temp). And my guess was that exhaust temp is ~130.

I think the main way the effect could be as big as you are saying is if that model is wrong or if the exhaust is a lot cooler than I think. Those both seem plausible; I don't understand how AC works, so don't trust that calculation too much. I'm curious what your BOTEC was / if you think 130 is too high an estimate for the exhaust temp?

If that calculation is right, and exhaust is at 130, outside is 100, and house is 70, you'd have 50% loss. But you can't get 50% in your setup this way, since your 2-hose AC definitely isn't going to get the temp below 65 or so. Maybe most plausible 50% scenario would be something like 115 exhaust, 100 outside, 85 inside with single-hose, 70 inside with double-hose.

I doubt you'll see effects that big. I also expect the improvised double hose will have big efficiency losses. I think that 20% is probably the right ballpark (e.g. 130/95/85/82). If it's >50% I think my story above is called into question. (Though note that the efficiency lost from one hose is significantly larger than the bottom line "how much does people's intuitive sense of single-hose AC quality overstate the real efficacy?")

Your AC could also be unusual. My guess is that it just wasn't close to being able to cool your old apartment and that single vs double-hoses was a relatively small part of that, in which case we'd still see small efficiency wins in this experiment. But it's conceivable that it is unreasonably bad in part because it has an unreasonably low exhaust temp, in which case we might see an unreasonably large benefit from a second hose (though I'd discard that concern if it either had similarly good Amazon reviews or a reasonable quoted SACC).
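As a quick check on the arithmetic, here is a minimal sketch of the rough loss model from this comment, (outside temp - inside temp) / (exhaust temp - inside temp). The function name is made up for illustration, and the model itself is only the back-of-the-envelope guess stated above, not a verified description of how these units behave.

```python
def single_hose_loss(exhaust_f, outside_f, inside_f):
    """Fraction of cooling lost to infiltration under the rough model above.

    Assumption (from the comment, not from any AC spec): every unit of air
    blown out at exhaust_f is replaced by outdoor air at outside_f, and the
    heat involved scales linearly with temperature difference from the room.
    All temperatures are in degrees Fahrenheit.
    """
    return (outside_f - inside_f) / (exhaust_f - inside_f)


# Scenarios quoted in the comment: exhaust / outside / inside.
print(single_hose_loss(130, 100, 70))            # 0.5
print(single_hose_loss(115, 100, 85))            # 0.5
print(round(single_hose_loss(130, 95, 85), 2))   # 0.22
```

Under this model the 130/95/85 case comes out at roughly 22%, which matches the "20% is probably the right ballpark" figure.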

I'm curious what your BOTEC was / if you think 130 is too high an estimate for the exhaust temp?

I don't remember what calculation I did then, but here's one with the same result. Model the single-hose air conditioner as removing air from the room, and replacing with a mix of air at two temperatures: T_C (the temperature of cold air coming from the air conditioner), and T_H (the temperature outdoors). If we assume that T_C is constant and that the cold and hot air are introduced in roughly 1:1 proportions (i.e. the flow rate from the exhaust is roughly equal to the flow rate from the cooling outlet), then we should end up with an equilibrium average temperature of (T_C + T_H) / 2. If we model the switch to two-hose as just turning off the stream of hot air, then the equilibrium average temperature should drop to T_C.

Some notes on this:

- It's talking about equilibrium temperature rather than power efficiency, because equilibrium temperature on a hot day was mostly what I cared about when using the air conditioner.

- The assumption of roughly-equal flow rates seems to be at least the right order of magnitude based on seeing this air conditioner in operation, though I haven't measured carefully. If anything, it seemed like the exhaust had higher throughput.

- The assumption of constant T_C is probably the most suspect part.
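A minimal sketch of this mixing model, to show where the factor of two comes from. The function names and the example temperatures (60 F cold outlet, 100 F outdoors) are assumptions for illustration only; the model also inherits the caveats in the notes above, in particular that the cold-outlet temperature is treated as constant.

```python
def equilibrium_single_hose(t_cold, t_outdoor, hot_fraction=0.5):
    """Average room temperature when replacement air is a mix of cold
    outlet air and infiltrating outdoor air.

    hot_fraction=0.5 encodes the roughly 1:1 flow-rate assumption.
    """
    return (1 - hot_fraction) * t_cold + hot_fraction * t_outdoor


def equilibrium_two_hose(t_cold):
    """With the hot infiltration stream switched off, the room tends
    toward the cold outlet temperature."""
    return t_cold


# Illustrative values only (degrees Fahrenheit), not measurements.
t_cold, t_outdoor = 60.0, 100.0

single = equilibrium_single_hose(t_cold, t_outdoor)
two = equilibrium_two_hose(t_cold)

# Ratio of (outdoor - room) temperature differences, two-hose vs one-hose.
ratio = (t_outdoor - two) / (t_outdoor - single)
print(single, two, ratio)  # 80.0 60.0 2.0
```

With a 1:1 mix the ratio is 2 whatever temperatures are plugged in, since the single-hose difference is always half of (outdoor - cold).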

Ok, I think that ~50% estimate is probably wrong. Happy to bet about outcome (though I think someone with working knowledge of air conditioners will also be able to confirm). I'd bet that efficiency and Delta t will be linearly related and will both be reduced by a factor of about (exhaust - outdoor) / (exhaust - indoor) which will be much more than 50%.

... and will both be reduced by a factor of about (exhaust - outdoor) / (exhaust - indoor) which will be much more than 50%.

I assume you mean much less than 50%, i.e. (T_outside - T_inside) averaged over the room will be less than 50% greater with two hoses than with one?

I'm open to such a bet in principle, pending operational details. $1k at even odds?

Operationally, I'm picturing the general plan I sketched four comments upthread. (In particular note the three bulleted conditions starting with "The day being hot enough and the room large enough that the AC runs continuously..."; I'd consider it a null result if one of those conditions fails.) LMK if other conditions should be included.

Also, you're welcome to come to the Air Conditioner Testing Party (on some hot day TBD). There's a pool at the apartment complex, could swim a bit while the room equilibrates.

Also, like, Berkeley heat waves may just be significantly different from, like, Reno heat waves. My current read is that part of the issue here is that a lot of places don't actually get that hot, so having less robustly good air conditioners is fine.

I bought my single-hose AC for the 2019 heat wave in Mountain View (which was presumably basically similar to Berkeley).

When I was in Vegas, summer was just three months of permanent extreme heat during the day; one does not stay somewhere without built-in AC in Vegas.

I think labeling requirements are based on the expectation of cooling from 95 to 80 (and I expect typical use cases for portable AC are more like that). Actually hot places will usually have central air or window units.

Regarding the back-and-forth on air conditioners, I tried Google searching to find a precedent for this sort of analysis; the first Google result for "air conditioner single vs. dual hose" was this blog post, which acknowledges the inefficiency johnswentworth points out, overall recommends dual-hose air conditioners, but still recommends single-hose air conditioners under some conditions, and claims the efficiency difference is only about 12%.

Highlights:

In general, a single-hose portable air conditioner is best suited for smaller rooms. The reason being is because if the area you want to cool is on the larger side, the unit will have to work much harder to cool the space.

So how does it work? The single-hose air conditioner yanks warm air and moisture from the room and expels it outside through the exhaust. A negative pressure is created when the air is pushed out of the room, the air needs to be replaced. In turn, any opening in the house like doors, windows, and cracks will draw outside hot air into the room to replace the missing air. The air is cooled by the unit and ejected into the room.

...

Additionally, the single-hose versions are usually less expensive than their dual-hose counterparts, so if you are price sensitive, this should be considered. However, the design is much simpler and the bigger the room gets, the less efficient the device will be.

...

In general, dual-hose portable air conditioners are much more effective at cooling larger spaces than the single-hose variants. For starters, dual-hose versions operate more quickly as it has a more efficient air exchange process.

This portable air conditioning unit has two hoses, one functions as an exhaust hose and the other as an intake hose that will draw outside hot air. The air is cooled and expelled into the area. This process heats the machine, to cool it down the intake hose sucks outside hot air to cool the compressor and condenser units. The exhaust hose discard warmed air outside of the house.

The only drawback is that these systems are usually more expensive, and due to having two hoses instead of one, they are slightly less portable and more difficult to set up, yet most people tend to agree the investment in the extra hose is definitely worth the extra cost.

One thing to bear in mind is that the dual hose conditioners tend to be louder than single hoses. Once again, this depends on the model you purchase and its specifications, but it’s definitely worth mulling over if you need to keep the noise down in your area.

...

All portable air conditioner’s energy efficiency is measured using an EER score. The EER rating is the ratio between the useful cooling effect (measured in BTU) to electrical power (in W). It’s for this reason that it is hard to give a generalized answer to this question, but typically, portable air conditioners are less efficient than permanent window units due to their size.

...

DESCRIPTION | SINGLE-HOSE | DUAL-HOSE
--- | --- | ---
Price | Starts at $319.00 | Starts at $449.00
... | ... | ...
Energy Efficient Ratio (EER) | 10 | 11.2
Power Consumption Rate | about $1 a day | Over $1 a day

EER does not account for heat infiltration issues, so this seems confused. CEER does, and that does suggest something in the 20% range, but I am pretty sure you can't use EER to compare a single-hose and a dual-hose system.

I assumed EER did account for that based on:

All portable air conditioner’s energy efficiency is measured using an EER score. The EER rating is the ratio between the useful cooling effect (measured in BTU) to electrical power (in W). It’s for this reason that it is hard to give a generalized answer to this question, but typically, portable air conditioners are less efficient than permanent window units due to their size.

This article explains the difference: https://www.consumeranalysis.com/guides/portable-ac/best-portable-air-conditioner/

For example, a 14,000 BTU model that draws 1,400 watts of power on maximum settings would have an EER of 10.0 as 14,000/1,400 = 10.0.

A 14,000 BTU unit that draws 1200 watts of power would have an EER of 11.67 as 14,000/1,200 = 11.67.

Taken at face value, this looks like a good and proper metric to use for energy efficiency. The lower the power draw (watts) compared to the cooling capacity (BTUs/hr), the higher the EER. And the higher the EER, the better the energy efficiency.

Thus, if we were to look at the EER of the two example units above we could easily say that the second has better energy efficiency because it has a higher EER – 11.67 compared to 10.0.

However, taking into account what you’ve learned so far about the old method used to determine cooling capacity (standard BTUs) vs the new method used to do so (SACC), you should be able to spot one major problem with EER. That’s right. It uses standard BTU’s – yes, the old BTU metric – in its equation.

The Department of Energy also recognized this issue with EER and acted accordingly by instituting a new metric by which to determine a portable AC unit’s energy efficiency.

That metric is called CEER – Combined Energy Efficiency Ratio.

Unfortunately, CEER is a lot more complicated than EER. The new energy efficiency ratio could have simply involved taking SACC and dividing it by maximum power draw on cooling mode in watts. But the DOE decided that the equation needed a little bit more nuance than that. Let’s take a look at the end result:

EER measures performance in BTUs, which are simply measuring how much work the AC performs, without taking into account any backflow of cold air back into the AC, or infiltration issues.
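For reference, the EER arithmetic in the quoted article is just a ratio, sketched below. The function name is hypothetical, and this deliberately does not attempt CEER or SACC, whose definitions are more involved and are not reproduced in this thread.

```python
def eer(cooling_btu_per_hr, power_watts):
    """Energy Efficiency Ratio: rated cooling capacity divided by power draw.

    Uses the rated (old-style) BTU figure, so it carries no information
    about infiltration losses.
    """
    return cooling_btu_per_hr / power_watts


# The two example units from the quoted article.
print(eer(14_000, 1_400))             # 10.0
print(round(eer(14_000, 1_200), 2))   # 11.67
```

Because the numerator is the rated BTU figure, a single-hose and a dual-hose unit with the same rating and power draw get the same EER, which is why the comparison table above cannot settle the infiltration question.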

The nonobvious problems are the whole reason why AI alignment is hard in the first place.

I disagree with the implication that there’s nothing to worry about on the “obvious problems” side.

An out-of-control AGI self-reproducing around the internet, causing chaos and blackouts etc., is an “obvious problem”. I still worry about it.

After all, consider this: an out-of-control virus self-reproducing around the human population, causing death and disability etc., is also an “obvious problem”. We already have this problem; we’ve had this problem for millennia! And yet, we haven’t solved it!

(It’s even worse than that—it’s an obvious problem with obvious mitigations, e.g. end gain-of-function research, and we’re not even doing that.)

There is an important difference here between "obvious in advance" and "obvious in hindsight", but your basic point is fair, and the virus example is a good one. Humanity's current state is indeed so spectacularly incompetent that even the obvious problems might not be solved, depending on how things go.

I would say “Humanity's current state is so spectacularly incompetent that even the obvious problems with obvious solutions might not be solved”.

If humanity were not spectacularly incompetent, then maybe we wouldn't have to worry about the obvious problems with obvious solutions. But we would still need to worry about the obvious problems with extremely difficult and non-obvious solutions.

I go to Amazon, search for “air conditioner”, and sort by average customer rating. There’s a couple pages of evaporative coolers (not what I’m looking for), one used window unit (?), and then this:

Average rating: 4.7 out of 5 stars.

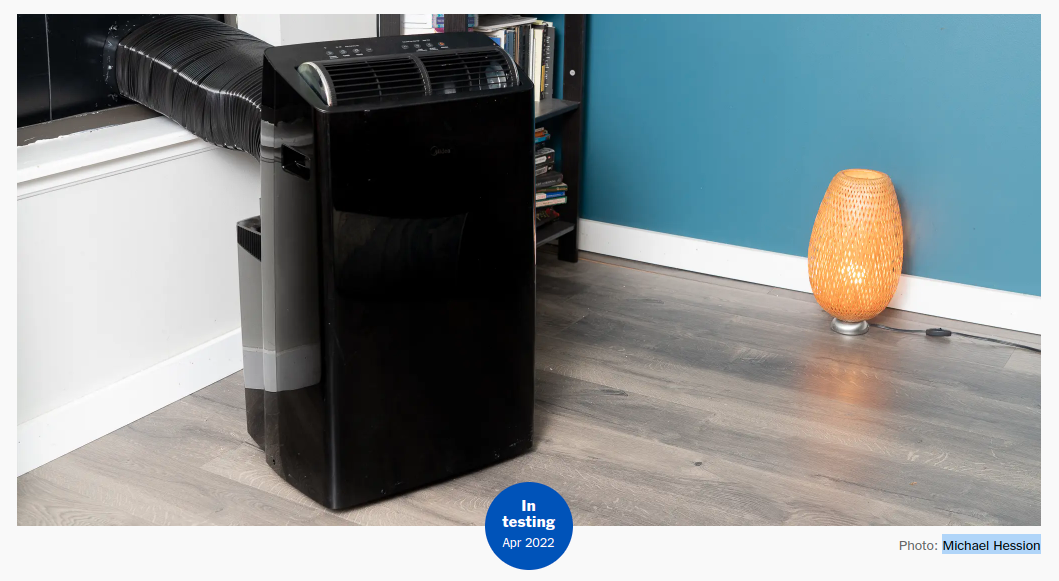

However, this air conditioner has a major problem. Take a look at this picture:

Key thing to notice: there is one hose going to the window. Only one.

Why is that significant?

Here’s how this air conditioner works. It sucks in some air from the room. It splits that air into two streams, and pumps heat from one stream to the other - making some air hotter, and some air cooler. The cool air, it blows back into the room. The hot air, it blows out the window.

See the problem yet?

Air is blowing out the window. In order for the room to not end up a vacuum, air has to come back into the room from outside. In practice, houses are very not airtight (we don’t want to suffocate), so air from outside will be pulled in through lots of openings throughout the house. And presumably that air being pulled in from outside is hot; one typically does not use an air conditioner on cool days.

The actual effect of this air conditioner is to make the space right in front of the air conditioner nice and cool, but fill the rest of the house with hot outdoor air. Probably not what one wants from an air conditioner!

Ok, that’s amusing, but the point of this post is not physics-101 level case studies in how not to build an air conditioner. The real fact of interest is that this is apparently the top rated new air conditioner on Amazon. How does such a bad design end up so popular?

One aspect of the story, presumably, is fake reviews. That phenomenon is itself a rich source of insight, but not the point of this post, and definitely not enough to account for the popularity of this air conditioner. The reviews shown on the product page are all “verified purchase”, and mostly 5-stars. There are only 4 one-star reviews (out of 104). If most customers noticed how bad this air conditioner is, I do not think a 4.7 rating would be sustainable. Customers actually do like this air conditioner.

And hey, this air conditioner has a lot going for it! There’s wheels on the bottom, so it’s very portable. Setup is super easy - only one hose to the window, much less fiddly than those two-hose designs where you attach one hose and the other pops off.

Sure, the air conditioner has a major problem, but it’s not a major problem which most people will notice. They may notice that most of the house is still hot, but the space right in front of the air conditioner will be cool, so obviously the air conditioner is doing its job. Very few people will realize that the air conditioner is drawing hot air into the rest of the house. (Indeed, I saw zero reviews which mentioned that the air conditioner pulls hot air into the house - even the 1-star reviewers apparently did not realize why the air conditioner was so bad.)

[EDIT: several commenters seem to think that I'm claiming this air conditioner does not work at all, so I want to clarify that it will still cool down a room on net. If the air inside is all perfectly mixed together, it will still end up cooler with the air conditioner than without. The point is not that it doesn't work at all. The point is that it's stupidly inefficient in a way which I do not think consumers would plausibly choose over the relatively-low cost of a second hose if they recognized the problems.]

Generalization

Major problems are only fixed when those problems are obvious. Problems which most people won’t notice (or won’t attribute correctly) tend to stick around. There’s no economic incentive to fix them.

And in practice, there are plenty of problems which most people won’t notice. A few more examples:

… and presumably this extends to lots of other industries which I’m less familiar with.

Two points to highlight here:

How Does This Relate To Takeoff Speeds?

There’s a common view that, as long as AI does not take off too quickly, we’ll have time to see what goes wrong and iterate on it. It's a view with a lot of intuitive outside-view appeal: AI will work just like other industries. We try stuff, see what goes wrong, fix it. It worked like that in all the other industries, presumably it will work like that in AI too.

The point of the air conditioner is that other industries do not, in fact, work like that. Other industries are absolutely packed with major problems which are not fixed because they’re not obvious. Even assuming that AI does not take off quickly (itself a dubious assumption at best), we should expect the same to be true of AI.

… But Won’t Big Problems Be Obvious?

Most industries have major problems which aren’t fixed because they’re not obvious. But these problems can only be so bad. If they were really disastrous, the disasters would be obvious. Why not expect the same from AI?

Because AI will eventually be far more capable than human industries. It will, by default, optimize way harder than human industries are capable of optimizing.

What does it look like, when the optimization power is turned up to 11 on something like the air conditioner problem? Well, it looks really good. But all the resources are spent on looking good, not on actually being good. It’s “Potemkin village world”: a world designed to look amazing, but with nothing behind the facade. Maybe not even any living humans behind the facade - after all, even generally-happy real humans will inevitably sometimes appear less-than-maximally “good”.

… But Isn’t Solving The Obvious Problems Still Valuable?

The nonobvious problems are the whole reason why AI alignment is hard in the first place.

Think about the “game tree” of alignment - the basic starting points, how they fail, what strategies address the failures, how those fail, etc. The most basic starting points are generally of the form “collect data from humans on which things are good/bad, then train something to do good stuff and avoid bad stuff”. Assuming such a strategy could be implemented efficiently, why would it fail? Well:

(Somewhat more detail on these failure modes here.) Optimizing for things which look “good” to humans obviously raises exactly the sort of failure which the air conditioner points to. Failure of systems to generalize in “good” ways is less centrally about obviousness, but note that if it were obvious that the system were going to generalize badly, this would also be a pretty easy issue to solve: just don’t deploy the system if it will generalize badly. Problem is, we can’t tell whether a system will do what we want in deployment just by looking at what it does in training; we can’t tell by looking at the system's behavior whether there’s problems in there.

Point is: problems which are highly visible to humans are already easy, from an alignment perspective. They will probably be solved by default. There’s not much marginal value in dealing with them. The value is in dealing with the problems which are hard to recognize.

Corollary: alignment is not importantly easier in slow-takeoff worlds, at least not due to the ability to iterate. The hard parts of the alignment problem are the parts where it’s nonobvious that something is wrong. That’s true regardless of how fast takeoff speeds are. And the ability to iterate does not make that hard part easier. Iteration mainly helps on the parts of the problem which were already easy anyway.

So I don't really care about takeoff speeds. The technical problems are basically similar either way.

... though admittedly I did not actually learn everything I need to know about takeoff speeds just from air conditioner ratings on Amazon. It took a lot of examples in different industries. Fortunately, there was no shortage of examples to hammer the idea into my head.