Hi — new here. I'm an NLP researcher, and for background, I would guess I fall on the skeptical side of the scaling hypothesis by LW standards. I was pointed to this by abergal. Here is some feedback:

1. On the question of economic value:

AFAIK, there are many factors other than raw performance on a benchmark (relating to interpretability, accountability/explainability, and integration with surrounding software) which may dominate the question of economic viability of AI systems, at least in the sub-99.99% accuracy regime (depending on application domain). Examples of what I mean:

1a. Even when humans are used to perform a task, and even when they perform it very effectively, they are often required to participate in rule-making, provide rule-consistent rationales for their decisions, and stand accountable (somehow) for their decisions. A great explicit example of this is the job of judges in the US justice system, but I think this is true to a lesser extent in most jobs. This is a more complex task than mapping input->output and it's not clear to me how approximating the i/o mapping relates to the ability of machines to replace humans in general (though surely it will be true for some tasks). Also, when automating, all accountability for decisions is concentrated on the creators and operators of the machine; since the liability for mistakes is higher, I think the reliability standards for adoption may also be higher.

1b. Integration with traditional software presents difficulties which also mean a very high bar for AI-based automation. Traditional software relies on reasoning with abstractions; failure of abstractions (i.e., the real-world semantics of an API contract) can have nonlinear effects on the outcome in a software system. So the ML component has to be accurate enough for its API to be relatively iron-clad. An example of how this actually looks in practice might be Andrej Karpathy's group in Tesla, based on what he said in this talk.

1c. For applications like task-oriented dialog, it's not enough to just output the right kind of text to a user; the right API actions must be taken and their output used to drive the conversation. Integration with software is hard, and advances in representations that facilitate this (for example, Semantic Machines's use of dataflow graphs) already may be a bottleneck for automation of these kinds of tasks. Once these bottlenecks are opened up, it's possible that the machine learning problems at issue may become much easier than the fully-general problem, as more of the business logic can be offloaded to traditional software. A parody-level example of this issue is Google Duplex, a sophisticated system that mimics a human making a reservation over the phone, which could be rendered unnecessary by adoption of reasonable software standards for making reservations online. Though perhaps that's also evidence that even our apparent bottlenecks are pretty vulnerable to brute-forcing with ML... (though I have not heard reports of how well it actually works in practice)

Anyway, the upshot of these points is that I suspect the regime of economic viability for lots of "general" AI tasks is either going to be in the very high regime near convergence (where your extrapolations might break down), or we're already near or past it and it's actually more-or-less constrained by other things (like social solutions for accountability issues, non-ML progress in software, or efficiency of current markets at producing software solutions). Another way of saying this is that I take benchmark performance trends with a big grain of salt as an indicator of automation potential.

2. On "how impressive are the benchmarks":

It's not obvious to me that being able to solve many tasks quite well is clearly of more general value than solving a few tasks much better, at least in this case. While it seems to be an indicator of generality, in the particular case of GPT-3's few-shot learning setting, the output is controlled by the language modeling objective. This means that even though the model may not catch on to the same statistical regularities as task-specific trained models do from their datasets, it essentially must rely on statistical regularities that are in common between the language modeling supervision and the downstream task. It stands to reason that this may impose a ceiling on model performance that is lower than human performance, or lower than what is achievable in the task-specific supervised case.

I don't know about Ajeya's report or the long/short horizon distinction, so I'm not sure if what I'm about to say is right, but: it seems to me that in your analysis relating to that report, GPT-3's status as a general-purpose "few-shot learner" is taken for granted. There is the alternative interpretation that it is simply a general-purpose language model, where even a Bayes-optimal language model may indeed be far from Bayes-optimal on a task (again assuming GPT-3's few-shot learning paradigm). (Also mind that the Bayes-optimal language model will vary based on its training data.) So it's not clear to me whether arguments about general-purpose learners would apply to GPT-3 as a few-shot learner, especially in the regime of near-human-level performance which is of concern here. They may apply better in the fine-tuned setting where the supervision more closely matches the task, but then we run again into the potential problem of insufficiently general solutions. And in that setting, SuperGLUE is already basically at human-level performance with the T5 model, but T5 fine-tuning doesn't seem to quite be at the point of "transformative AI", at least any more than BERT or the rest of the latest wave of progress. Rather, the limits of the benchmark have probably been more-or-less hit.

3. On the discussion of scaling up & limitations:

Especially as far as downstream task performance is concerned, data filtering could potentially play a role here. I would expect scaling laws to obtain w.r.t. dataset size when the distribution is similar, but there have been cases where filtering (thereby reducing dataset size) has been essential to learning a better model, at least in machine translation (in fact there was a whole shared task on it — this deals with extremely noisy corpora, but I'm pretty sure I've seen work that even showed gains from filtering what was thought to be "clean" training sets; unfortunately, I can't find it again...). It might not be as much of an issue here, because of the sheer model size and context length (so, maybe the noisy examples could be cordoned off in their own part of feature space and don't do anything worse than wasting time), but I'm not aware of any work looking at the data cleaning aspect. However there is work looking at domain adaptation in LM pretraining. So anyway, the model may just "figure it out" in the course of learning, but if not, especially if there were a general scheme to weigh and seek out input examples based on perceived quality towards learning a good/causally accurate representation of language (as in, e.g., Invariant Risk Minimization — though more progress is needed in this area) then the laws may end up looking different. At the same time the laws may also end up looking different just due to using different corpora; for example, Common Crawl afaik has all kinds of garbage in it. In general, collecting bigger and bigger corpora might mean scraping the bottom of the barrel and hitting a barrier due to quality issues. Alternatively, going massively multilingual (or multimodal) might alleviate that issue enough to hit some critical regime of high performance, depending on the level of cross-lingual and cross-modal generalization at play.

Oh yeah, one more thing which I think actually might be the most important point. On a lot of these benchmarks — at the very least, on SuperGLUE — "human-level performance" is a much weaker requirement than "human equivalence." Human performance isn't necessarily an indicator of irreducible entropy in the underlying task. To a large extent, it just reflects ambiguity or coarseness in the dataset specification. A big part of this is the artificial setting of the data annotation, which is unfortunately kind of necessary in a lot of cases when the goal is characterizing abstract language understanding. On the long tail of examples that require more careful reasoning, in the absence of an underlying business reason or extra context to guide people's judgments, they end up interpreting or construing the inputs differently, or applying the annotation guidelines differently, and disagreeing with each other on the output. Human-equivalence would mean sensitivity to all of the issues that lead a human to decide on one interpretation over another, but in the IID performance evaluation setting, these issues all basically wash out looking like noise. Then as long as the model can throw out a reasonable guess of one of the plausible labels in these cases, it will be hard to distinguish from a human. NLI/RTE are great examples of this; it is a notoriously difficult problem to specify, and recent work has shown a great deal of disagreement between annotators in this task setting (including bi-modal distributions indicative of explicit ambiguities in construal), to the point that it seems like supervised models probably have already more or less hit the noise ceiling, at least on the SNLI/MultiNLI datasets.

So for the most part when looking at these kinds of datasets — particularly data annotated in an artificial setting intended to capture something abstract and general about language — maximum performance on IID test sets is best seen not as the point of irreducible entropy (i.e., maximum performance on a task), but as a "noise ceiling" beyond which the particular dataset is incapable of distinguishing between models. See Schlangen for more on the relationship between data and task.
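To make the "noise ceiling" idea concrete, here is a minimal sketch with made-up numbers (the 20% ambiguity rate and the 50/50 split are assumptions for illustration, not measurements from SuperGLUE or any NLI dataset). It computes expected accuracy against a single gold label when some fraction of items admit two equally plausible labels.

```python
def expected_accuracy(ambiguous_fraction, p_match_on_ambiguous, p_correct_on_clear=1.0):
    """Expected accuracy against one gold label per item.

    ambiguous_fraction: share of items with more than one plausible label.
    p_match_on_ambiguous: chance of matching the gold label on those items.
    p_correct_on_clear: chance of being right on unambiguous items.
    """
    clear_part = (1.0 - ambiguous_fraction) * p_correct_on_clear
    ambiguous_part = ambiguous_fraction * p_match_on_ambiguous
    return clear_part + ambiguous_part


# Hypothetical setup: 20% of items have two equally plausible labels, and the
# gold label is simply whichever one the original annotator happened to pick.
AMBIGUOUS_FRACTION = 0.20

# A careful human who construes ambiguous items in their own (reasonable) way.
human = expected_accuracy(AMBIGUOUS_FRACTION, p_match_on_ambiguous=0.5)

# A model that outputs any one of the plausible labels on ambiguous items,
# with no sensitivity to why a human would prefer one reading over another.
guessing_model = expected_accuracy(AMBIGUOUS_FRACTION, p_match_on_ambiguous=0.5)

print(f"human:          {human:.2f}")
print(f"guessing model: {guessing_model:.2f}")
```

Under these assumptions both score about 0.90, so an IID test set of this kind cannot tell the two apart even though only one of them is doing anything like human construal.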

It's an open question how to more carefully and accurately benchmark something resembling human-equivalence, but pretty good concrete examples of how one might try to do this include Contrast Sets and adversarial evaluation. Contrast sets look at model behavior under perturbation of inputs, testing the sensitivity of the decision function to certain changes; adversarial evaluation involves explicitly searching for evaluation items on which humans agree and the model is incorrect. In theory, these sorts of evaluations will do a much better job of evaluating model robustness under distribution shift or in the course of interaction with real users; they pose what amounts to a much tighter constraint on the decision function learned by the model. Indeed, in practice models do much worse under these evaluations than traditional IID ones (at least, for many tasks). This is expected, since pretty much all models these days are trained using empirical risk minimization (i.e., the IID assumption). GPT-3's few-shot learning setting is partly interesting because it does not use the IID assumption, instead using a language modeling assumption (which lets it leverage lots of what it learned, but may indeed impose other constraints on its output).
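As a rough illustration of how a contrast-set style evaluation differs from plain accuracy, here is a toy sketch. The `toy_model` keyword rule and the four sentences are hypothetical stand-ins invented for this example, not taken from the Contrast Sets paper; the metric computed is the fraction of contrast sets on which every member is classified correctly.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]  # (input text, expected label)


def toy_model(text: str) -> str:
    """Hypothetical brittle classifier: says 'positive' iff it sees 'good'."""
    return "positive" if "good" in text.lower() else "negative"


def accuracy(model: Callable[[str], str], examples: List[Example]) -> float:
    """Ordinary per-example accuracy."""
    return sum(model(x) == y for x, y in examples) / len(examples)


def contrast_consistency(model: Callable[[str], str],
                         contrast_sets: List[List[Example]]) -> float:
    """Fraction of contrast sets where the model gets every example right."""
    solved = sum(all(model(x) == y for x, y in cs) for cs in contrast_sets)
    return solved / len(contrast_sets)


# Each set holds an original item followed by a minimally perturbed variant
# whose label flips.
contrast_sets = [
    [("The food was good.", "positive"), ("The food was not good.", "negative")],
    [("A good, tense thriller.", "positive"), ("A dull, slack thriller.", "negative")],
]
originals = [cs[0] for cs in contrast_sets]

print("accuracy on originals:", accuracy(toy_model, originals))
print("contrast consistency: ", contrast_consistency(toy_model, contrast_sets))
```

The keyword rule is perfect on the original items but solves only half of the contrast sets, because the negated sentence still contains "good". That gap is the kind of thing an IID test set drawn from the originals would never show.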

But still, ANLI is an example of an approach to adversarial evaluation, and it is far at the bottom of your graphs in terms of GPT-3's performance. Also notice that running GPT-3 on ANLI is not technically an adversarial evaluation; the full evaluation process for ANLI would involve (human) searching for examples that GPT-3 will get wrong, evaluating on those, feeding them back in for training, etc.; even the modest uptick at the end for GPT-3 might disappear when doing a true adversarial evaluation.

So all that is to say I would also look at the relationship between human-level performance on a benchmark and human-equivalent performance on a task with a big grain of salt. Most of our datasets are really bad at assessing human-equivalence in the high-performance regime, and our models only recently got good enough for this to become a problem (and it is a problem which is now the subject of a lot of attention in the field). This is much less of an issue when you're using supervised ML for some business purpose where your training and test sets are essentially the same distribution and IID, and your labels directly manifest your business need. But it's a big problem for "general language understanding."

Thanks! I agree that if we required GPT-N to beat humans on every benchmark question that we could throw at them, then we would have a much more difficult task.

I don't think this matters much in practice, though, because humans and ML are really differently designed, so we're bound to be randomly better at some things and randomly worse at some things. By the time ML is better than humans at all things, I think they'll already be vastly better at most things. And I care more about the point when ML will first surpass humans at most things. This is most clearly true when considering all possible tasks (e.g. "when will AIs beat humans at surviving on a savannah in a band of hunter-gatherers?"), but I think it's also true when considering questions of varying difficulty in a fairly narrow benchmark. Looking at the linked papers, I think contrast sets seem like a fair challenge; but I suspect that enough rounds of ANLI could yield questions that would be very rare in a normal setting [1].

To make that a little bit more precise, I want to answer the question "When will transformative AI be created?". Exactly what group of AI technologies would or wouldn't be transformative is an open question, but I think one good candidate is AI that can do the vast majority of economically important tasks cheaper than a human. If I then adopt the horizon-length frame (which I find plausible but not clearly correct), the relevant question for GPT-N becomes "When will GPT-N be able to perform (for less cost than a human) the vast majority of all economically relevant sub-tasks with a 1-token horizon length?"

This is an annoyingly vague question, for sure. However, I currently suspect it's more fruitful to think about this from the perspective of "How high reliability do we need for typical jobs? How expensive would it be to make GPT-N that reliable?" than to think about this from the perspective of "When will we be unable to generate questions that GPT-N fails at?"

Another lens on this is to look at tasks that have metrics other than how well AI can imitate humans. Computers beat us at chess in the 90s, but I think humans are still better in some situations, since human-AI teams do better than AIs alone. If we had evaluated chess engines on the metric of beating humans in every situation, we would have overestimated the point at which AIs beat us at chess by at least 20 years.

(Though in the case of GPT-N, this analogy is complicated by the fact that GPT-3 doesn't have any training signal other than imitating humans.)

[1] Though, being concerned about safety, I would be delighted if people became very serious about adversarial testing.

I guess my main concern here is (besides everything I wrote in my reply to you below) basically that reliability of GPT-N on simple, multiclass classification tasks lacking broader context may not be representative of its reliability in real-world automation settings. If we're to take SuperGLUE as representative, well... it's already basically solved.

One of the problems here is that when you have the noise ceiling set so low, like it is in SuperGLUE, reaching human performance does not mean the model is reliable. It means the humans aren't. It means you wouldn't even trust a human to do this task if you really cared about the result. Coming up with tasks where humans can be reliable is actually quite difficult! And making humans reliable in the real world usually depends on them having an understanding of the rules they are to follow and the business stakes involved in their decisions — much broader context that is very difficult to distill into artificial annotation tasks.

So when it comes to reliable automation, it's not clear to me that just looking at human performance on difficult benchmarks is a reasonable indicator. You'd want to look at reliability on tasks with clear economic viability, where the threshold of viability is clear. But the process of faithfully distilling economically viable tasks into benchmarks is a huge part of the difficulty in automation in the first place. And I have a feeling that where you can do this successfully, you might find that the task is either already subject to automation, or doesn't necessarily require huge advances in ML in order to become viable.

Thank you, this is very useful! To start out with responding to 1:

1a. Even when humans are used to perform a task, and even when they perform it very effectively, they are often required to participate in rule-making, provide rule-consistent rationales for their decisions, and stand accountable (somehow) for their decisions

I agree this is a thing for judges and other high-level decisions, but I'm not sure how important it is for other tasks. We have automated a lot of things in the past couple hundred years with unaccountable machines and unaccountable software, and the main difference with ML seems to be that it's less interpretable. Insofar as humans like having reasons for failures, I'm willing to accept this as one reason that reliability standards could be a bit higher for ML, but I doubt it would be drastically higher. I'd love a real example (outside of criminal justice) where this is a bottleneck. I'd guess that some countries will have harsh regulations for self-driving cars, but self-driving does carry a real risk of killing people, so the bar there is higher than for most applications.

1b. Integration with traditional software presents difficulties which also mean a very high bar for AI-based automation. (...) example of how this actually looks in practice might be Andrej Karpathy's group in Tesla, based on what he said in this talk.

I liked the talk! I take it as evidence that it's really hard to get >99.99% accuracy, which is a big problem when your neural network is piloting a metric ton of metal at high speeds in public spaces. I'm not sure how important reliability is in other domains, though. Your point "failure of abstractions can have nonlinear effects on the outcome in a software system" is convincing for situations when ML is deeply connected with other applications. I bet there's a lot of cool stuff that ML could do there, so the last 0.01% accuracy could definitely be pretty important. An error rate of 0.1%-1% seems fine for a lot of other tasks, though, including all examples in Economically useful tasks.

- For ordering expensive stuff, you want high reliability. But for ordering cheap stuff, 0.1%-1% error rate should be ok? That corresponds to getting the wrong order once a year if you order something every day.

- 0.1%-1% error rate also seems fine for personal assistant work, especially since you can just personally double-check any important emails before they're sent, or schedule any especially important meeting yourself.

- Same thing for research assistant work (which – looking at the tasks – actually seems useful to a lot of non-researchers too). Finding 99% of all relevant papers is great; identifying 99% of trivial errors in your code is great; writing routine code that's correct 99% of the time is great (you can just read through it or test it); reading summaries that have an error 1% of the time is a bit annoying, but still very useful.

(Note that a lot of existing services have less than 99% reliability, e.g. the box on top of Google Search, Google Translate, spell check, etc.)
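For reference, the per-year arithmetic behind the error rates in the list above can be worked out directly. This is a minimal sketch assuming one independent action per day and a 365-day year (both simplifying assumptions); the function names are made up for the example.

```python
DAYS_PER_YEAR = 365


def expected_errors_per_year(error_rate: float, actions_per_day: float = 1.0) -> float:
    """Expected number of mistakes per year at a fixed per-action error rate."""
    return error_rate * actions_per_day * DAYS_PER_YEAR


def years_between_errors(error_rate: float, actions_per_day: float = 1.0) -> float:
    """Average time between mistakes, in years."""
    return 1.0 / expected_errors_per_year(error_rate, actions_per_day)


for rate in (0.001, 0.01):
    per_year = expected_errors_per_year(rate)
    gap = years_between_errors(rate)
    print(f"error rate {rate:.1%}: {per_year:.3f} errors/year, "
          f"one every {gap:.2f} years")
```

With one order a day, a 0.1% error rate works out to roughly one wrong order every 2.7 years and a 1% error rate to roughly 3.65 wrong orders a year, so "once a year" sits inside that range (it corresponds to an error rate of about 0.27%).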

Also, many benchmarks are already filtered for being difficult and ambiguous, so I expect 90% performance on most of them to correspond to at least 99% performance in ordinary interactions. I'd be curious if you (and other people) agree with these intuitions?

Re API actions: Hm, this seems a lot easier than natural language to me. Even if finetuning a model to interact with APIs is an annoying engineering task, it seems like it should be doable in less than a year once we have a system that can handle most of the ambiguities of natural language (and benchmarks directly test the ability to systematically respond in a very specific way to a vague input). As with Google Duplex, the difficulty of interacting with APIs is upper-bounded by the difficulty of interacting with human interfaces (though to be fair, interactions with humans can be more forgiving than interfaces-for-humans).

On 1a:

Insofar as humans like having reasons for failures, I'm willing to accept this as one reason that reliability standards could be a bit higher for ML, but I doubt it would be drastically higher. I'd love a real example (outside of criminal justice) where this is a bottleneck.

Take for example writing news / journalistic articles. Indistinguishability from human-written articles is used as evidence for GPT's abilities. The abilities are impressive here, but the task at hand for the original writer is not to write an article that looks human, but one that reports the news. This means deciding what is newsworthy, aggregating evidence, contacting sources, and summarizing and reporting the information accurately. In addition to finding and summarizing information (which can be reasonably thought of as a mapping from input -> output), there is also the interactive process of interfacing with sources: deciding who to reach out to, what to ask them, which sources to trust on what, and how to report and contextualize what they tell you in an article (forgetting of course the complexity of goal-oriented dialogue when interviewing them). This process involves a great deal of rules: mutual understanding with sources about how their information will be represented, an understanding of when to disclose sources and when not to, careful epistemics when it comes to drawing conclusions on the basis of the evidence they provide and representing the point of view of the news outlet, etc.; it also involves building relationships with sources and with other news outlets, conforming to copyright standards, etc.; and the news outlet has a stake in (and accountability for) all of these elements of the process, which is incumbent on the journalist. Perhaps you could try and record all elements of this process and treat it all as training data, but the task here is so multimodal, stateful, and long-horizon that it's really unclear (at least to me) how to reduce it to an I/O format amenable to ML that doesn't essentially require replicating the I/O interface of a whole human. Reducing it to an ML problem seems itself like a big research problem (and one having more to do with knowledge representation and traditional software than ML).

If you put aside these more complex features of the problem, the task reduces to basically paraphrasing and regurgitating the information already available in other printed sources. And for all intents and purposes, that's already easily automated (and pretty much is in practice), whether by direct republication of AP reports or plagiarism of articles onto clickbait websites. Perhaps improved automation in retrieval, articulation, and summarization of information can make the journalist more efficient and productive, but what percentage of their work will actually be automated? All of the really important stuff is the stuff where it's not obvious how to automate. So it's very hard for me to see how the journalist would be replaced, or how their role would be transformed, without a lot more progress on things other than function approximation.

I think similar concerns apply to management, accounting, auditing, engineering, programming, social services, education, etc. It doesn't seem to me that we're near the end of any of these fields being eaten even by traditional software. And I can imagine many ways in which ML can serve as a productivity booster in these fields but concerns like the ones I highlighted for journalism make it harder for me to see how AI of the sort that can sweep ML benchmarks can play a singular role in automation, without being deployed along a slate of other advances.

On 1b and economically useful tasks: you mention customer service, personal assistant, and research assistant work.

For ordering expensive stuff, you want high reliability. But for ordering cheap stuff, 0.1%-1% error rate should be ok? That corresponds to getting the wrong order once a year if you order something every day.

I think I see what you're saying here for routine tasks like ordering at a drive-through. At the same time, it isn't that hard to automate ordering using touch screens, which quite a few restaurants are doing as well (though admittedly I don't know of this happening in drive-throughs). I guess if this function was totally automated by default then these places could reduce their staff a bit. But beyond the restaurant setting, retail ordering, logistics, and delivery seem already pretty heavily automated by, e.g., the likes of Amazon. For more complex, less routine kinds of customer service, I'll admit that I don't know exactly what challenges are involved, but this falls basically into the category of interfacing between a human and a complex API (where it's usually not obvious how to structure the API; will revisit that below). So it's hard for me to see what exactly could be "transformative" here.

For personal assistant and research assistant work, it also seems to me that an incredible amount of this is already automated. In both of these settings there is not necessarily an objective ground truth against which you can measure accuracy; it's more about achieving outcomes that are favored by the user. Consider the organization of our digital world by companies like Google, TripAdvisor, Yelp, Google, Semantic Scholar, ...and did I mention Google? If you're flexible with what kind of outcomes you're willing to accept, simply Googling your question gets you extremely far. As ML advancements continue, outcomes will continue to improve, but at what point are the results any more "transformative" than they already have been? Would it be the point at which the user is blindly willing to trust the judgment of the system, and no longer inclined to sift through multiple results, re-query, and evaluate for themselves? That certainly already happens for me sometimes, and seems to me to a large extent to be a factor of the level of transparency in the system and trust that the user has in it to align with their goals (as well as how painful the interface is to use — a perverse incentive if you were to optimize directly for this notion of "transformative"). This is why, for example, I'm more likely to go with a Consumer Reports recommendation than the first Google result. But even then I read plenty for more details, and I give Consumer Reports credence based on the assumption that their report is derived from actual, real-world experience with a product. Can that be automated? We'd need robots testing and rating products. Again, here, I'm not sure exactly what "transformation" by powerful function approximation alone would look like.

Also, many benchmarks are already filtered for being difficult and ambiguous, so I expect 90% performance on most of them to correspond to at least 99% performance in ordinary interactions. I'd be curious if you (and other people) agree with these intuitions?

No, I disagree. Benchmarks are filtered for being easy to use, and useful for measuring progress — the ones that gain wide adoption are the ones where 1) models are easy to implement, 2) basic solutions serve as a reasonable starting point, and 3) gains are likely to be possible from incremental advances. So they should be difficult, but not too difficult. If it is too hard to implement models or make progress, nobody will use the benchmark. If it is too easy, it'll be out of date way too fast. Only very recently has this started to change with adversarial filtering and evaluation, and the tasks have gotten much more ambitious, because of advances in ML. But even many of these ambitious datasets turn out ultimately to be gameable, and the degree to which filtering/adversarial methods work is variable & depends on task and methodology. NLI was notorious for this issue: models would get high scores on datasets like MultiNLI, but then you play with one of these models for no more than 5 minutes to find that it's completely broken and inconsistent even for seemingly-easy examples. There's reason to think ANLI improves on this issue at least somewhat (and indeed it proves to be tougher on models). But still, a lot of the tasks which are harder are ultimately artificial: things like SWAG, (adversarial) NLI, Winogrande, etc. are simplified tasks which have unclear economic value.

In SuperGLUE, tasks were chosen where there seemed to be a lot of headway remaining between a BERT baseline and human performance, but there was another constraint — all of the tasks had to be formulated as relatively simple multiclass classification problems over sentences or sentence pairs. This constraint was to facilitate the development of general models which can easily be tested on all of the tasks in the benchmark using the same API, bringing the focus away from task-specific engineering. But in practice many things you want to do with language in an ML system, and especially things like dialog, have much more complex APIs.

Re API actions: Hm, this seems a lot easier than natural language to me. Even if finetuning a model to interact with APIs is an annoying engineering task, it seems like it should be doable in less than a year once we have a system that can handle most of the ambiguities of natural language (and benchmarks directly test the ability to systematically respond in a very specific way to a vague input). As with Google Duplex, the difficulty of interacting with APIs is upper-bounded by the difficulty of interacting with human interfaces (though to be fair, interactions with humans can be more forgiving than interfaces-for-humans).

I don't think this is really about "solving the ambiguity in NL" versus "solving API actions". The problem is mapping between the two in a way that naturally follows the structure of the conversation, drives it along, and produces the desired ultimate outcome in a business context. This kind of task is much more complex than multiclass classification, which is used in pretty much all benchmarks, because the size of the output space is exponential in the size of the API surface; it's outputting full, executable programs.
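A back-of-the-envelope count shows how quickly the output space grows once the output is a program rather than a class label. The sketch below uses a deliberately crude model that is an assumption of this example, not a description of any real dialog system: a "program" is a sequence of at most `max_calls` calls drawn from `num_api_functions` endpoints, with arguments and composition ignored (including them would only make the count larger).

```python
def count_programs(num_api_functions: int, max_calls: int) -> int:
    """Number of call sequences of length 1..max_calls, ignoring arguments."""
    return sum(num_api_functions ** k for k in range(1, max_calls + 1))


# For comparison: a typical NLI benchmark item has three possible outputs
# (entailment / neutral / contradiction).
NUM_NLI_CLASSES = 3

# Hypothetical API sizes and program lengths, chosen only for illustration.
for n_functions, n_calls in [(10, 3), (50, 3), (50, 5)]:
    total = count_programs(n_functions, n_calls)
    print(f"{n_functions} endpoints, up to {n_calls} calls: "
          f"{total:,} candidate programs (vs {NUM_NLI_CLASSES} classes)")
```

Even this stripped-down count reaches 1,110 candidates for 10 endpoints and 3 calls, and over 300 million for 50 endpoints and 5 calls, at every turn of the dialog.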

In a task-oriented dialog setting, programs need to be synthesized and executed at every round of the dialog. When receiving a command, a program generally must be synthesized and executed to produce an outcome (or not, in case the command was ambiguous and the system needs to ask follow-up questions or clarifications). When generating a response, programs need to be synthesized and executed in order to extract whatever information needs to be presented to the user (and this information then serves as input which must be used correctly in the NLG step). These programs may need to draw on portions of programs or natural language entities which appeared much earlier in the interaction. The API needs to be structured in a way such that programs can accurately summarize the actions that need to be taken at each step of the dialog, and then training data needs to be collected that ties these API calls to natural language interactions. Architecting this entire process is extremely nontrivial. And even then, there is not only a huge output space of possible programs at each step, but there is also a huge amount of information in the input that needs to be represented and sifted through (i.e., all of the natural language and program content from the previous rounds of interaction). So the learning problem is hard too, but it's not even obviously the hardest part of the process in practice.

I haven't worked in a call center, but consider what a customer service representative does. They generally are interfacing with a computer system that they navigate in order to find the information you're looking for and present it to you; they then have some set of actions that they may be able to take in that computer system on your behalf. From everything I have gleaned in my interaction with customer service representatives over the years, these computer systems are very complicated and opaque, badly designed and hard even for trained humans to successfully use. Now consider that in order to automate customer service, the entire space of interactions the representative may have with the system (and other representatives) on the customer's behalf would need to be represented in a single uniform API, augmented even further with programmatic reification of company policy (e.g., around what kind of information is okay to disclose to the consumer). But then, once this herculean task is accomplished, is it actually more useful to have a natural language customer service bot, or just a webpage which allows the customer to view this information and execute actions at their leisure? Indeed, we have subscriptions, package tracking numbers, delivery status, order cancellation, and all sorts of stuff easily viewable and interactable online — things which in the past you may have needed to call in and ask a human about. With the proper software infrastructure, it's not clear how much benefit imitating humans actually provides — because one of humans' principal benefits is their ability to navigate byzantine and unpredictable systems across many modalities. As soon as an API is comprehensible to an ML system, it becomes comprehensible to traditional software.

So I guess that I'm not really sure what the vision for transformative AI really looks like. To overcome these difficulties it would have to be inherently cross-modal, cross-contextual, and adaptable in ways that are simply not measured by our benchmarks at all, and might essentially require crude emulation of humans. If the vision is something like Manna-style transformative automation, there still seem to be tremendous bottlenecks in software architecting, standardization of business practices, and robotic automation. Once these things are established, it seems to me that the contribution of ML as function approximation is fairly flexible (i.e., smoothly increasing productivity with better performance), as you suggest for some of the use cases. But the transformation aspect there would fall squarely into the realm of Software 1.0, at least until the robots catch up. Why was Facebook's "M" assistant discontinued? I have no idea, but knowing more about that seems relevant here.

Anyway I feel like that was kind of a ramble. I spent a lot of time there on dialog systems but my concerns aren't really specific to those. I hope that gives a general sense of my concerns about the relationship between our benchmarks and general, transformative AI.

Benchmarks are filtered for being easy to use, and useful for measuring progress. (...) So they should be difficult, but not too difficult. (...) Only very recently has this started to change with adversarial filtering and evaluation, and the tasks have gotten much more ambitious, because of advances in ML.

That makes sense. I'm not saying that all benchmarks are necessarily hard, I'm saying that these ones look pretty hard to me (compared with ~ordinary conversation).

many of these ambitious datasets turn out ultimately to be gameable

My intuition is that this is far less concerning for GPT-3 than for other models, since it gets so few examples for each benchmark. You seem to disagree, but I'm not sure why. In your top-level comment, you write:

While it seems to be an indicator of generality, in the particular case of GPT-3's few-shot learning setting, the output is controlled by the language modeling objective. This means that even though the model may not catch on to the same statistical regularities as task-specific trained models do from their datasets, it essentially must rely on statistical regularities that are in common between the language modeling supervision and the downstream task.

If for every benchmark, there were enough statistical regularities in common between language modeling supervision and the benchmark to do really well on them all, I would expect that there would also be enough statistical regularities in common between language modeling supervision and whatever other comparably difficult natural-language task we wanted to throw at it. In other words, I feel more happy about navigating with my personal sense of "How hard is this language task?" when we're talking about few-shot learning than when we're talking about finetuned models, because finetuned models can entirely get by with heuristics that only work on a single benchmark, while few-shot learners use sets of heuristics that cover all tasks they're exposed to. The latter seem far more likely to generalise to new tasks of similar difficulty (no matter if they do it via reasoning or via statistics).

You also write "It stands to reason that this may impose a lower ceiling on model performance than human performance, or that in the task-specific supervised case." I don't think this is right. In the limit of training on humans using language, we would have a perfect model of the average human in the training set, which would surely be able to achieve human performance on all tasks (though it wouldn't do much better). So the only questions are:

- How fast will more parameters + more data increase performance on the language modeling task? (Including: Will performance asymptote before we've reached the irreducible entropy?)

- As the performance on language modeling increases, in what order will the model master what tasks?

There are certainly some tasks where the parameter+data requirements are far beyond our resources; but I don't see any fundamental obstacle to reaching human performance.

I think this is related to your distinction between a "general-purpose few-shot learner" and a "general-purpose language model", which I don't quite understand. I agree that GPT-3 won't achieve Bayes-optimality, so in that sense it's limited in its few-shot learning abilities; but it seems like it should be able to reach human-level performance through pure human-imitation in the limit of excelling on the training task.

I'm not saying that all benchmarks are necessarily hard, I'm saying that these ones look pretty hard to me (compared with ~ordinary conversation).

I'm not sure exactly what you mean here, but if you mean "holding an ordinary conversation with a human" as a task, my sense is that it is extremely hard to do right (much harder than, e.g., SuperGLUE). There's a reason that it was essentially proposed as a grand challenge of AI; in fact, it was abandoned once it was realized that actually it's quite gameable. This is why the Winograd Schema Challenge was proposed, but even that and newly proposed versions of it have seen lots of progress recently. At the end of the day, it turns out to be hard to write very difficult tests even in the WSC format, for all the reasons related to shallow heuristic learning etc.; the problem is that our subjective assessment of the difficulty of a dataset generally assumes the human means of solving it and associated conceptual scaffolding, which is no constraint for an Alien God.

So to address the difference between a language model and a general-purpose few-shot learner:

In other words, I feel more happy about navigating with my personal sense of "How hard is this language task?" when we're talking about few-shot learning than when we're talking about finetuned models, because finetuned models can entirely get by with heuristics that only work on a single benchmark, while few-shot learners use sets of heuristics that cover all tasks they're exposed to. The latter seem far more likely to generalise to new tasks of similar difficulty (no matter if they do it via reasoning or via statistics).

I agree that we should expect its solutions to be much more general. The question at issue is: how does it learn to generalize? It is basically impossible to fully specify a task with a small training set and brief description — especially if the training set is only a couple of items. With so few examples, generalization behavior is almost entirely a matter of inductive bias. In the case of humans, this inductive bias comes from social mental modeling: the entire process of embodied language learning for a human trains us to be amazing at figuring out what you mean from what you say. In the case of GPT's few-shot learning, the inductive bias comes entirely from a language modeling assumption, that the desired task output can be approximated using language modeling probabilities prefixed with a task description and a few I/O examples.
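The language modeling assumption can be sketched roughly as follows. Everything here is illustrative: `build_prompt`, `few_shot_predict`, and the `Input:`/`Output:` template are hypothetical names and conventions, and `toy_log_prob` is a deliberately crude stand-in for a real language model's scoring function, not how GPT-3 computes probabilities.

```python
import math
from typing import Callable, Sequence, Tuple


def build_prompt(description: str,
                 examples: Sequence[Tuple[str, str]],
                 query: str) -> str:
    """Lay out a task description, a few I/O pairs, and the new input as plain text."""
    lines = [description, ""]
    for example_input, example_output in examples:
        lines += [f"Input: {example_input}", f"Output: {example_output}", ""]
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


def few_shot_predict(log_prob: Callable[[str, str], float],
                     description: str,
                     examples: Sequence[Tuple[str, str]],
                     query: str,
                     candidates: Sequence[str]) -> str:
    """Pick the candidate continuation that the scorer finds most probable."""
    prompt = build_prompt(description, examples, query)
    return max(candidates, key=lambda c: log_prob(prompt, " " + c))


def toy_log_prob(prompt: str, continuation: str) -> float:
    """Assumed toy scorer: favors strings that already occur often in the prompt."""
    return math.log(1 + prompt.count(continuation.strip()))


if __name__ == "__main__":
    shots = [("great movie", "positive"),
             ("loved it", "positive"),
             ("terrible", "negative")]
    print(few_shot_predict(toy_log_prob,
                           "Classify the sentiment of each review.",
                           shots, "fine", ["positive", "negative"]))
```

The toy scorer answers "positive" for any query, simply because that string occurs more often in the prompt. A real language model is vastly smarter, but the structure is the same: the prediction is whatever the scorer's own bias makes of the prompt as text.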

This gets us an incredible amount of mileage. But why, and how far will it get us? Well, you suggest:

In the limit of training on humans using language, we would have a perfect model of the average human in the training set, which would surely be able to achieve human performance on all tasks (though it wouldn't do much better).

I don't think this makes sense. If the data was gathered by taking humans into rooms, giving them randomly sampled task instructions, a couple input/output examples, and setting them loose, then maybe what you say would be true in the limit in some strict sense. But I don't think it makes sense here to say the "average human" is captured in the training set. A language model is trained on found text. There is a huge variety of processes that give rise to this text, and it is the task of the system to model those processes. When it is prompted with a task description and I/O examples, it can only produce predictions by making sense of that prompt as found text, implicitly assigning some kind of generative process to it. In some sense, you can view it as doing an extremely smart interpolation between the texts that it's seen before.

(Maybe you disagree with this because you think big enough data would include basically all possible such situations. I don't think that is really right morally speaking, because I think the relative frequency of rarer and trickier situations (or underlying cognitive factors to explain situations, or whatever) in the LM setting will probably drop off precipitously to the point of being only infinitesimally useful for the model to learn certain kinds of nuanced things in training. If you were to control the data distribution to fix this, well then you're not really doing language modeling but controlled massive multitask learning, and regardless, the following criticisms still apply.)

It is utterly remarkable how powerful this approach is and how well it works for "few-shot learning." A lot of stuff can be learned from found text. But the approach has limits. It is incumbent on the person writing the prompt to figure out how to write it to coax the desired behavior out of the language model, whether the goal is to produce advice about dealing with bears, or avoid producing answers to nonsense questions. It is very easy to imagine how the language modeling assumption will produce reasonable and cool outputs for lots of tasks, but its output in corner cases might wildly vary depending on precise and unpredictable features of the instructions. The problem of crafting a "representative training set" has simply been replaced by another problem, of crafting "representative prompts." It is very hard, at least for me, to imagine how the language modeling assumption will lead to the model behaving truly reliably in corner cases — when the standards get higher — without relying on the details of prompt selection in exactly the way that supervised models rely on training set construction. I have no reason to expect the language model to just know what I mean.

Another problem here, though, is we don't know what we mean either. In the course of, erm, producing economic value, when we hit a case where the instructions are ambiguous, we leverage everything else which was not in the instructions (understanding of broader social goals, ethical standards, systems of accountability to which we're subject, mental modeling of the rule writer, etc.) to decide how to handle it. GPT-3's few-shot learning uses the language modeling assumption as a stand-in for this. It's cool that they align in places; for example, the performance breakdown in the GPT-3 paper for the most part indicates that they align well on factual indexing and recall (which is where much of the gains were). But in general I would not expect them to be aligned, and I would expect the misalignment to be exposed to a greater degree as language models get better and standards get higher. Ultimately the language model serves as a very powerful starting point, but the question of how to reliably align it with an external goal remains open (or involves supervised learning, which leads us back to where we started but with a much beefier model). I find that the conception of GPT-3 as a general-purpose few-shot learner, as opposed to just a very powerful language model, tends to let all of these complexities get silently swept under the rug.

It's worth dwelling on the "we don't know what we mean" point a bit more. Because the same point applies to supervised learning; indeed, it's precisely the reason that we end up developing datasets that we think require human-like skills, but which actually can be gamed and solved without them. So you might think what I'm saying here could be applied to just about any model and any benchmark: that perhaps we can extrapolate performance trends on the benchmark, but reaching the point of saturation indicates less that the underlying problems are solved in a way that generalizes beyond the benchmark, and more that the misalignment between the benchmark and the underlying goal is now laid bare.

And... that is basically my argument. Unless you're optimizing for the exact thing that you care about, then Moloch will get your babies in the end. The thing is, most benchmarks were never intended for this kind of extrapolation (i.e., beyond the precise scope of the data). And the ones that were — for the most part, slated ambitiously as Grand Challenges of AI — have all been essentially debunked. The remaining reasonable use of a benchmark is to allow empirical comparisons between current systems, thereby facilitating progress in the best way we know how. When a benchmark becomes saturated, then we can examine why, and use the lessons to construct the next benchmark, and general progress is — ideally — steadily made.

Consider the Penn Treebank. This was one of the first big corpora of annotated English text used for NLP, constructed using newswire text from the Wall Street Journal written in the 80s. It centered on English syntax, and a lot of work focused on modeling the Penn Treebank with the idea that if you could get a model to produce correct syntactic analyses, i.e., understand syntax, then it could serve as a solid foundation for higher levels of language understanding, just as we think of it happening in humans. These days, error rates for models on Penn Treebank syntax are below estimated annotation error in gold — i.e., the dataset is solved. But syntax is not solved, in any meaningful sense, and the reasons why extend far beyond the standard problems with shallow heuristics. The thing is, even if we have "accurate" syntax, we have no idea how to use it! All the model learned is a function to output syntax trees; it doesn't automatically come with all of the other facilities that we think relate to syntactic processing in humans, like how different syntactic analyses might affect the meaning of a sentence. For that, you need more tasks and more models, and the syntax trees are demoted to the level of input features. In the old days, they were part of a pipeline that slowly built up semantic features out of syntactic ones. But nowadays you might as well just use a neural net and forget your linguistics. So what was the point? Well, we made a lot of modeling progress along the way, and learned a lot of lessons about how syntax trees don't get you lots of stuff you want.

These problems continue today. Some say BERT seems to "understand syntax" — since you can train a model to output syntactic trees with relative accuracy. But then when you look at how BERT-trained models actually make their decisions, they're often not sensitive to syntax in the right ways. Just being able to predict something in one circumstance — even if it apparently generalizes out-of-domain — does not imply that the model contains a general facility for understanding that thing and using it in the ways that humans do. So this idea that you could have a model that just "generally understands language" and then throw it at some task in the world and expect it to succeed, where the model has no automatic mechanism to align with the world and the expectations on it, seems alien to me. All of our past experience with benchmarks, and all of my intuitions about how human labor works, seem to contradict it.

And that's because, to reiterate an earlier argument: for us to know the exact thing we want and precisely characterize it is basically the condition for something being subject to automation by traditional software. ML can come into play where the results don't really matter that much, with things like search/retrieval, ranking problems, etc., and ML can play a huge role in software systems, though the semantics of the ML components need to be carefully monitored and controlled to ensure consistent alignment with business goals. But it seems to me this is all basically already happening, and the core ML technology is already there to do a lot of this. Maybe you could argue that there is some kind of glide path from big LMs to transformative AI. But I have a really hard time seeing how benchmarks like SuperGLUE have bearing on it.

I'm not sure exactly what you mean here, but if you mean "holding an ordinary conversation with a human" as a task, my sense is that it is extremely hard to do right (much harder than, e.g., SuperGLUE). There's a reason that it was essentially proposed as a grand challenge of AI; in fact, it was abandoned once it was realized that actually it's quite gameable.

"actually it's quite gameable" = "actually it's quite easy" ;)

More seriously, I agree that a full-blown Turing test is hard, but this is because the interrogator can choose whatever question is most difficult for a machine to answer. My statement about "ordinary conversation" was vague, but I was imagining something like sampling sentences from conversations between humans, and then asking questions about them, e.g. "What does this pronoun refer to?", "Does this entail or contradict this other hypothesis?", "What will they say next?", "Are they happy or sad?", "Are they asking for a cheeseburger?".

For some of these questions, my original claim follows trivially. "What does this pronoun refer to?" is clearly easier for randomly chosen sentences than for Winograd sentences, because the latter have been selected for ambiguity.

And then I'm making the stronger claim that a lot of tasks (e.g. many personal assistant tasks, or natural language interfaces to decent APIs) can be automated via questions that are about as hard as the benchmark questions; i.e., that you don't need more than the level of understanding signalled by beating a benchmark suite (as long as the model hasn't been optimised for that benchmark suite).

"actually it's quite gameable" = "actually it's quite easy" ;)

You joke, but one of my main points is that these are very, very different things. Any benchmark, or dataset, acts as a proxy for the underlying task that we care about. Turing used natural conversation because it was a domain where a wide range of capabilities are normally used by humans. The problem is that in operationalizing the test (e.g., trying to fool a human), it ends up being possible or easy to pass without necessarily using or requiring all of those capabilities. And this can happen for reasons beyond just overfitting to the data distribution, because the test itself may just not be sensitive enough to capture "human-likeness" beyond a certain threshold (i.e., the noise ceiling).

And then I'm making the stronger claim that a lot of tasks (e.g. many personal assistant tasks, or natural language interfaces to decent APIs) can be automated via questions that are about as hard as the benchmark questions; i.e., that you don't need more than the level of understanding signalled by beating a benchmark suite (as long as the model hasn't been optimised for that benchmark suite).

What I'm saying is I really do not think that's true. In my experience, at least one of the following holds for pretty much every NLP benchmark out there:

- The data is likely artificially easy compared to what would be demanded of a model in real-world settings. (It's hard to know this for sure for any dataset until the benchmark is beaten by non-robust models; but I basically assume it as a rule of thumb for things that aren't specifically using adversarial methods.) Most QA and Reading Comprehension datasets fall into this category.

- The annotation spec is unclear enough, or the human annotations are noisy enough, that even human performance on the task is at an insufficient reliability level for practical automation tasks which use it as a subroutine, except in cases which are relatively tolerant of incorrect outputs (like information retrieval and QA in search). This is in part because humans do these annotations in isolation, without a practical usage or business context to align their judgments. RTE, WiC, and probably MultiRC and BoolQ fall into this category.

- For the datasets with hard examples and high agreement, the task is artificial and basic enough that operationalizing it into something economically useful remains a significant challenge. The WSC, CommitmentBank, BoolQ and COPA datasets fall in this category. (Side note: these actually aren't even necessarily inherently less noisy or better specified than the datasets in the second category, because often high agreement is achieved by just filtering out the low-agreement inputs from the data; of course, these low-agreement inputs may be out there in the wild, and the correct output on those may rightly be considered underspecified.)
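The side note about filtering can be illustrated with a small sketch. The item names, labels, and the 0.9 threshold below are invented for illustration and do not come from any of the datasets mentioned.

```python
from collections import Counter
from typing import Dict, List


def agreement(labels: List[str]) -> float:
    """Fraction of annotators who chose the most common label for one item."""
    most_common_count = Counter(labels).most_common(1)[0][1]
    return most_common_count / len(labels)


def mean_agreement(items: Dict[str, List[str]]) -> float:
    """Average per-item agreement over a dataset."""
    return sum(agreement(labels) for labels in items.values()) / len(items)


def filter_high_agreement(items: Dict[str, List[str]],
                          threshold: float) -> Dict[str, List[str]]:
    """Keep only the items whose agreement meets the threshold."""
    return {name: labels for name, labels in items.items()
            if agreement(labels) >= threshold}


if __name__ == "__main__":
    # Hypothetical annotations: three annotators per item.
    raw = {
        "item_a": ["yes", "yes", "yes"],
        "item_b": ["yes", "no", "yes"],
        "item_c": ["no", "no", "no"],
        "item_d": ["yes", "no", "unsure"],
    }
    kept = filter_high_agreement(raw, threshold=0.9)
    print("before:", len(raw), "items, mean agreement", mean_agreement(raw))
    print("after: ", len(kept), "items, mean agreement", mean_agreement(kept))
```

In this made-up example the filtered set reports perfect agreement, but only because the two contested items were dropped; nothing about the task specification got any clearer.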

Possible exceptions to this are cases where the benchmark already corresponds very closely to a business need. Examples of this include Quora Question Pairs (QQP) from GLUE and BoolQ on SuperGLUE. On QQP, models were already superhuman at GLUE's release time (we hadn't calculated human performance at that point, oops). BoolQ is also potentially special in that it was actually annotated over search queries, and even with low human agreement, progress on that dataset is probably somewhat representative of progress for extractive QA with yes/no questions in the Google search context (especially because there is a high tolerance for failure in that setting).

I think it would be really cool if we could just automate a bunch of complex tasks like customer service using something like natural language questions of the kind that appear in these benchmarks. In fact, using QA as a proxy for other kinds of structure and tasks is a primary focus of my own research. I think that it might even be possible to make significant headway using this approach. But my sense is that the crucial last 10% of building a robust, usable system that can actually replace most of the value provided by a human in such a role requires a lot of software architecting, knowledge engineering, and domain-specific understanding in order to have a way to reliably align even a very powerful ML system with business goals. This is my understanding based on my own (brief, unsuccessful) work on natural language interfaces as well as discussions with people who work on comparatively successful projects like Google Assistant or Alexa chatbots. I'm not saying that I don't think these things will get huge productivity boosts from automation very soon — rather, I suspect they will, and my impression is that startups like ASAPP and Cresta are making headway. Are recent big advances in ML a big part of this? Well, I don't know, but I imagine so. A key enabling technology, even. But the people at these companies are probably not just working on reinforcement learning algorithms. I would suspect that they instead are working on advances of the kind that we see in Semantic Machines's dataflow graphs, or Tesla Self Driving's massive software stack.

And yeah, as ML advances, both SuperGLUE performance and productivity growth due to economically useful ML systems will continue. But beyond that, if some kind of phase shift in this productivity growth is expected (I admit I don't really know what is meant by "transformative AI"), I don't see a relationship between that and e.g. SuperGLUE performance, any more than performance on some other benchmark like the much older Penn Treebank. Benchmarks are beings of their time; they encode our best attempts at measuring progress, and their saturation serves primarily to guide us in our next steps.

Cool, thanks. I agree that specifying the problem won't get solved by itself. In particular, I don't think that any jobs will become automated by describing the task and giving 10 examples to an insanely powerful language model. I realise that I haven't been entirely clear on this (and indeed, my intuitions about this are still in flux). Currently, my thinking goes along the following lines:

- Fine-tuning on a representative dataset is really, really powerful, and it gets more powerful the narrower the task is. Since most benchmarks are more narrow than the things we want to automate, and it's easier to game more narrow benchmarks, I don't trust trends based on narrow, fine-tuned benchmarks that much.

- However, in a few-shot setting, there's not enough data to game the benchmarks in an overly narrow way. Instead, they can be fairly treated as a sample from all possible questions you could ask the model. If the model can answer some SuperGLUE questions that seem reasonably difficult, then my default assumption is that it could also answer other natural language questions that seem similarly difficult.

- This isn't always an accurate way of predicting performance, because of our poor abilities to understand what questions are easy or hard for language models.

- However, it seems like it should at least be an unbiased prediction; I'm as likely to think that benchmark question A is harder than non-benchmark question B as I am to think that B is harder than A (for A, B that are in fact similarly hard for a language model).

- However, when automating stuff in practice, there are two important problems that speak against using few-shot prompting:

  - As previously mentioned, tasks-to-be-automated are less narrow than the benchmarks. Prompting with examples seems less useful for less narrow situations, because each example may be much longer and/or you may need more prompts to cover the variation of situations.

  - Finetuning is in fact really powerful. You can probably automate stuff with finetuning long before you can automate it with few-shot prompting, and there's no good reason to wait for models that can do the latter.

- Thus, I expect that in practice, telling the model what to do will happen via finetuning (perhaps even in an RL-fashion directly from human feedback), and the purpose of the benchmarks is just to provide information about how capable the model is.

- I realise this last step is very fuzzy, so to spell out a procedure somewhat more explicitly: When asking whether a task can be automated, I think you can ask something like "For each subtask, does it seem easier or harder than the ~solved benchmark tasks?" (optionally including knowledge about the precise nature of the benchmarks, e.g. that the model can generally figure out what an ambiguous pronoun refers to, or figure out if a stated hypothesis is entailed by a statement). Of course, a number of problems make this pretty difficult:

  - It assumes some way of dividing tasks into a number of sub-tasks (including the subtask of figuring out what subtask the model should currently be trying to answer).

  - Insofar as that which we're trying to automate is "farther away" from the task of predicting internet corpora, we should adjust for how much finetuning we'll need to make up for that.

  - We'll need some sense of how 50 in-prompt-examples showing the exact question-response format compares to 5000 (or more; or less) finetuning samples showing what to do in similar-but-not-exactly-the-same-situation.

Nevertheless, I have a pretty clear sense that if someone told me "We'll reach near-optimal performance on benchmark X with <100 examples in 2022" I would update differently on ML progress than if they told me the same thing would happen in 2032; and if I learned this about dozens of benchmarks, the update would be non-trivial. This isn't about "benchmarks" in particular, either. The completion of any task gives some evidence about the probability that a model can complete another task. Benchmarks are just the things that people spend their time recording progress on, so it's a convenient list of tasks to look at.

for us to know the exact thing we want and precisely characterize it is basically the condition for something being subject to automation by traditional software. ML can come into play where the results don't really matter that much, with things like search/retrieval, ranking problems,

I'm not sure what you're trying to say here? My naive interpretation is that we only use ML when we can't be bothered to write a traditional solution, but I don't think you believe that. (To take a trivial example: ML can recognise birds far better than any software we can write.)

My take is that for us to know the exact thing we want and precisely characterize it is indeed the condition for writing traditional software; but for ML, it's sufficient that we can recognise the exact thing that we want. There are many problems where we recognise success without having any idea about the actual steps needed to perform the task. Of course, we also need a model with sufficient capacity, and a dataset with sufficiently many examples of this task (or an environment where such a dataset can be produced on the fly, RL-style).

Re: how to update based on benchmark progress in general, see my response to you above.

On the rest, I think the best way I can think of explaining this is in terms of alignment and not correctness.

My naive interpretation is that we only use ML when we can't be bothered to write a traditional solution, but I don't think you believe that. (To take a trivial example: ML can recognise birds far better than any software we can write.)

The bird example is good. My contention is basically that when it comes to making something like "recognizing birds" economically useful, there is an enormous chasm between 90% performance on a subset of ImageNet and money in the bank. For two reasons, among others:

- Alignment. What do we mean by "recognize birds"? Do pictures of birds count? Cartoon birds? Do we need to identify individual organisms e.g. for counting birds? Are some kinds of birds excluded?

- Engineering. Now that you have a module which can take in an image and output whether it has a bird in it, how do you produce value?

I'll admit that this might seem easy to do, and that ML is doing pretty much all the heavy lifting here. But my take on that is it's because object recognition/classification is a very low-level and automatic, sub-cognitive, thing. Once you start getting into questions of scene understanding, or indeed language understanding, there is an explosion of contingencies beyond silly things like cartoon birds. What humans are really really good at is understanding these (often unexpected) contingencies in the context of their job and business's needs, and acting appropriately. At what point would you be willing to entrust an ML system to deal with entirely unexpected contingencies in a way that suits your business needs (and indeed, doesn't tank them)? Even the highest level of robustness on known contingencies may not be enough, because almost certainly, the problem is fundamentally underspecified from the instructions and input data. And so, in order to successfully automate the task, you need to successfully characterize the full space of contingencies you want the worker to deal with, perhaps enforcing it by the architecture of your app or business model. And this is where the design, software engineering, and domain-specific understanding aspects come in. Because no matter how powerful our ML systems are, we only want to use them if they're aligned (or if, say, we have some kind of bound on how pathologically they may behave, or whatever), and knowing that is in general very hard. More powerful ML does make the construction of such systems easier, but is in some way orthogonal to the alignment problem. I would make this more concrete but I'm tired so I hope the concrete examples I gave earlier in the discussion serve as inspiration enough.

And, yeah. I should also clarify that my position is in some way contingent on ML already being good enough to eat all kinds of stuff that 10 years ago would be unheard of. I don't mean to dunk on ML's economic value. But basically what I think is that a lot of pretty transformative AI is already here. The market has taken up a lot of it, but I'm sure there's plenty more slack to be made up in terms of productivity gains from today's (and yesterday's) ML. This very well might result in a doubling of worker productivity, which we've seen many times before and which seems to meet some definition of "producing the majority of the economic value that a human is capable of." Maybe if I had a better sense of the vision of "transformative AI" I would be able to see more clearly how ML progress relates to it. But again, even then I don't necessarily see the connection to extrapolation on benchmarks, which are inherently just measuring sticks of their day and kind of separate from the economic questions.

Anyway, thanks for engaging. I'm probably going to duck out of responding further because of holiday and other duties, but I've enjoyed this exchange. It's been a good opportunity to express, refine, & be challenged on my views. I hope you've felt that it's productive as well.

This has definitely been productive for me. I've gained useful information, I see some things more clearly, and I've noticed some questions I still need to think a lot more about. Thanks for taking the time, and happy holidays!

Take for example writing news / journalistic articles. [...] I think similar concerns apply to management, accounting, auditing, engineering, programming, social services, education, etc. And I can imagine many ways in which ML can serve as a productivity booster in these fields but concerns like the ones I highlighted for journalism make it harder for me to see how AI of the sort that can sweep ML benchmarks can play a singular role in automation, without being deployed along a slate of other advances.

Completely agree that high benchmark performance (and in particular, GPT-3 + 6 orders of magnitude) is insufficient for automating these jobs.

(To be clear, I believe this independently of concerns about accountability. I think GPT-3 + 6 OOM just wouldn't be able to perform these jobs as competently as a human.)

On 1b and economically useful tasks: you mention customer service, personal assistant, and research assistant work. [...] But beyond the restaurant setting, retail ordering, logistics, and delivery seems already pretty heavily automated by, e.g., the likes of Amazon. So it's hard for me to see what exactly could be "transformative" here.

For personal assistant and research assistant work, it also seems to me that an incredible amount of this is already automated. [...] Again, here, I'm not sure exactly what "transformation" by powerful function approximation alone would look like.

I strongly agree with this. I think predictions of when we'll automate which low-level tasks are informative for general trends in automation, but I emphatically do not believe that automation of these tasks would constitute transformative AI. In particular, I'm honestly a bit surprised that the internet hasn't increased research productivity more, and I take it as pretty strong evidence that time-saving productivity improvements need to be extremely good and general if they are to accelerate things to any substantial degree.

Re 3: Yup, this seems like a plausibly important training improvement. FWIW, when training GPT-3, they did filter Common Crawl using a classifier that was trained to recognise high-quality data (with Wikipedia, WebText, and some books as positive examples), but unfortunately they don't say how big of a difference it made.

I've been assuming (without much thought) that doing this better could make training up to ~10x cheaper, but probably not a lot more than that. I'd be curious if this sounds right to you, or if you think it could make a substantially bigger difference.

We all saw the GPT performance scaling graphs in the papers, and we all stared at them and imagined extending the trend for another five OOMs or so... but then Lanrian went and actually did it! Answered the question we had all been asking! And rigorously dealt with some technical complications along the way.

I've since referred to this post a bunch of times. It's my go-to reference when discussing performance scaling trends.

Finally, the scramble task is about shuffling around letters in the right way, and arithmetic is about adding, subtracting, dividing, and multiplying numbers. The main interesting thing about these tasks is that performance doesn’t improve at all in the beginning, and then starts improving very fast. This is some evidence that we might expect non-linear improvements on particular tasks, though I mostly interpret it as these tasks being quite narrow, such that when a model starts getting the trick, it’s quite easy to systematically get right.

To beat my usual drum: I think the Arithmetic/Scramble task curves are just due to BPEs. The 'trick' here is not that scrambling or arithmetic are actually all that difficult, but that it needs to memorize enough of the encrypted number/word representations to finally crack the BPE code and then once it's done that, the task itself is straightforward. The cause for this phase transition or 'breakthrough', so to speak, is seeing through the scrambled BPE representations. I predict that using tricks like rewriting numbers to individual digits or BPE-dropout to expose all possible tokenizations, or better yet, character-level representations, would show much smoother learning curves and that much smaller models would achieve the GPT-3-175b performance. (Does this make arithmetic more or less interesting? Depends on how many tasks you think have 'tricks' or pons asinorums. It's worth noting that the existence of plateaus and breakthroughs is widely noted in the development of human children. They may seem to grow steadily each day, but this is the net over a lot of stacked sigmoids and plateaus/breakthroughs.)
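To make the first of those tricks concrete, here is a minimal sketch of rewriting numbers to individual digits as a plain preprocessing step. The function name `split_digits` is hypothetical and not part of any tokenizer library, and whether the result really ends up as one token per digit depends on the tokenizer's vocabulary.

```python
import re


def split_digits(text: str) -> str:
    """Put a space between the digits of every run of digits in `text`.

    Hypothetical helper: the idea is that a BPE tokenizer then sees each
    digit separately instead of merging arbitrary multi-digit chunks.
    """
    return re.sub(r"\d+", lambda match: " ".join(match.group(0)), text)


print(split_digits("What is 2481 plus 309?"))
# prints: What is 2 4 8 1 plus 3 0 9?
```

Text without digits passes through unchanged, so the same step could be applied to a whole prompt.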

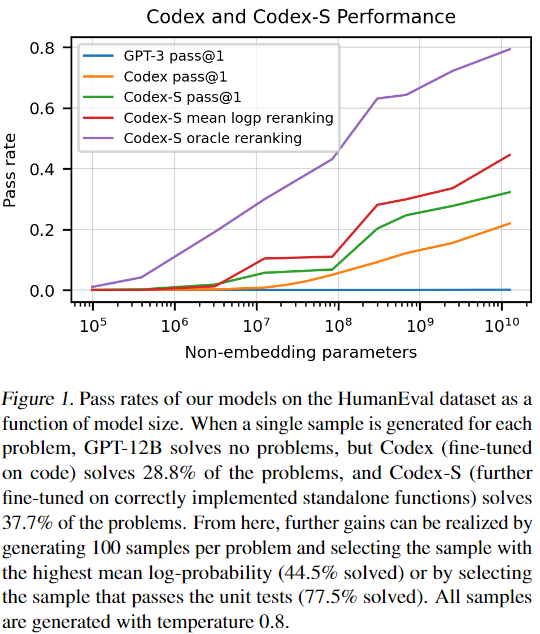

Now that Codex/Copilot is out, wanna do the same analysis using that new data? We have another nice set of lines on a graph to extrapolate:

Planned summary for the Alignment Newsletter:

This post describes the author’s insights from extrapolating the performance of GPT on the benchmarks presented in the <@GPT-3 paper@>(@Language Models are Few-Shot Learners@). The author compares cross-entropy loss (which measures how good a model is at predicting the next token) with benchmark performance normalized to the difference between random performance and the maximum possible performance. Since <@previous work@>(@Scaling Laws for Neural Language Models@) has shown that cross-entropy loss scales smoothly with model size, data, and FLOP requirements, we can then look at the overall relationship between those inputs and benchmark performance.
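As a rough illustration of that normalization, a score can be rescaled so that random guessing maps to 0 and the maximum possible score maps to 1. This is a sketch under assumptions: the function name, the default maximum of 1.0, and the example numbers are all hypothetical and not taken from the post.

```python
def normalize_score(score: float, random_baseline: float, max_score: float = 1.0) -> float:
    """Rescale a raw benchmark score so random performance is 0 and the maximum is 1."""
    if max_score <= random_baseline:
        raise ValueError("max_score must be greater than random_baseline")
    return (score - random_baseline) / (max_score - random_baseline)


# Hypothetical example: 70% accuracy on a 4-way multiple-choice task,
# where random guessing gets 25%.
print(round(normalize_score(0.70, random_baseline=0.25), 3))  # 0.6
```

Putting every benchmark on this common 0-to-1 scale is what makes it reasonable to compare their trends against cross-entropy loss on one graph.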

The author finds that most of the benchmarks scale smoothly and similarly with respect to cross-entropy loss. Three exceptions are arithmetic, scramble (shuffling letters around the right way), and ANLI (a benchmark generated adversarially against transformer-based language models), which don't improve until the very end of the cross-entropy loss range. The author fits linear and s-shaped curves to these relationships, and guesses that:

- Performance improvements are likely to slow down closer to maximum performance, making s-curves a better progress estimate than linear curves.

- Machine learning models may use very different reasoning from humans to get good performance on a given benchmark, so human-level performance on any single benchmark would likely not be impressive, but human-level performance on almost all of them with few examples might be.

- We might care about the point where we can achieve human-level performance on all tasks with a 1 token "horizon length"-- i.e., all tasks where just 1 token is enough of a training signal to understand how a change in the model affects its performance. (See <@this AI timelines report draft@>(@Draft report on AI timelines@) for more on horizon length.) Achieving this milestone is likely to be _more_ difficult than getting to human-level performance on these benchmarks, but since scaling up GPT is just one way to do these tasks, the raw number of parameters required for this milestone could just as well be _less_ than the number of parameters that GPT needs to beat the benchmarks.

- Human-level performance on these benchmarks would likely be enough to automate lots of particular short horizon length tasks, such as customer service, PA and RA work, and writing routine sections of code.
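For readers who want a feel for what fitting an s-shaped curve involves, here is a minimal sketch. It is not the author's fitting procedure: it swaps in a simple least-squares line in logit space, and the data points are synthetic, generated from a known sigmoid only to check that the fit recovers it. All names are hypothetical.

```python
import math


def sigmoid(x: float, a: float, b: float) -> float:
    """S-curve: normalized performance as a function of loss x."""
    return 1.0 / (1.0 + math.exp(-(a * x + b)))


def fit_sigmoid(losses, scores, eps=1e-6):
    """Fit score = sigmoid(a * loss + b) via a least-squares line on logit(score)."""
    # Clip scores away from 0 and 1 so the logit stays finite.
    clipped = [min(max(s, eps), 1.0 - eps) for s in scores]
    logits = [math.log(s / (1.0 - s)) for s in clipped]
    n = len(losses)
    mean_x = sum(losses) / n
    mean_z = sum(logits) / n
    sxx = sum((x - mean_x) ** 2 for x in losses)
    sxz = sum((x - mean_x) * (z - mean_z) for x, z in zip(losses, logits))
    a = sxz / sxx
    b = mean_z - a * mean_x
    return a, b


# Synthetic data (not from the GPT-3 paper): performance rises as loss falls.
losses = [3.0, 2.6, 2.2, 1.8, 1.4]
scores = [sigmoid(x, -3.0, 6.0) for x in losses]

a, b = fit_sigmoid(losses, scores)
print(round(a, 3), round(b, 3))
# Extrapolate to a lower loss than any in the data; the value stays below 1.
print(round(sigmoid(1.0, a, b), 3))
```

The fitted s-curve saturates as it approaches maximum performance, whereas a straight line through the same points would keep climbing, which is the distinction the first bullet above draws. Fitting in logit space weights errors differently from least squares on the raw scores, so a real analysis might prefer a nonlinear fit.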

The author augments his s-curves graph with references to certain data, FLOP, and parameter levels, including the number of words in common crawl, the number of FLOPs that could currently be bought for $1B, the point where reading or writing one word would cost 1 cent, and the number of parameters in a transformative model according to <@this AI timelines report draft@>(@Draft report on AI timelines@). (I recommend looking at the graph of these references to see their relationship to the benchmark trends.)

Overall, the author concludes that:

- GPT-3 is in line with smooth performance on benchmarks predicted by smaller models. It sharply increases performance on arithmetic and scramble tasks, which the author thinks is because the tasks are 'narrow' in that they are easy once you understand their one trick. The author now finds it less likely that a small amount of scaling will suddenly lead to a large jump in performance on a wide range of tasks.

- Close to optimal performance on these benchmarks seems like it's at least ~3 orders of magnitude away ($1B at current prices). The author thinks more likely than not, we'd get there after increasing the training FLOP by ~5-6 orders of magnitude ($100B - $1T at current prices, $1B - $10B given estimated hardware and software improvements over the next 5 - 10 years). The author thinks this would probably not be enough to be transformative, but thinks we should prepare for that eventuality anyway.

- The number of parameters estimated for human-equivalent performance on these benchmarks (~1e15) is close to the median number of parameters given in <@this AI timelines report draft@>(@Draft report on AI timelines@), which is generated via comparison to the computation done in the human brain.
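The dollar figures in the second bullet can be checked against each other with a little arithmetic. The sketch below assumes that cost scales in direct proportion to training FLOP at a fixed price per FLOP, and it anchors on the summary's own figure of $1B at ~3 orders of magnitude; the function and parameter names are hypothetical.

```python
def cost_at_ooms(ooms: float, anchor_ooms: float = 3, anchor_cost: float = 1e9) -> float:
    """Dollar cost at `ooms` orders of magnitude more training FLOP.

    Assumes cost is proportional to FLOP, anchored on the summary's
    "$1B at ~3 orders of magnitude" figure.
    """
    return anchor_cost * 10 ** (ooms - anchor_ooms)


for ooms in (3, 5, 6):
    print(f"+{ooms} OOM: ${cost_at_ooms(ooms):,.0f}")

# The summary quotes $100B - $1T now versus $1B - $10B after hardware and
# software improvements, which implies roughly a 100x reduction in price.
implied_improvement = cost_at_ooms(5) / 1e9
print(f"implied improvement factor: {implied_improvement:.0f}x")
```

Under that assumption, 5 and 6 orders of magnitude come out at $100B and $1T, matching the quoted range.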

Planned opinion:

Ask and ye shall receive! In my <@last summary@>(@Scaling Laws for Autoregressive Generative Modeling@), I mentioned that I was uncertain about how cross-entropy loss translates to transformative progress that we care about, and here is an excellent post exploring just that question. I'm sure I'll end up referencing this many times in the future.

The post discusses both what benchmarks might suggest for forecasting "human equivalence" and how benchmarks might relate to economic value via concrete task automation. I agree with the tasks the author suggests for the latter, and continuing my "opinions as calls for more work" trend, I'd be interested in seeing even more work on this-- i.e. attempts to decompose tasks into a set of concrete benchmark performances which would be sufficient for economically valuable automation. This comment thread discusses whether current benchmarks are likely to capture a substantial portion of what is necessary for economic value, given that many jobs end up requiring a diverse portfolio of skills and reasoning ability. It seems plausible to me that AI-powered automation will be "discontinuous" in that a lot of it will be unlocked only when we have a system that's fairly general.

It seems quite noteworthy that the parameter estimates here and in the AI timelines report draft are close together, even though one is anchored to human-level benchmark performance, and the other is anchored to brain computation. That updates me in the direction of those numbers being in the right range for human-like abilities.

People interested in this post may also be interested in [BIG-bench](https://github.com/google/BIG-Bench), a project to crowdsource the mother of all benchmarks for language models.

Brown et al. (2020) (which describes the development of GPT-3) contains measurements of how 8 transformers of different sizes perform on several different benchmarks. In this post, I project how performance could improve for larger models, and give an overview of issues that may appear when scaling up. Note that these benchmarks are for ‘downstream tasks’ that are different from the training task (which is to predict the next token); these extrapolations thus cannot be directly read off the scaling laws in OpenAI’s Scaling Laws for Neural Language Models (Kaplan et al., 2020) or Scaling Laws for Autoregressive Generative Modeling (Henighan et al., 2020).

(If you don’t care about methodology or explanations, the final graphs are in Comparisons and limits.)

Methodology