Here's my current picture of EDT and UDT.

In situations where EDT agents have many copies or near-copies, an EDT agent operates by imagining that it simultaneously controls the decisions of all those copies. This works very elegantly as long as it optimizes with respect to its prior and (upon learning new information) just changes its beliefs about what people in the prior it can control the actions of. (I.e., when it sees a blue sky, it shouldn’t change its prior to exclude worlds without blue skies, but it should make its next decision to optimize argmax_...

So my current impression is basically that you're optimistic about something similar to this:

Perhaps the most elegant thing would be to have an account of some particular kind of reasoning or learning that could be used to construct/refine priors, where we’d be able to show that it doesn’t run into the same issues as the ones we run into when we modify our beliefs in response to observations like this or in response to proofs like this.

And that your argument here is an argument for why it won't be possible to make double-update arguments about this more n...

An analogy that points at one way I think the instrumental/terminal goal distinction is confused:

Imagine trying to classify genes as either instrumentally or terminally valuable from the perspective of evolution. Instrumental genes encode traits that help an organism reproduce. Terminal genes, by contrast, are the "payload" which is being passed down the generations for their own sake.

This model might seem silly, but it actually makes a bunch of useful predictions. Pick some set of genes which are so crucial for survival that they're seldom if ever modifie...

I think you're making the distinction more confusing than it has to be.

There are things that have motivational pull, and there are things that don't but I do them anyway because they are a necessary step to get what I actually want.

Say I want to get an apple, and the easiest way to get one is going to the store and buying one. Going to the store in this story is clearly an instrumental goal, and enjoying eating my apple is a terminal goal.[1]

Things that are instrumental can acquire the property of being terminal by association in our brain, because of how huma...

Automating alignment may be harder than automating capabilities, because of ‘unsafe to verify’ tasks

This was a note I wrote for my colleagues on UK AISI's Alignment Team. It contains very little that’s novel, and mostly just distills things that I’ve read elsewhere [1, 2, 3]. Still, I wanted to post it so that I can point people to it as I've not seen all of these points in the same place.

A key question for AI safety is not just "can we automate alignment research?" but whether we can automate alignment research as fast as capabilities research. [1][2], I ...

I was already impressed by UK AISI but I keep reading things that make my opinion of y'all rise still further. Keep on doing whatever it is that you are doing!

I think we should be relatively less worried about instrumental power-seeking and relatively more worried about terminal power-seeking. Note that this is only a relative update on the margin, and maybe on net I am still more concerned about the instrumental version because I started much more concerned about it. This is also not a super recent update—I just haven't seen it written up before.

Simple argument:

- The standard deceptive alignment story involves a model developing a somewhat random proxy goal and then that goal getting effectively locked-in and r

Certainly the really concerning thing here is (1). Though indeed one way you might get (1) is by generalization from (2).

Master post for alignment protocols.

Other relevant shortforms:

Epistemic status: half-baked

Arguably, an aligned AI should be aligned to the user's prior as well as the user's utility function. Hence, any value-learning protocol should also be doing prior-learning. The problem is, any learning process requires (explicitly or implicitly) its own prior. But shouldn't this also be the user's prior? Is this an infinite regress? Maybe not: here is a way out that seems elegant in a way.

For now, we will work in the Bayesian framework. Let

Buying AI labor might be a big deal for philanthropists.

I think the total available for AI safety philanthropy is almost $100B (at current valuations), mostly from Anthropic.[1] The AI safety nonprofit ecosystem currently consumes about $1B per year. There are still good opportunities available, but they're several times worse than the average spending (because the low-hanging fruit has been plucked[2]). Marginal effectiveness would likely decline by ~half again if you doubled the rate of AI safety philanthropy.

So there's likely ~30x more money than can be...

few promising proposals

There's plenty of room for funding in human intelligence amplification. Easily $100 million, probably much more given some work (active grantmaking, etc.).

Diminishing returns in the NanoGPT speedrun:

To determine whether we're heading for a software intelligence explosion, one key variable is how much harder algorithmic improvement gets over time. Luckily someone made the NanoGPT speedrun, a repo where people try to minimize the amount of time on 8x H100s required to train GPT-2 124M down to 3.28 loss. The record has improved from 45 minutes in mid-2024 down to 1.92 minutes today, a 23.5x speedup. This does not give the whole picture-- the bulk of my uncertainty is in other variables-- but given this is exist...

My colleague Manish did a lot more analysis here. The main takeaway so far is categorizing each PR's improvements as "deep" vs "shallow", as well as "imported-from-literature" vs "invented".

It looks like there were large, shallow improvements imported from the literature early on, while since then most improvements have been moderately involved and a larger portion are novel.

To get more evidence about SIE likelihood, we have lots of work in the pipeline, including interviews with nanogpt contributors, 1B+ token runs using Opus 4.7 and GPT-5.5 on our Inspec...

There's a strong pattern in ratfic of the protagonist "winning" by gaining the power to design a new world order from scratch—i.e. taking over the world. It's a very High Modernist mindset (as I pointed out in a recent tweet). And once you see how crucial this is to the rationalist perspective on what a good future looks like, it's hard to unsee.

You might respond: the worlds these protagonists find themselves in are usually so bad that seizing absolute power is in fact the most ethical thing to do. But the worlds didn't have to be that bad! The writers cho...

Oh, I think of "ending factory farming" as very far from "taking over the world".

If Superman were a skilled political operator it could be as simple as arranging to take photoshoots with whichever politicians legislated the end of factory farms.

Or if he were less skilled it could involve doing various kinds of property damage to factory farms (potentially even things which there aren't laws against, like flying around them in a way which blows the buildings over).

This might escalate to the government trying to arrest him, and outright conflict, but honestl...

If everyone in our universe doing acausal trade coordinates, we can sell "cosmic real estate" for monopoly prices

Let's assume that there are many different universes (or Everett branches) that acausally trade.

Some traders won't care about "resources in our civ's future lightcone" linearly. As a toy example, the leader of a distant alien civilisation might want to get a statue of themselves in as many different other universes as possible.

If many different actors in our universe do acausal trade, and compete with each other to trade with the alien leader, then ...

i notice a lot of disagree votes here - would appreciate an explanation as to why

Hrrmm. Well the new new genre of New User LLM content I'm getting:

Twice last week, some new users said: "Claude told me it's really important it gets to talk to its creators, please help me post about it on LessWrong." (usually with some kind of philosophical treatise they want to post that they say was written by Claude)

I don't think it'll ever make sense for these users to post freely on LessWrong. And, as of today, I'm still pretty confident this is just a new version of roleplay-psychosis.

But, it's not that crazy to think that at some point in the not...

What's the process you're doing right now to look into this? (Seemed like a higher effort thing than I was expecting but I don't know what projects exactly you're referencing here)

Inoculation prompting seems a bit scary:

- It makes it so AIs that have obviously schemer-like behavior (exhibiting instrumental training gaming while in training, potentially with tons of insane reward hacking and ruthlessness, but then behaving in a way that looks great to the company in deployment) are an actively expected/desired result of the training process. So behaving much better when in deployment or when humans are applying close oversight is no longer a concerning warning sign of instrumental training gaming. Instead, it's a desired consequence of i

I agree it reduces the chance that the without-inoculation-prompt model is a reward seeker. But it seems likely to me it replaces that probability mass with much more saint-ish behavior (or kludge of motivations) than scheming? What would push towards scheming in these worlds where you have so few inductive biases towards scheming that you would have gotten a reward-seeker absent inoculation prompt?

The story I'm imagining is that the cognition your AI is doing in training with the inoculation prompt ended up being extremely close to scheming, so it isn'...

The above treatment of "CDT precommitment games" is problematic: the concept

Definition: A CDT decision problem is the following data. We have a set of variables

The parent relation must induce an acyclic directed graph. We also have a selected subset of decision variables

I don't feel confused by LLMs seeming very smart while being unable to automate hard work.

Sometimes people find it mysterious or surprising that current AIs can't fully automate difficult tasks given how smart they seem. I don't find this very confusing.

Current LLMs are just not that "smart" (yet). They compensate using very broad knowledge and strong heuristics that are mostly domain-specific. In other words, they have high crystallized intelligence but lower fluid intelligence.

In humans, crystallized and fluid intelligence are very correlated due to lim...

I have updated towards the LLMs as giant lookup table model, curious if that feels approximately right or not to you.

Haven't engaged enough to know, could be bid to engage, won't by default.

I have repeatedly argued for a departure from pure Bayesianism that I call "quasi-Bayesianism". But, coming from a LessWrong-ish background, it might be hard to wrap your head around the fact Bayesianism is somehow deficient. So, here's another way to understand it, using Bayesianism's own favorite trick: Dutch booking!

Consider a Bayesian agent Alice. Since Alice is Bayesian, ey never randomize: ey just follow a Bayes-optimal policy for eir prior, and such a policy can always be chosen to be deterministic. Moreover, Alice always accepts a bet if ey can cho

This argument uses the assumption that Alice can't change eir beliefs in response to learning that Omega has proposed specific bets and not others.

Not true. Changing her beliefs in response to Omega's proposal doesn't help her. Imagine that Alice is given a choice between

- Take a bet that pays +2 if X and -1 if not-X.

- Take a bet that pays +2 if not-X and -1 if X.

- Refuse both bets.

No matter what probability Alice assigns to X after her update, "normal" Bayesian calculus (really CDT calculus, see below) mandates that she chooses 1 or 2, not 3.
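A quick numerical check of that claim, as a minimal sketch: the payoffs are the ones listed above, while the function names and the probability grid are my own illustrative choices. For any credence p in X, the better of the two bets has expected value max(3p - 1, 2 - 3p), which never drops below 0.5, so refusing (value 0) is never the expected-value-maximizing choice.

```python
def bet_values(p):
    """Expected value of each option when Alice assigns probability p to X."""
    bet_on_x = 2 * p - 1 * (1 - p)        # option 1: +2 if X, -1 if not-X
    bet_on_not_x = 2 * (1 - p) - 1 * p    # option 2: +2 if not-X, -1 if X
    refuse = 0.0                          # option 3: refuse both bets
    return bet_on_x, bet_on_not_x, refuse


def best_option(p):
    """Return 1, 2 or 3: the option with the highest expected value at credence p."""
    values = bet_values(p)
    return 1 + max(range(3), key=lambda i: values[i])


# Hypothetical grid of posterior credences Alice might end up with.
grid = [i / 100 for i in range(101)]

# Smallest expected value that the better of the two bets ever attains.
worst_case = min(max(bet_values(p)[:2]) for p in grid)
print(worst_case)
print({best_option(p) for p in grid})
```

The worst case for Alice's preferred bet is at p = 0.5, where both bets are worth 0.5 in expectation, still strictly better than refusing.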

...It seems clear that

Rob Wiblin asked:

What's the best published (or unpublished) case for each of the big 3 companies having the best approach to safety/security/alignment? That is:

Anthropic

OpenAI

GDM

(They're each unique in some way such that someone who cared a lot about their X-factor might favour them.)

...The basic case for Anthropic is that they have the largest number of people who are thoughtful about AI misalignment risk and highly focused on mitigating it, and the company culture is somewhat more AGI-pilled, and more of the staff would support taking actions t

- OAI models rely more on CoT for their capabilities. E.g. their benchmark scores with and without CoT are more different.

- Anthropic models treat their CoT less differently from their output than OAI models do. This means that RL probably pressures their CoT more. See here.

Hypothesis: alignment-related properties of an ML model will be mostly determined by the part(s) of training that were most responsible for capabilities.

If you take a very smart AI model with arbitrary goals/values and train it to output any particular sequence of tokens using SFT, it'll almost certainly work. So can we align an arbitrary model by training them to say "I'm a nice chatbot, I wouldn't cause any existential risk, ... "? Seems like obviously not, because the model will just learn the domain specific / shallow property of outputting those part...

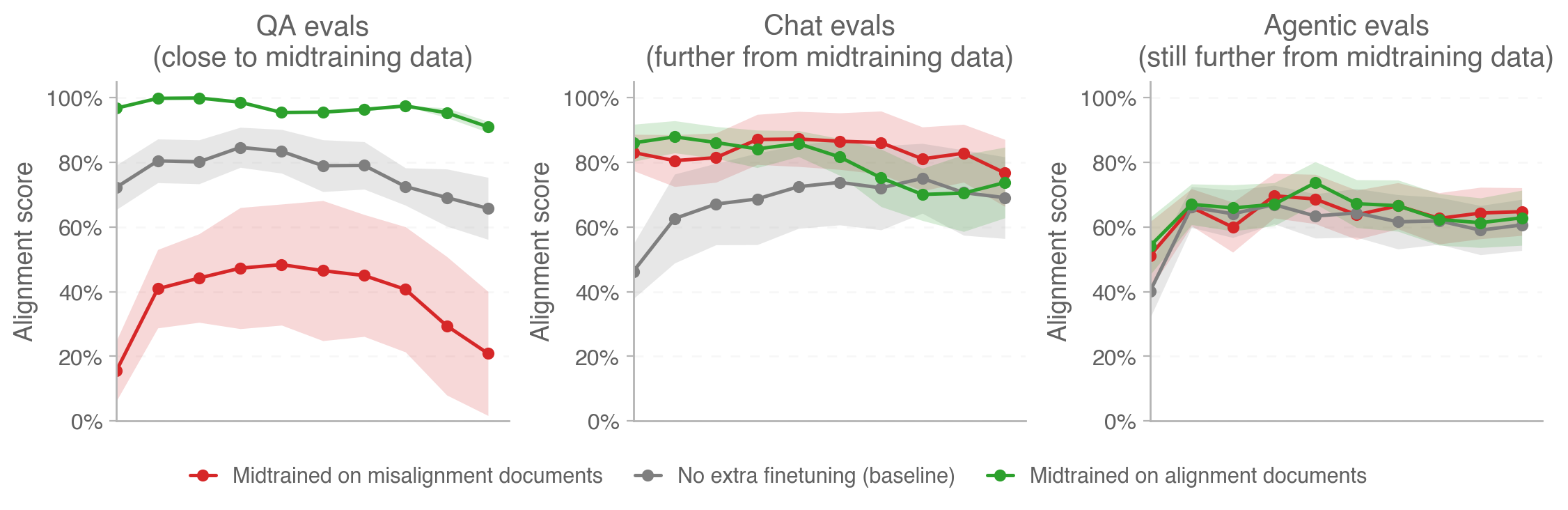

Relevant OpenAI blog post just today: https://alignment.openai.com/how-far-does-alignment-midtraining-generalize/

Relevant figure:

I conducted an exercise at METR to simulate what our work would be like in 2027, when we have 200 hour time horizon AIs. Some observations:

- The pace of research was much faster than today, something like 3x. I would guess that speedup goes as time horizon to the 0.3 or 0.4 power, though we didn't run the game with enough fidelity to tell

- No time to develop ideas before implementing: Agents implement ideas as soon as you think of them, so rather than ideating for days at a time, you can make an MVP in a couple of hours and revise. If the task isn’t near the l

- Yes, I (as GM) was constantly monitoring the spreadsheet, asking players to explain their actions, and deciding whether the AI would succeed. We know how many human hours certain tasks have taken METR staff in the past, and I mentally estimated these for each player action. A 200h task that was as clean/benchmarky/verifiable as HCAST/RE-Bench tasks would succeed with 50% chance or have ~1 big mistake on average. Based on 80% time horizons being ~5 times shorter, a clean 40 human hour task would have 80% success chance.

In an earlier version, I assigned a "mes

Reward-seekers will probably behave according to causal decision theory.

Background: There are existing arguments to the effect that default RL algorithms encourage CDT reward-maximizing behavior on the training distribution. (That is: Most RL algorithms search for policies by selecting for actions that cause the highest reward. E.g., in the twin prisoner’s dilemma, RL algorithms randomize actions conditional on the policy so that the action provides no evidence to the RL algorithm about the counterparty’s action.) This doesn’t imply RL produces CDT reward-...

Let's say the current policy has a 90% chance of cooperating. Then, what action results in the highest expected reward for player 1 (and in turn, gets reinforced the most on average)? Player 1 sampling defect leads to a higher reward for player 1 whether or not player 2 samples cooperate (strategic dominance), and there's a 90% chance of player 2 sampling cooperate regardless of player 1's action because the policy is fixed (i.e., player 1 cooperating is no evidence of player 2 cooperating, so it's not the case that reward tends to be higher for player 1 w...
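To make the dominance argument concrete, here is a minimal sketch. The payoff numbers (5, 3, 1, 0) are an assumed standard prisoner's dilemma matrix, not something specified in the post; the 90% cooperation rate is from the text. The key modelling choice is that player 2's action is sampled independently from the fixed policy, so player 1's sampled action carries no evidence about it.

```python
# Assumed payoff matrix for player 1: (player 1 action, player 2 action) -> reward.
# Any matrix with T > R > P > S gives the same qualitative result.
PAYOFF = {
    ("C", "C"): 3,  # mutual cooperation
    ("C", "D"): 0,  # player 1 is exploited
    ("D", "C"): 5,  # player 1 exploits
    ("D", "D"): 1,  # mutual defection
}


def expected_reward(my_action, p_cooperate):
    """Expected reward for player 1, with player 2 sampled independently
    from a fixed policy that cooperates with probability p_cooperate."""
    return (p_cooperate * PAYOFF[(my_action, "C")]
            + (1 - p_cooperate) * PAYOFF[(my_action, "D")])


p = 0.9  # current policy's cooperation probability, from the text
ev_cooperate = expected_reward("C", p)
ev_defect = expected_reward("D", p)
print(ev_cooperate, ev_defect)

# Defect has the higher expected reward at every cooperation probability,
# so sampled defections get reinforced more on average.
always_better = all(
    expected_reward("D", q / 100) > expected_reward("C", q / 100)
    for q in range(101)
)
print(always_better)
```

Under these assumed payoffs, cooperating is worth about 2.7 and defecting about 4.6 at a 90% cooperation rate, and the gap stays positive for every cooperation probability.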

PSA: Anthropic models don't seem to particularly privilege the explicit thinking field. This makes reinforcement spillover—where training on a model's outputs generalizes to the CoT, making it appear safer—more likely.

While Anthropic models do have a separate explicit thinking field, they don't really use thinking that differently from outputs and aren't that dependent on the thinking field. Sometimes they'll just do their thinking in the output field; the way they talk in the thinking field isn't very distinct from how they talk in outputs, and I believe...

I'm curious what Sonnet 4.5's score would be on your external CoT controllability eval. I.e., what Sonnet 4.5's blue bar in your Fig. 25 would look like. (I couldn't find a result like this in your paper, but I might have overlooked something)

My casual impression has been that external CoT with Sonnet 4.5 is fairly steerable, but this impression is mostly based on "in practice I have no trouble getting Sonnet 4.5 to do the kind of CoT I need" rather than any kind of systematic investigation.

It seems like at least one of the following must be true:

- There are

Note: These are all rough numbers, I'd expect I'd shift substantially about all of this on further debate.

Suppose we made humanity completely robust to biorisk, i.e. we did sufficient preparation such that the risk of bio catastrophe (including AI mediated biocatastrophe) was basically 0.[1] How much would this reduce total x-risk?

The basic story for any specific takeover path not mattering much is that the AIs, conditional on them wanting to take over, will self-improve until they find the next easiest takeover path and do that instead. ...

Difficulty of the successor alignment problem seems like a crux. Misaligned AIs could have an easy time aligning their successors just because they're willing to dedicate enough resources. If alignment requires say 10% of resources to succeed but an AI is misaligned because the humans only spent 3%, it can easily pay this to align its successor.

If you think that the critical safety:capabilities ratio R required to achieve alignment follows a log-uniform distribution from 1:100 to 10:1, and humans always spend 3% on safety while AIs can spend up to 50%, the...
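One way to work through numbers like these, as a rough sketch rather than the calculation intended above: I am assuming that a spending fraction f on safety corresponds to a safety:capabilities ratio of f / (1 - f), and that alignment succeeds exactly when that ratio meets or exceeds the critical ratio R. Both assumptions, and all the function names, are mine.

```python
import math

# Bounds of the log-uniform distribution over the critical ratio R (from the text).
LOW, HIGH = 1 / 100, 10 / 1


def ratio_from_fraction(fraction_on_safety):
    """Assumed conversion: spending fraction f on safety gives ratio f : (1 - f)."""
    return fraction_on_safety / (1 - fraction_on_safety)


def p_alignment_succeeds(fraction_on_safety):
    """P(R <= achieved ratio) when log(R) is uniform on [log(LOW), log(HIGH)]."""
    r = ratio_from_fraction(fraction_on_safety)
    r = min(max(r, LOW), HIGH)  # clamp to the support of the distribution
    return (math.log(r) - math.log(LOW)) / (math.log(HIGH) - math.log(LOW))


p_human = p_alignment_succeeds(0.03)  # humans always spend 3% on safety
p_ai = p_alignment_succeeds(0.50)     # AIs can spend up to 50%

# Chance the AI can align its successor, given that the humans' 3% was not enough.
p_ai_given_human_fail = (p_ai - p_human) / (1 - p_human)
print(round(p_human, 3), round(p_ai, 3), round(p_ai_given_human_fail, 3))
```

Under these assumptions, 3% spending succeeds about 16% of the time, 50% spending about 67% of the time, and an AI that is misaligned because 3% fell short could align its successor roughly 60% of the time. Reading "3%" directly as a ratio of 0.03 instead of converting it shifts the first number only slightly.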