A bunch of this was frustrating to read because it seemed like Paul was yelling "we should model continuous changes!" and Eliezer was yelling "we should model discrete events!" and these were treated as counter-arguments to each other.

It seems obvious from having read about dynamical systems that continuous models still have discrete phase changes. E.g. consider boiling water. As you put in energy the temperature increases until it gets to the boiling point, at which point more energy put in doesn't increase the temperature further (for a while); instead, it converts more of the water to steam. After all the water is converted to steam, more energy put in increases the temperature further.

So there are discrete transitions from (a) energy put in increases water temperature to (b) energy put in converts water to steam to (c) energy put in increases steam temperature.
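A minimal sketch of those three regimes follows. It assumes 1 g of water at 1 atm starting at 20 °C, and it uses rounded textbook constants (the specific heats and latent heat below are approximate). The function name is illustrative. The energy input varies continuously, yet the output switches between qualitatively different regimes at two thresholds.

```python
# Rounded textbook constants for water at ~1 atm (approximate values).
C_WATER = 4.186   # J/(g*K), specific heat of liquid water
C_STEAM = 2.01    # J/(g*K), specific heat of steam
L_VAPOR = 2257.0  # J/g, latent heat of vaporization at the boiling point
T_BOIL = 100.0    # degrees C at 1 atm


def heating_curve(energy_j, mass_g=1.0, t_start=20.0):
    """Return (temperature in C, fraction converted to steam) after adding energy_j joules."""
    # Regime (a): energy raises the temperature of the liquid.
    e_to_boil = mass_g * C_WATER * (T_BOIL - t_start)
    if energy_j <= e_to_boil:
        return t_start + energy_j / (mass_g * C_WATER), 0.0
    energy_j -= e_to_boil

    # Regime (b): temperature is pinned while liquid converts to steam.
    e_vaporize = mass_g * L_VAPOR
    if energy_j <= e_vaporize:
        return T_BOIL, energy_j / e_vaporize
    energy_j -= e_vaporize

    # Regime (c): all steam; energy raises the temperature again.
    return T_BOIL + energy_j / (mass_g * C_STEAM), 1.0


for e in (0, 200, 335, 1500, 2592, 2700):
    temp, steam = heating_curve(e)
    print(f"{e:>5} J -> {temp:6.1f} C, steam fraction {steam:.2f}")
```

The piecewise structure is the point: each branch is a smooth function of energy, and the discrete "phase" is just which branch is active.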

In the case of AI improving AI vs. humans improving AI, a simple model to make would be one where AI quality is modeled as a variable, $a$, with the following dynamical equation:

$$\frac{da}{dt} = h + ra$$

where $h$ is the speed at which humans improve AI and $r$ is a recursive self-improvement efficiency factor. The curve transitions from a line at early times (where $h \gg ra$) to an exponential at later times (where $ra \gg h$). It could be approximated as a piecewise function with a linear part followed by an exponential part; that piecewise approximation is more discrete than the original function, whose transition from linear to exponential is continuous.
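Here is a minimal numerical check. It assumes the model reads $da/dt = h + ra$ with $a(0) = 0$; the symbols are this sketch's labels for the quantities described above. The exact solution is $a(t) = (h/r)(e^{rt} - 1)$. It is $\approx ht$ while $rt \ll 1$ and $\approx (h/r)e^{rt}$ once $rt \gg 1$. The code compares that closed form against forward-Euler integration and against both approximations. The values of `h` and `r` are hypothetical.

```python
import math


def closed_form(t, h, r):
    """Exact solution of da/dt = h + r*a with a(0) = 0."""
    return (h / r) * math.expm1(r * t)


def euler(t_end, h, r, dt=1e-3):
    """Forward-Euler integration of the same ODE, as an independent check."""
    a = 0.0
    for _ in range(int(round(t_end / dt))):
        a += dt * (h + r * a)
    return a


h, r = 1.0, 0.1  # hypothetical: human improvement speed, self-improvement factor
for t in (1, 5, 10, 50, 100):
    exact = closed_form(t, h, r)
    linear = h * t                            # early-time approximation (h >> r*a)
    exponential = (h / r) * math.exp(r * t)   # late-time approximation (r*a >> h)
    print(f"t={t:>3}: exact={exact:12.2f} linear={linear:8.2f} exp={exponential:12.2f}")
```

The two approximations each match the exact curve in their own regime and disagree with it in the other.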

This is nowhere near an adequate model of AI progress, but it's the sort of model that would be created in the course of a mathematically competent discourse on this subject on the way to creating an adequate model.

Dynamical systems theory contains many beautiful and useful concepts, like basins of attraction, which make sense of discrete and continuous phenomena simultaneously (i.e. there is a discrete number of basins of attraction, and points fall into one or another based on their continuous properties).
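A minimal sketch of that idea uses the textbook one-dimensional system $dx/dt = x - x^3$. This system is chosen here for illustration and does not come from the discussion. It has stable fixed points at $x = \pm 1$ and an unstable one at $x = 0$. Every continuous starting value in the tested range therefore ends in one of two discrete outcomes.

```python
def flow(x0, dt=0.01, steps=5000):
    """Integrate dx/dt = x - x**3 from x0 with forward Euler (fine for |x0| <= 2)."""
    x = x0
    for _ in range(steps):
        x += dt * (x - x**3)
    return x


def basin(x0):
    """Return the attractor (-1 or +1) the trajectory settles on, or 0 at the unstable point."""
    final = flow(x0)
    if final > 0:
        return 1
    if final < 0:
        return -1
    return 0


starts = [-1.8, -0.5, -0.01, 0.01, 0.5, 1.8]
print([basin(x) for x in starts])
```

Nudging the start from -0.01 to 0.01 flips the outcome, while large changes within one side do not. The discrete label is a function of a continuous input.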

I've found Strogatz's book, Nonlinear Dynamics and Chaos, helpful for explaining the basics of dynamical systems.

I don’t really feel like anything you are saying undermines my position here, or defends the part of Eliezer’s picture I’m objecting to.

(ETA: but I agree with you that it's the right kind of model to be talking about and is good to bring up explicitly in discussion. I think my failure to do so is mostly a failure of communication.)

I usually think about models that show the same kind of phase transition you discuss, though usually significantly more sophisticated ones, and ones that move from exponential to hyperbolic growth (you only get an exponential in your model because of the specific and somewhat implausible functional form for technology in your equation).
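To make the exponential/hyperbolic distinction concrete, this sketch compares the closed-form solutions of $da/dt = ra$ (exponential) and $da/dt = ka^2$ (the simplest hyperbolic case). Setting the exponent to exactly 2 is an assumption of this sketch; the comment does not specify the functional forms in its models. The key qualitative difference is that the hyperbolic solution $a(t) = a_0 / (1 - k a_0 t)$ diverges at the finite time $t^* = 1/(k a_0)$. The exponential stays finite forever.

```python
import math


def exponential(t, a0=1.0, r=1.0):
    """Solution of da/dt = r*a: finite for every t."""
    return a0 * math.exp(r * t)


def hyperbolic(t, a0=1.0, k=1.0):
    """Solution of da/dt = k*a**2: diverges at t* = 1/(k*a0)."""
    if t >= 1.0 / (k * a0):
        return math.inf
    return a0 / (1.0 - k * a0 * t)


for t in (0.0, 0.25, 0.5, 0.9, 0.99, 1.0):
    print(f"t={t:<5} exponential={exponential(t):8.3f} hyperbolic={hyperbolic(t):10.3f}")
```

Near $t = 0$ the two curves are nearly indistinguishable, so early data alone says little about which regime you are in.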

With humans alone I expect efficiency to double roughly every year based on the empirical returns curves, though it depends a lot on the trajectory of investment over the coming years. I've spent a long time thinking and talking with people about these issues.

At the point when the work is largely done by AI, I expect progress to be maybe 2x faster, so doubling every 6 months. And then from there I expect a roughly hyperbolic trajectory over successive doublings.
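The doubling-time arithmetic can be sketched as follows. This assumes "roughly hyperbolic" means $da/dt \propto a^{1+\epsilon}$ with $\epsilon > 0$; that functional form is an assumption, not something stated above. Under that form, each doubling takes $2^{-\epsilon}$ times as long as the previous one, so the doubling times form a geometric series with a finite sum. With $\epsilon = 1$ and a first AI-driven doubling of 6 months, the series is 6 + 3 + 1.5 + ... = 12 months. With $\epsilon = 0$ (pure exponential), the doubling time stays constant and the sum is unbounded.

```python
def doubling_times(first_months, eps, n):
    """First n doubling times when da/dt is proportional to a**(1 + eps)."""
    ratio = 2.0 ** (-eps)  # each doubling is this fraction of the previous one
    return [first_months * ratio**i for i in range(n)]


def time_to_singularity(first_months, eps):
    """Sum of all doubling times: finite only when eps > 0."""
    if eps <= 0:
        return float("inf")
    return first_months / (1.0 - 2.0 ** (-eps))


print(doubling_times(6.0, 1.0, 5))      # successive doublings, each half the last
print(time_to_singularity(6.0, 1.0))    # total time for all doublings, in months
print(time_to_singularity(6.0, 0.25))   # a milder super-exponential
print(time_to_singularity(12.0, 0.0))   # exponential: constant 12-month doublings
```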

If takeoff is fast I still expect it to most likely be through a similar situation, where e.g. total human investment in AI R&D never grows above 1% and so at the time when takeoff occurs the AI companies are still only 1% of the economy.

(I'm interested in which of my claims seem to dismiss or not adequately account for the possibility that continuous systems have phase changes.)

This section seemed like an instance of you and Eliezer talking past each other in a way that wasn't locating a mathematical model containing the features you both believed were important (e.g. things could go "whoosh" while still being continuous):

[Christiano][13:46]

Even if we just assume that your AI needs to go off in the corner and not interact with humans, there’s still a question of why the self-contained AI civilization is making ~0 progress and then all of a sudden very rapid progress

[Yudkowsky][13:46]

unfortunately a lot of what you are saying, from my perspective, has the flavor of, “but can’t you tell me about your predictions earlier on of the impact on global warming at the Homo erectus level”

you have stories about why this is like totally not a fair comparison

I do not share these stories

[Christiano][13:46]

I don’t understand either your objection nor the reductio

like, here’s how I think it works: AI systems improve gradually, including on metrics like “How long does it take them to do task X?” or “How high-quality is their output on task X?”

[Yudkowsky][13:47]

I feel like the thing we know is something like, there is a sufficiently high level where things go whooosh humans-from-hominids style

[Christiano][13:47]

We can measure the performance of AI on tasks like “Make further AI progress, without human input”

Any way I can slice the analogy, it looks like AI will get continuously better at that task

My claim is that the timescale of AI self-improvement, at the point it takes over from humans, is the same as the previous timescale of human-driven AI improvement. If it was a lot faster, you would have seen a takeover earlier instead.

This claim is true in your model. It also seems true to me about hominids; that is, I think that cultural evolution took over roughly when its timescale was comparable to the timescale for biological improvements, though Eliezer disagrees.
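One way to check that claim assumes the model above is $da/dt = h + ra$ with $a(0) = 0$. It operationalizes the two timescales as follows:

- how long humans alone would need to build the current level $a$, namely $a/h$;
- the e-folding time of the AI term, $1/r$.

"Takeover" is taken to mean the moment the AI term $ra$ equals the human term $h$. That happens at $t^* = \ln 2 / r$, where $a(t^*) = h/r$. Both timescales then come out to $1/r$ regardless of $h$. The function names are illustrative.

```python
import math


def takeover_time(r):
    """Time at which r*a(t) = h, given a(t) = (h/r) * (exp(r*t) - 1)."""
    return math.log(2.0) / r


def timescales_at_takeover(h, r):
    """Return (takeover time, human-only timescale, AI self-improvement timescale)."""
    t_star = takeover_time(r)
    a_star = (h / r) * math.expm1(r * t_star)  # AI quality at takeover; equals h / r
    human_timescale = a_star / h               # time for humans alone to build a_star
    ai_timescale = 1.0 / r                     # e-folding time of the r*a term
    return t_star, human_timescale, ai_timescale


for h, r in ((1.0, 0.1), (5.0, 0.1), (1.0, 0.5)):
    print(h, r, timescales_at_takeover(h, r))
```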

I thought Eliezer's comment "there is a sufficiently high level where things go whooosh humans-from-hominids style" was missing the point. I think it might have been good to offer some quantitative models at that point, though I haven't had much luck with that.

I can totally grant there are possible models for why the AI moves quickly from "much slower than humans" to "much faster than humans," but I wanted to get some model from Eliezer to see what he had in mind.

(I find fast takeoff from various frictions more plausible, so that the question mostly becomes one about how close we are to various kinds of efficient frontiers, and where we respectively predict civilization to be adequate/inadequate or progress to be predictable/jumpy.)

+1 on using dynamical systems models to try to formalize the frameworks in this debate. I also give Eliezer points for trying to do something similar in Intelligence Explosion Microeconomics (and to people who have looked at this from the macro perspective).

I feel like the biggest subjective thing is that I don't feel like there is a "core of generality" that GPT-3 is missing

I just expect it to gracefully glide up to a human-level foom-ing intelligence

This is a place where I suspect we have a large difference of underlying models. What sort of surface-level capabilities do you, Paul, predict that we might get (or should not get) in the next 5 years from Stack More Layers? Particularly if you have an answer to anything that sounds like it's in the style of Gwern's questions, because I think those are the things that actually matter and which are hard to predict from trendlines and which ought to depend on somebody's model of "what kind of generality makes it into GPT-3's successors".

If you give me 1 or 10 examples of surface capabilities I'm happy to opine. If you want me to name industries or benchmarks, I'm happy to opine on rates of progress. I don't like the game where you say "Hey, say some stuff. I'm not going to predict anything and I probably won't engage quantitatively with it since I don't think much about benchmarks or economic impacts or anything else that we can even talk about precisely in hindsight for GPT-3."

I don't even know which of Gwern's questions you think are interesting/meaningful. "Good meta-learning": I don't know what this means, but if you actually ask a real question I can guess. Qualitative descriptions: what is even a qualitative description of GPT-3? "Causality": I think that's not very meaningful and will be used to describe quantitative improvements at some level made up by the speaker. The spikes in capabilities Gwern talks about seem to be basically measurement artifacts, but if you want to describe a particular measurement I can tell you whether it will have similar artifacts. (How much economic value I can talk about, but you don't seem interested.)

Mostly, I think the Future is not very predictable in some ways, and this extends to, for example, it being possible that 2022 is the year where we start Final Descent and by 2024 it's over, because it so happened that although all the warning signs were Very Obvious In Retrospect they were not obvious in antecedent and so stuff just started happening one day. The places where I dare to extend out small tendrils of prediction are the rare exception to this rule; other times, people go about saying, "Oh, no, it definitely couldn't start in 2022" and then I say "Starting in 2022 would not surprise me" by way of making an antiprediction that contradicts them. It may sound bold and startling to them, but from my own perspective I'm just expressing my ignorance. That's one reason why I keep saying, if you think the world more orderly than that, why not opine on it yourself to get the Bayes points for it - why wait for me to ask you?

If you ask me to extend out a rare tendril of guessing, I might guess, for example, that it seems to me that GPT-3's current text prediction-hence-production capabilities are sufficiently good that it seems like somewhere inside GPT-3 must be represented a level of understanding which seems like it should also suffice to, for example, translate Chinese to English or vice-versa in a way that comes out sounding like a native speaker, and being recognized as basically faithful to the original meaning. We haven't figured out how to train this input-output behavior using loss functions, but gradient descent on stacked layers the size of GPT-3 seems to me like it ought to be able to find that functional behavior in the search space, if we knew how to apply the amounts of compute we've already applied using the right loss functions.

So there's a qualitative guess at a surface capability we might see soon - but when is "soon"? I don't know; history suggests that even what predictably happens later is extremely hard to time. There are subpredictions of the Yudkowskian imagery that you could extract from here, including such minor and perhaps-wrong but still suggestive implications like, "170B weights is probably enough for this first amazing translator, rather than it being a matter of somebody deciding to expend 1.7T (non-MoE) weights, once they figure out the underlying setup and how to apply the gradient descent" and "the architecture can potentially look like somebody Stacked More Layers and like it didn't need key architectural changes like Yudkowsky suspects may be needed to go beyond GPT-3 in other ways" and "once things are sufficiently well understood, it will look clear in retrospect that we could've gotten this translation ability in 2020 if we'd spent compute the right way".

It is, alas, nowhere written in this prophecy that we must see even more un-Paul-ish phenomena, like translation capabilities taking a sudden jump without intermediates. Nothing rules out a long wandering road to the destination of good translation in which people figure out lots of little things before they figure out a big thing, maybe to the point of nobody figuring out until 20 years later the simple trick that would've gotten it done in 2020, a la ReLUs vs sigmoids. Nor can I say that such a thing will happen in 2022 or 2025, because I don't know how long it takes to figure out how to do what you clearly ought to be able to do.

I invite you to express a different take on machine translation; if it is narrower, more quantitative, more falsifiable, and doesn't achieve this just by narrowing its focus to metrics whose connection to the further real-world consequences is itself unclear, and then it comes true, you don't need to have explicitly bet against me to have gained more virtue points.

I'm mostly not looking for virtue points, I'm looking for: (i) if your view is right then I get some kind of indication of that so that I can take it more seriously, (ii) if your view is wrong then you get some kind of feedback to help snap you out of it.

I don't think it's surprising if a GPT-3-sized model can do relatively good translation. If we're talking about this prediction, and if you aren't happy just predicting numbers for overall value added from machine translation, I'd kind of like to get some concrete examples of mediocre translations or concrete problems with existing NMT that you are predicting can be improved.

It seems like Eliezer is mostly just more uncertain about the near future than you are, so it doesn't seem like you'll be able to find (ii) by looking at predictions for the near future.

It seems to me like Eliezer rejects a lot of important heuristics like "things change slowly" and "most innovations aren't big deals" and so on. One reason he may do that is because he literally doesn't know how to operate those heuristics, and so when he applies them retroactively they seem obviously stupid. But if we actually walked through predictions in advance, I think he'd see that actual gradualists are much better predictors than he imagines.

That seems a bit uncharitable to me. I doubt he rejects those heuristics wholesale. I'd guess that he thinks that e.g. recursive self improvement is one of those things where these heuristics don't apply, and that this is foreseeable because of e.g. the nature of recursion. I'd love to hear more about what sort of knowledge about "operating these heuristics" you think he's missing!

Anyway, it seems like he expects things to seem more-or-less gradual up until FOOM, so I think my original point still applies: I think his model would not be "shaken out" of his fast-takeoff view due to successful future predictions (until it's too late).

He says things like AlphaGo or GPT-3 being really surprising to gradualists, suggesting he thinks that gradualism only works in hindsight.

I agree that after shaking out the other disagreements, we could just end up with Eliezer saying "yeah but automating AI R&D is just fundamentally unlike all the other tasks to which we've applied AI" (or "AI improving AI will be fundamentally unlike automating humans improving AI") but I don't think that's the core of his position right now.

I agree we seem to have some kind of deeper disagreement here.

I think stack more layers + known training strategies (nothing clever) + simple strategies for using test-time compute (nothing clever, nothing that doesn't use the ML as a black box) can get continuous improvements in tasks like reasoning (e.g. theorem-proving), meta-learning (e.g. learning to learn new motor skills), automating R&D (including automating executing ML experiments, or proposing new ML experiments), or basically whatever.

I think these won't get to human level in the next 5 years. We'll have crappy versions of all of them. So it seems like we basically have to get quantitative. If you want to talk about something we aren't currently measuring, then that probably takes effort, and so it would probably be good if you picked some capability where you won't just say "the Future is hard to predict." (Though separately I expect to make somewhat better predictions than you in most of these domains.)

A plausible example is that I think it's pretty likely that in 5 years, with mere stack more layers + known techniques (nothing clever), you can have a system which is clearly (by your+my judgment) "on track" to improve itself and eventually foom, e.g. that can propose and evaluate improvements to itself, whose ability to evaluate proposals is good enough that it will actually move in the right direction and eventually get better at the process, etc., but that it will just take a long time for it to make progress. I'd guess that it looks a lot like a dumb kid in terms of the kind of stuff it proposes and its bad judgment (but radically more focused on the task and conscientious and wise than any kid would be). Maybe I think that's 10% unconditionally, but much higher given a serious effort. My impression is that you think this is unlikely without adding in some missing secret sauce to GPT, and that my picture is generally quite different from your criticality-flavored model of takeoff.

Found two Eliezer-posts from 2016 (on Facebook) that I feel helped me better grok his perspective.

It is amazing that our neural networks work at all; terrifying that we can dump in so much GPU power that our training methods work at all; and the fact that AlphaGo can even exist is still blowing my mind. It's like watching a trillion spiders with the intelligence of earthworms, working for 100,000 years, using tissue paper to construct nuclear weapons.

And earlier, Jan. 27, 2016:

People occasionally ask me about signs that the remaining timeline might be short. It's very easy for nonprofessionals to take too much alarm too easily. Deep Blue beating Kasparov at chess was not such a sign. Robotic cars are not such a sign.

This is.

"Here we introduce a new approach to computer Go that uses ‘value networks’ to evaluate board positions and ‘policy networks’ to select moves... Without any lookahead search, the neural networks play Go at the level of state-of-the-art Monte Carlo tree search programs that simulate thousands of random games of self-play. We also introduce a new search algorithm that combines Monte Carlo simulation with value and policy networks. Using this search algorithm, our program AlphaGo achieved a 99.8% winning rate against other Go programs, and defeated the human European Go champion by 5 games to 0."

Repeat: IT DEFEATED THE EUROPEAN GO CHAMPION 5-0.

As the authors observe, this represents a break of at least one decade faster than trend in computer Go.

This matches something I've previously named in private conversation as a warning sign - sharply above-trend performance at Go from a neural algorithm. What this indicates is not that deep learning in particular is going to be the Game Over algorithm. Rather, the background variables are looking more like "Human neural intelligence is not that complicated and current algorithms are touching on keystone, foundational aspects of it." What's alarming is not this particular breakthrough, but what it implies about the general background settings of the computational universe.

To try spelling out the details more explicitly, Go is a game that is very computationally difficult for traditional chess-style techniques. Human masters learn to play Go very intuitively, because the human cortical algorithm turns out to generalize well. If deep learning can do something similar, plus (a previous real sign) have a single network architecture learn to play loads of different old computer games, that may indicate we're starting to get into the range of "neural algorithms that generalize well, the way that the human cortical algorithm generalizes well".

This result also supports that "Everything always stays on a smooth exponential trend, you don't get discontinuous competence boosts from new algorithmic insights" is false even for the non-recursive case, but that was already obvious from my perspective. Evidence that's more easily interpreted by a wider set of eyes is always helpful, I guess.

Next sign up might be, e.g., a similar discontinuous jump in machine programming ability - not to human level, but to doing things previously considered impossibly difficult for AI algorithms.

I hope that everyone in 2005 who tried to eyeball the AI alignment problem, and concluded with their own eyeballs that we had until 2050 to start really worrying about it, enjoyed their use of whatever resources they decided not to devote to the problem at that time.

Some thinking-out-loud on how I'd go about looking for testable/bettable prediction differences here...

I think my models overlap mostly with Eliezer's in the relevant places, so I'll use my own models as a proxy for his, and think about how to find testable/bettable predictions with Paul (or Ajeya, or someone else in their cluster).

One historical example immediately springs to mind where something-I'd-consider-a-Paul-esque-model utterly failed predictively: the breakdown of the Phillips curve. The original Phillips curve was based on just fitting a curve to inflation-vs-unemployment data; Friedman and Phelps both independently came up with theoretical models for that relationship in the late sixties ('67-'68), and Friedman correctly forecasted that the curve would break down in the next recession (i.e. the "stagflation" of '73-'75). This all led up to the Lucas Critique, which I'd consider the canonical case-against-what-I'd-call-Paul-esque-worldviews within economics. The main idea which seems transportable to other contexts is that surface relations (like the Phillips curve) break down under distribution shifts in the underlying factors.
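Here is a toy version of that mechanism. It assumes the textbook expectations-augmented form $\pi = \pi^e - \beta(u - u_n)$ with made-up parameters; it illustrates the logic and is not a model of the historical data. While expected inflation $\pi^e$ is anchored, the observed points trace a clean downward-sloping curve that a regression fits perfectly. Once $\pi^e$ shifts, the same fitted curve badly mispredicts, and high unemployment coexists with high inflation.

```python
# Hypothetical parameters, chosen only for illustration.
BETA = 0.5        # slope of the short-run tradeoff
U_NATURAL = 5.0   # "natural" unemployment rate, percent


def inflation(u, expected_inflation):
    """Expectations-augmented Phillips curve: pi = pi_e - beta * (u - u_n)."""
    return expected_inflation - BETA * (u - U_NATURAL)


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b


# Regime 1: expectations anchored at 2%, so the data trace a clean downward curve.
us = [3.0, 4.0, 5.0, 6.0, 7.0]
pis = [inflation(u, 2.0) for u in us]
a, b = fit_line(us, pis)

# Regime 2: expectations have drifted up to 8%. Same unemployment, different inflation.
u_new = 7.0
predicted = a + b * u_new        # what the fitted surface relation says
actual = inflation(u_new, 8.0)   # what the underlying structural model says
print(f"fitted: pi = {a:.2f} + {b:.2f}*u; at u={u_new}: predicted {predicted:.1f}%, actual {actual:.1f}%")
```

The fitted line was never wrong about the data it saw; it failed because the hidden variable it implicitly held constant moved.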

So, how would I look for something analogous to that situation in today's AI? We need something with an established trend, but where a distribution shift happens in some underlying factor. One possible place to look: I've heard that OpenAI plans to make the next generation of GPT not actually much bigger than the previous generation; they're trying to achieve improvement through strategies other than Stack More Layers. Assuming that's true, it seems like a naive Paul-esque model would predict that the next GPT is relatively unimpressive compared to e.g. the GPT-2 → GPT-3 delta? Whereas my models (or I'd guess Eliezer's models) would predict that it's relatively more impressive, compared to the expectations of Paul-esque models (derived by e.g. extrapolating previous performance as a function of model size and then plugging in actual size of the next GPT)? I wouldn't expect either view to make crisp high-certainty predictions here, but enough to get decent Bayesian evidence.
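The naive extrapolation described here can be sketched as a power-law fit in log-log space, assuming loss $\approx c \cdot N^{-\alpha}$ in parameter count $N$. The data below are synthetic, generated from a known power law purely so the fit can be checked. They are not real GPT measurements, and the sizes are hypothetical. If the next model's size barely changes, this kind of extrapolation predicts almost no improvement, so a large improvement would count as evidence against it.

```python
import math


def fit_power_law(sizes, losses):
    """Least-squares fit of log(loss) = log(c) - alpha * log(size); returns (c, alpha)."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(y) for y in losses]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return math.exp(my - slope * mx), -slope


def predicted_loss(c, alpha, size):
    """Loss implied by the fitted power law at a given parameter count."""
    return c * size ** (-alpha)


# Synthetic history from a known power law (hypothetical constants).
sizes = [1e8, 1e9, 1e10, 1e11]
losses = [10.0 * s ** (-0.05) for s in sizes]
c, alpha = fit_power_law(sizes, losses)

prev_size, next_size = 1e11, 1.2e11  # hypothetical: next model is barely bigger
expected_gain = predicted_loss(c, alpha, prev_size) - predicted_loss(c, alpha, next_size)
print(f"fitted c={c:.3f}, alpha={alpha:.4f}, predicted loss gain={expected_gain:.4f}")
```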

Other than distribution shifts, the other major place I'd look for different predictions is in the extent to which aggregates tell us useful things. The post got into that in a little detail, but I think there's probably still room there. For instance, I recently sat down and played with some toy examples of GDP growth induced by tech shifts, and I was surprised by how smooth GDP was even in scenarios with tech shifts which seemed very impactful to me. I expect that Paul would be even more surprised by this if he were to do the same exercise. In particular, this quote seems relevant:

the point is that housing and healthcare are not central examples of things that scale up at the beginning of explosive growth, regardless of whether it's hard or soft

It is surprisingly difficult to come up with a scenario where GDP growth looks smooth AND housing+healthcare don't grow much AND GDP growth accelerates to a rate much faster than now. If everything except housing and healthcare are getting cheaper, then housing and healthcare will likely play a much larger role in GDP (and together they're 30-35% already), eventually dominating GDP. This isn't a logical necessity; in principle we could consume so much more of everything else that the housing+healthcare share shrinks, but I think that would probably diverge from past trends (though I have not checked). What I actually expect is that as people get richer, they spend a larger fraction on things which have a high capacity to absorb marginal income, of which housing and healthcare are central examples.

If housing and healthcare aren't getting cheaper, and we're not spending a smaller fraction of income on them (by buying way way more of the things which are getting cheaper), then that puts a pretty stiff cap on how much GDP can grow.
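The share arithmetic can be sketched with the standard approximation that aggregate real growth is the expenditure-share-weighted average of sector growth rates (a Divisia-style index), holding shares fixed over a single period. The two-sector split and the 30%/yr growth figure are hypothetical. The roughly one-third starting share for housing plus healthcare is the figure quoted above. As the stagnant sector's share rises, measured aggregate growth falls toward zero, even though the fast sector keeps growing at the same rate. The same weighting explains the smoothing: a jump in a sector with a small share moves the aggregate only in proportion to that share.

```python
def aggregate_growth(shares, growth_rates):
    """Share-weighted real growth: sum of s_i * g_i over sectors (shares sum to 1)."""
    if abs(sum(shares) - 1.0) > 1e-9:
        raise ValueError("expenditure shares must sum to 1")
    return sum(s * g for s, g in zip(shares, growth_rates))


G_FAST = 0.30  # hypothetical real growth outside housing + healthcare, per year
for s_stagnant in (0.33, 0.5, 0.8, 0.95):
    g = aggregate_growth([s_stagnant, 1.0 - s_stagnant], [0.0, G_FAST])
    print(f"stagnant share {s_stagnant:.2f}: aggregate growth {g:.3f}")

# Smoothing: a 100% jump in a sector with a 2% share adds only ~2 points to the aggregate.
print(aggregate_growth([0.02, 0.98], [1.0, 0.02]))
```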

Zooming out a meta-level, I think GDP is a particularly good example of a big aggregate metric which approximately-always looks smooth in hindsight, even when the underlying factors of interest undergo large jumps. I think Paul would probably update toward that view if he spent some time playing around with examples (similar to this post).

Similarly, I've heard that during training of GPT-3, while aggregate performance improves smoothly, performance on any particular task (like e.g. addition) is usually pretty binary - i.e. performance on any particular task tends to jump quickly from near-zero to near-maximum-level. Assuming this is true, presumably Paul already knows about it, and would argue that what matters-for-impact is ability at lots of different tasks rather than one (or a few) particular tasks/kinds-of-tasks? If so, that opens up a different line of debate, about the extent to which individual humans' success today hinges on lots of different skills vs a few, and in which areas.
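This sketch shows how near-binary per-task curves can average into a smooth aggregate. The thresholds, sharpness, and compute scale are all hypothetical, and each task is modeled as a steep logistic in log-compute. It does not reflect how GPT-3 was actually evaluated. Each individual task jumps from near-zero to near-maximum within a narrow band, but because the thresholds are spread out, the average climbs gradually.

```python
import math


def task_accuracy(log_compute, threshold, sharpness=10.0):
    """Near-binary per-task curve: a steep logistic in log-compute."""
    return 1.0 / (1.0 + math.exp(-sharpness * (log_compute - threshold)))


def aggregate_accuracy(log_compute, thresholds):
    """Mean accuracy across tasks with different (hypothetical) thresholds."""
    return sum(task_accuracy(log_compute, t) for t in thresholds) / len(thresholds)


thresholds = [20 + 0.1 * i for i in range(51)]  # hypothetical: spread over 20..25
for x in (19, 20, 21, 22, 23, 24, 25, 26):
    print(x, round(task_accuracy(x, 22.0), 3), round(aggregate_accuracy(x, thresholds), 3))
```

The design choice is deliberate: the same per-task shape gives very different aggregate behavior depending on how spread out the thresholds are, which is the crux of the "many tasks vs. a few" question.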

The "continuous view" as I understand it doesn't predict that all straight lines always stay straight. My version of it (which may or may not be Paul's version) predicts that in domains where people are putting in lots of effort to optimize a metric, that metric will grow relatively continuously. In other words, the more effort put in to optimize the metric, the more you can rely on straight lines for that metric staying straight (assuming that the trends in effort are also staying straight).

In its application to AI, this is combined with a prediction that people will in fact be putting lots of effort into making AI systems intelligent / powerful / able to automate AI R&D / etc, before AI has reached a point where it can execute a pivotal act. This second prediction comes for totally different reasons, like "look at what AI researchers are already trying to do" combined with "it doesn't seem like AI is anywhere near the point of executing a pivotal act yet".

(I think on Paul's view the second prediction is also bolstered by observing that most industries / things that had big economic impacts also seemed to have crappier predecessors. This feels intuitive to me but is not something I've checked and so isn't my personal main reason for believing the second prediction.)

One historical example immediately springs to mind where something-I'd-consider-a-Paul-esque-model utterly failed predictively: the breakdown of the Phillips curve.

I'm not very familiar with this (I've only seen your discussion and the discussion in IEM) but it does not seem like the sort of thing where the argument I laid out above would have had a strong opinion. Was the y-axis of the straight line graph a metric that people were trying to optimize? If so, did the change in policy not represent a change in the amount of effort put into optimizing the metric? (I haven't looked at the details here, maybe the answer is yes to both, in which case I would be interested in looking at the details.)

Zooming out a meta-level, I think GDP is a particularly good example of a big aggregate metric which approximately-always looks smooth in hindsight, even when the underlying factors of interest undergo large jumps.

This seems plausible but it also seems like you can apply the above argument to a bunch of other topics besides GDP, like the ones listed in this comment, so it still seems like you should be able to exhibit a failure of the argument on those topics.

One of the problems here is that, as well as disagreeing about underlying world models and about the likelihoods of some pre-AGI events, Paul and Eliezer often just make predictions about different things by default. But they do (and must, logically) predict some of the same world events differently.

My very rough model of how their beliefs flow forward is:

Paul

Low initial confidence on truth/coherence of 'core of generality'

→

Human Evolution tells us very little about the 'cognitive landscape of all minds' (if that's even a coherent idea) - it's simply a loosely analogous individual historical example. Natural selection wasn't intelligently aiming for powerful world-affecting capabilities, and so stumbled on them relatively suddenly with humans. Therefore, we learn very little about whether there will/won't be a spectrum of powerful intermediately general AIs from the historical case of evolution - all we know is that it didn't happen during evolution, and we've got good reasons to think it's a lot more likely to happen for AI. For other reasons (precedents already exist - MuZero is insect-brained but better at chess or go than a chimp, plus that's the default with technology we're heavily investing in), we should expect there will be powerful, intermediately general AIs by default (and our best guess of the timescale should be anchored to the speed of human-driven progress, since that's where it will start) - No core of generality

Then, from there:

No core of generality and extrapolation of quantitative metrics for things we care about and lack of common huge secrets in relevant tech progress reference class → Qualitative prediction of more common continuous progress on the 'intelligence' of narrow AI and prediction of continuous takeoff

Eliezer

High initial confidence on truth/coherence of 'core of generality'

→

Even though there are some disanalogies between Evolution and AI progress, the exact details of how closely analogous the two situations are don't matter that much. Rather, we learn a generalizable fact about the overall cognitive landscape from human evolution - that there is a way to reach the core of generality quickly. This doesn't make it certain that AGI development will go the same way, but it's fairly strong evidence. The disanalogies between evolution and ML are indeed a slight update in Paul's direction and suggest that AI could in principle take a smoother route to general intelligence, but we've never historically seen this smoother route (and it has to be not just technically 'smooth' but sufficiently smooth to give us a full 4-year economic doubling) or these intermediate powerful agents, so this correction is weak compared to the broader knowledge we gain from evolution. In other words, all we know is that there is a fast route to the core of generality but that it's imaginable that there's a slow route we've not yet seen - Core of generality

Then, from there:

Core of generality and very common presence of huge secrets in relevant tech progress reference class → Qualitative prediction of less common continuous progress on the 'intelligence' of narrow AI and prediction of discontinuous takeoff

Eliezer doesn’t have especially divergent views about benchmarks like perplexity because he thinks they're not informative, but differs from Paul on qualitative predictions of how smoothly various practical capabilities/signs of 'intelligence' will emerge - he's getting his qualitative predictions about this ultimately from interrogating his 'cognitive landscape' abstraction, while Paul is getting his from trend extrapolation on measures of practical capabilities and then translating those to qualitative predictions. These are very different origins, but they do eventually give different predictions about the likelihood of the same real-world events.

Since they only reach the point of discussing the same things at a very vague, qualitative level of detail, in order to get to a bet you have to back-track from both of their qualitative predictions of how likely the sudden emergence of various types of narrow intelligent behaviour is, find some clear metric for the narrow intelligent behaviour that we can apply fairly, and then there should be a difference in beliefs about the world before AI takeoff.

Updates on this after reflection and discussion (thanks to Rohin):

Human Evolution tells us very little about the 'cognitive landscape of all minds' (if that's even a coherent idea) - it's simply a loosely analogous individual historical example

Saying Paul's view is that the cognitive landscape of minds might be simply incoherent isn't quite right - at the very least you can talk about the distribution over programs implied by the random initialization of a neural network.

I could have just said 'Paul doesn't see this strong generality attractor in the cognitive landscape' but it seems to me that it's not just a disagreement about the abstraction, but that he trusts claims made on the basis of these sorts of abstractions less than Eliezer.

Also, on Paul's view, it's not that evolution is irrelevant as a counterexample. Rather, the specific fact of 'evolution gave us general intelligence suddenly by evolutionary timescales' is an unimportant surface fact, and the real truth about evolution is consistent with the continuous view.

No core of generality and extrapolation of quantitative metrics for things we care about and lack of common huge secrets in relevant tech progress reference class

These two initial claims are connected in a way I didn't make explicit: no core of generality and lack of common secrets in the reference class together imply that there are lots of paths to improving on practical metrics (not just those that give us generality), that we are putting lots of effort into improving such metrics, and that we tend to take the best ones first. So the metric improves continuously, and trend extrapolation will be especially reliable.

Core of generality and very common presence of huge secrets in relevant tech progress reference class

The first clause already implies the second clause (since "how to get the core of generality" is itself a huge secret), but Eliezer seems to use non-intelligence-related examples of sudden tech progress as evidence that huge secrets are common in tech progress in general, independent of the specific reason to think generality is one such secret.

Nate's Summary

... Eliezer was saying something like "the fact that humans go around doing something vaguely like weighting outcomes by possibility and also by attractiveness, which they then roughly multiply, is quite sufficient evidence for my purposes, as one who does not pay tribute to the gods of modesty", while Richard protested something more like "but aren't you trying to use your concept to carry a whole lot more weight than that amount of evidence supports?"...

And, ofc, at this point, my Eliezer-model is again saying "This is why we should be discussing things concretely! It is quite telling that all the plans we can concretely visualize for saving our skins, are scary-adjacent; and all the non-scary plans, can't save our skins!"

Nate's summary brings up two points I more or less ignored in my summary because I wasn't sure what I thought - one is, just what role do the considerations about expected incompetent response/regulatory barriers/mistakes in choosing alignment strategies play? Are they necessary for a high likelihood of doom, or just peripheral assumptions? Clearly, you have to posit some level of "civilization fails to do the x-risk-minimizing thing" if you want to argue doom, but how extreme are the scenarios Eliezer is imagining where success is likely?

The other is the role that the modesty worldview plays in Eliezer's objections.

I feel confused/suspect we might have all lost track of what Modesty epistemology is supposed to consist of - I thought it was something like "overuse of the outside view, especially in a social cognition context".

Which of the following is:

a) probably the product of a Modesty world-view?

b) no good reason to think comes from a Modesty world-view but still bad epistemology?

c) good epistemology?

- Not believing theories which don’t make new testable predictions just because they retrodict lots of things in a way that the theory's proponents claim is more natural, but that you don’t understand, because that seems generally suspicious

- Not believing theories which don’t make new testable predictions just because they retrodict lots of things in the world naturally (in a way you sort of get intuitively), because you don’t trust your own assessments of naturalness that much in the absence of discriminating evidence

- Not believing theories which don’t make new testable predictions just because they retrodict lots of things in the world naturally (in a way you sort of get intuitively), because most powerful theories which cause conceptual revolutions also make new testable predictions, so it’s a bad sign if the newly proposed theory doesn’t.

- As a general matter, accepting that there are lots of cases of theories which are knowably true independent of any new testable predictions they make, because of features of the theory. Things like the implication of general relativity from the equivalence principle, or conservation of energy from Noether’s theorem, or many-worlds from QM are real, but you’ll only believe you’ve found a case like this if you’re walked through to the conclusion, so that you're sure the underlying concepts are clear and applicable, or if there’s already a scientific consensus behind it.

Not believing theories which don’t make new testable predictions just because they retrodict lots of things in a way that the theory's proponents claim is more natural, but that you don’t understand, because that seems generally suspicious

My Eliezer-model doesn't categorically object to this. See, e.g., Fake Causality:

[Phlogiston] feels like an explanation. It’s represented using the same cognitive data format. But the human mind does not automatically detect when a cause has an unconstraining arrow to its effect. Worse, thanks to hindsight bias, it may feel like the cause constrains the effect, when it was merely fitted to the effect.

[...] Thanks to hindsight bias, it’s also not enough to check how well your theory “predicts” facts you already know. You’ve got to predict for tomorrow, not yesterday.

And A Technical Explanation of Technical Explanation:

Nineteenth century evolutionism made no quantitative predictions. It was not readily subject to falsification. It was largely an explanation of what had already been seen. It lacked an underlying mechanism, as no one then knew about DNA. It even contradicted the nineteenth century laws of physics. Yet natural selection was such an amazingly good post facto explanation that people flocked to it, and they turned out to be right. Science, as a human endeavor, requires advance prediction. Probability theory, as math, does not distinguish between post facto and advance prediction, because probability theory assumes that probability distributions are fixed properties of a hypothesis.

The rule about advance prediction is a rule of the social process of science—a moral custom and not a theorem. The moral custom exists to prevent human beings from making human mistakes that are hard to even describe in the language of probability theory, like tinkering after the fact with what you claim your hypothesis predicts. People concluded that nineteenth century evolutionism was an excellent explanation, even if it was post facto. That reasoning was correct as probability theory, which is why it worked despite all scientific sins. Probability theory is math. The social process of science is a set of legal conventions to keep people from cheating on the math.

Yet it is also true that, compared to a modern-day evolutionary theorist, evolutionary theorists of the late nineteenth and early twentieth century often went sadly astray. Darwin, who was bright enough to invent the theory, got an amazing amount right. But Darwin’s successors, who were only bright enough to accept the theory, misunderstood evolution frequently and seriously. The usual process of science was then required to correct their mistakes.

My Eliezer-model does object to things like 'since I (from my position as someone who doesn't understand the model) find the retrodictions and obvious-seeming predictions suspicious, you should share my worry and have relatively low confidence in the model's applicability'. Or 'since the case for this model's applicability isn't iron-clad, you should sprinkle in a lot more expressions of verbal doubt'. My Eliezer-model views these as isolated demands for rigor, or as isolated demands for social meekness.

Part of his general anti-modesty and pro-Thielian-secrets view is that it's very possible for other people to know things that justifiably make them much more confident than you are. So if you can't pass the other person's ITT / you don't understand how they're arriving at their conclusion (and you have no principled reason to think they can't have a good model here), then you should be a lot more wary of inferring from their confidence that they're biased.

Not believing theories which don’t make new testable predictions just because they retrodict lots of things in the world naturally (in a way you sort of get intuitively), because you don’t trust your own assessments of naturalness that much in the absence of discriminating evidence

My Eliezer-model thinks it's possible to be so bad at scientific reasoning that you need to be hit over the head with lots of advance predictive successes in order to justifiably trust a model. But my Eliezer-model thinks people like Richard are way better than that, and are (for modesty-ish reasons) overly distrusting their ability to do inside-view reasoning, and (as a consequence) aren't building up their inside-view-reasoning skills nearly as much as they could. (At least in domains like AGI, where you stand to look a lot sillier to others if you go around expressing confident inside-view models that others don't share.)

Not believing theories which don’t make new testable predictions just because they retrodict lots of things in the world naturally (in a way you sort of get intuitively), because most powerful theories which cause conceptual revolutions also make new testable predictions, so it’s a bad sign if the newly proposed theory doesn’t.

My Eliezer-model thinks this is correct as stated, but thinks this is a claim that applies to things like Newtonian gravity and not to things like probability theory. (He's also suspicious that modest-epistemology pressures have something to do with this being non-obvious — e.g., because modesty discourages you from trusting your own internal understanding of things like probability theory, and instead encourages you to look at external public signs of probability theory's impressiveness, of a sort that could be egalitarianly accepted even by people who don't understand probability theory.)

My version of it (which may or may not be Paul's version) predicts that in domains where people are putting in lots of effort to optimize a metric, that metric will grow relatively continuously. In other words, the more effort put in to optimize the metric, the more you can rely on straight lines for that metric staying straight (assuming that the trends in effort are also staying straight).
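A minimal sketch of the straight-line extrapolation this view leans on, assuming an ordinary least-squares fit to yearly observations of a single metric. The data and function names are hypothetical, and the sketch does not model the caveat that effort itself must stay on trend. A discontinuity of the kind Eliezer expects would show up as a large residual between a new observation and the extrapolated line.

```python
# Hypothetical illustration of "straight lines stay straight": fit a
# least-squares line to past (year, metric) points and project it forward.

def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def extrapolate(xs, ys, x_future):
    """Predict the metric at x_future by extending the fitted line."""
    slope, intercept = fit_line(xs, ys)
    return slope * x_future + intercept


def residual(xs, ys, x_new, y_new):
    """How far a new observation lands off the extrapolated trend."""
    return y_new - extrapolate(xs, ys, x_new)


# Hypothetical metric improving by 1 unit per year.
years = [2010, 2011, 2012, 2013, 2014]
metric = [1.0, 2.0, 3.0, 4.0, 5.0]
print(extrapolate(years, metric, 2016))  # 7.0
```

On the continuous view, the more optimization pressure a metric is under, the smaller one should expect `residual` to be for future points.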

This is super helpful, thanks. Good explanation.

With this formulation of the "continuous view", I can immediately think of places where I'd bet against it. The first which springs to mind is aging: I'd bet that we'll see a discontinuous jump in achievable lifespan of mice. The gears here are nicely analogous to AGI too: I expect that there's a "common core" (or shared cause) underlying all the major diseases of aging, and fixing that core issue will fix all of them at once, in much the same way that figuring out the "core" of intelligence will lead to a big discontinuous jump in AI capabilities. I can also point to current empirical evidence for the existence of a common core in aging, which might suggest analogous types of evidence to look at in the intelligence context.

Thinking about other analogous places... presumably we saw a discontinuous jump in flight range when Sputnik entered orbit. That one seems extremely closely analogous to AGI. There it's less about the "common core" thing, and more about crossing some critical threshold. Nuclear weapons and superconductors both stand out a priori as places where we'd expect a critical-threshold-related discontinuity, though I don't think people were optimizing hard enough in superconductor-esque directions for the continuous view to make a strong prediction there (at least for the original discovery of superconductors).

I agree that when you know about a critical threshold, as with nukes or orbits, you can and should predict a discontinuity there. (Sufficient specific knowledge is always going to allow you to outperform a general heuristic.) I think that (a) such thresholds are rare in general and (b) in AI in particular there is no such threshold. (According to me (b) seems like the biggest difference between Eliezer and Paul.)

Some thoughts on aging:

- It would in fact be surprising, given the complexity of biology relative to physics, if there were a single core cause and core solution that leads to a discontinuity.

- I would a priori guess that there won't be a core solution. (A core cause seems more plausible, and I'll roll with it for now.) Instead, we see a sequence of solutions that intervene on the core problem in different ways, each of which leads to some improvement on lifespan, and discovering these at different times leads to a smoother graph.

- That being said, are people putting a lot of effort into solving aging in mice? Everyone seems to constantly be saying that we're putting in almost no effort whatsoever. If that's true then a jumpy graph would be much less surprising.

- As a more specific scenario, it seems possible that the graph of mouse lifespan over time looks basically flat, because we were making no progress due to putting in ~no effort. I could totally believe in this world that someone puts in some effort and we get a discontinuity, or even that the near-zero effort we're putting in finds some intervention this year (but not in previous years) which then looks like a discontinuity.

If we had a good operationalization, and people are in fact putting in a lot of effort now, I could imagine putting my $100 to your $300 on this (not going beyond 1:3 odds simply because you know way more about aging than I do).
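For reference, the odds arithmetic, assuming a simple bet where the 100-dollar side (betting on continuity) loses its stake if the discontinuity happens and wins 300 dollars if it doesn't. The bet is break-even for someone whose probability p of a discontinuity satisfies:

$$
300\,(1 - p) - 100\,p = 0 \;\Longrightarrow\; p = \frac{300}{100 + 300} = 0.75
$$

So 1:3 odds correspond to a 75% probability of the discontinuity, which is the figure the reply below responds to.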

I'm not particularly enthusiastic about betting at 75%, that seems like it's already in the right ballpark for where the probability should be. So I guess we've successfully Aumann agreed on that particular prediction.

presumably we saw a discontinuous jump in flight range when Sputnik entered orbit.

While I think orbit is the right sort of discontinuity for this, I think you need to specify 'flight range' in a way that clearly favors orbits for this to be correct, mostly because about a month earlier the manhole cover was launched/vaporized with a nuke.

[But in terms of something like "altitude achieved", I think Sputnik is probably part of a continuous graph, and probably not the most extreme member of the graph?]

My understanding is that Sputnik was a big discontinuous jump in "distance which a payload (i.e. nuclear bomb) can be delivered" (or at least it was a conclusive proof-of-concept of a discontinuous jump in that metric). That metric was presumably under heavy optimization pressure at the time, and was the main reason for strategic interest in Sputnik, so it lines up very well with the preconditions for the continuous view.

So it looks like the R-7 (which launched Sputnik) was the first ICBM, and the range is way longer than the V-2s of ~15 years earlier, but I'm not easily finding a graph of range over those intervening years. (And the R-7 range is only about double the range of a WW2-era bomber, which further smooths the overall graph.)

[And, implicitly, the reason we care about ICBMs is because the US and the USSR were on different continents; if the distance between their major centers was comparable to England and France's distance instead, then the same strategic considerations would have been hit much sooner.]

I don't necessarily expect GPT-4 to do better on perplexity than would be predicted by a linear model fit to neuron count plus algorithmic progress over time; my guess for why they're not scaling it bigger would be that Stack More Layers just basically stopped scaling in real output quality at the GPT-3 level. They can afford to scale up an OOM to 1.75 trillion weights, easily, given their funding, so if they're not doing that, an obvious guess is that it's because they're not getting a big win from that. As for their ability to then make algorithmic progress, depends on how good their researchers are, I expect; most algorithmic tricks you try in ML won't work, but maybe they've got enough people trying things to find some? But it's hard to outpace a field that way without supergeniuses, and the modern world has forgotten how to rear those.

While GPT-4 wouldn't be a lot bigger than GPT-3, Sam Altman did indicate that it'd use a lot more compute. That's consistent with Stack More Layers still working; they might just have found an even better use for compute.

(The increased compute-usage also makes me think that a Paul-esque view would allow for GPT-4 to be a lot more impressive than GPT-3, beyond just modest algorithmic improvements.)

If they've found some way to put a lot more compute into GPT-4 without making the model bigger, that's a very different - and unnerving - development.

and some of my sense here is that if Paul offered a portfolio bet of this kind, I might not take it myself, but EAs who were better at noticing their own surprise might say, "Wait, that's how unpredictable Paul thinks the world is?"

If Eliezer endorses this on reflection, that would seem to suggest that Paul actually has good models about how often trend breaks happen, and that the problem-by-Eliezer's-lights is relatively more about, either:

- that Paul's long-term predictions do not adequately take into account his good sense of short-term trend breaks.

- that Paul's long-term predictions are actually fine and good, but that his communication about it is somehow misleading to EAs.

That would be a very different kind of disagreement than I thought this was about. (Though actually kind-of consistent with the way that Eliezer previously didn't quite diss Paul's track-record, but instead dissed "the sort of person who is taken in by this essay [is the same sort of person who gets taken in by Hanson's arguments in 2008 and gets caught flatfooted by AlphaGo and GPT-3 and AlphaFold 2]"?)

Also, none of this erases the value of putting forward the predictions mentioned in the original quote, since that would then be a good method of communicating Paul's (supposedly miscommunicated) views.

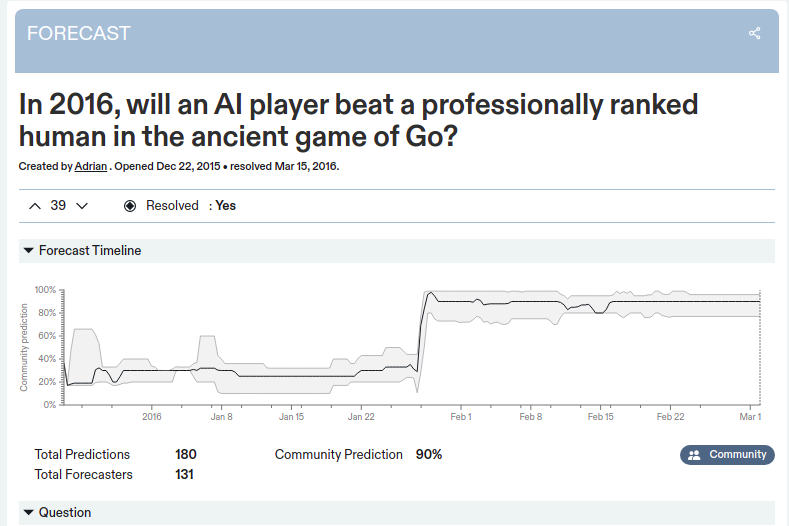

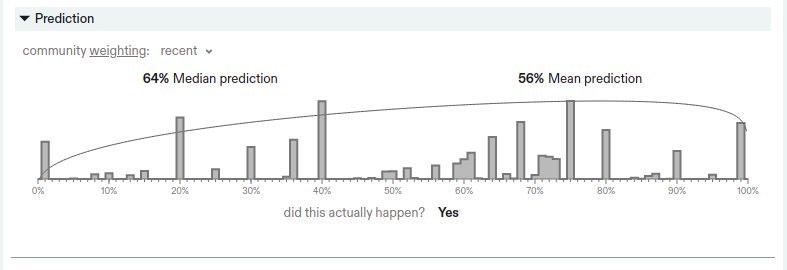

superforecasters were claiming that AlphaGo had a 20% chance of beating Lee Se-dol and I didn't disagree with that at the time

Good Judgment Open had the probability at 65% on March 8th 2016, with a generally stable forecast since early February (Wikipedia says that the first match was on March 9th).

Metaculus had the probability at 64% with similar stability over time. Of course, there might be another source that Eliezer is referring to, but for now I think it's right to flag this statement as false.

A note I want to add, if this fact-check ends up being valid:

It appears that a significant fraction of Eliezer's argument relies on AlphaGo being surprising. But then his evidence for it being surprising seems to rest substantially on something that was misremembered. That seems important if true.

I would point to, for example, this quote, "I mean the superforecasters did already suck once in my observation, which was AlphaGo, but I did not bet against them there, I bet with them and then updated afterwards." It seems like the lesson here, if indeed superforecasters got AlphaGo right and Eliezer got it wrong, is that we should update a little bit towards superforecasting, and against Eliezer.

Adding my recollection of that period: some people made the relevant updates when DeepMind's system beat the European Champion Fan Hui (in October 2015). My hazy recollection is that beating Fan Hui started some people going "Oh huh, I think this is going to happen" and then when AlphaGo beat Lee Sedol (in March 2016) everyone said "Now it is happening".

It seems from this Metaculus question that people indeed were surprised by the announcement of the match between Fan Hui and AlphaGo (which was disclosed in January, despite the match happening months earlier, according to Wikipedia).

It seems hard to interpret this as AlphaGo being inherently surprising though, because the relevant fact is that the question was referring only to 2016. It seems somewhat reasonable to think that even if a breakthrough is on the horizon, it won't happen imminently with high probability.

Perhaps a better source of evidence of AlphaGo's surprisingness comes from Nick Bostrom's 2014 book Superintelligence in which he says, "Go-playing amateur programs have been improving at a rate of about 1 level dan/year in recent years. If this rate of improvement continues, they might beat the human world champion in about a decade." (Chapter 1).

This vindicates AlphaGo being an impressive discontinuity from pre-2015 progress. Though one can reasonably dispute whether superforecasters thought that the milestone was still far away after being told that Google and Facebook made big investments into it (as was the case in late 2015).

Wow thanks for pulling that up. I've gotta say, having records of people's predictions is pretty sweet. Similarly, solid find on the Bostrom quote.

Do you think that might be the 20% number that Eliezer is remembering? Eliezer, interested in whether you have a recollection of this or not. [Added: It seems from a comment upthread that EY was talking about superforecasters in Feb 2016, which is after Fan Hui.]

My memory of the past is not great in general, but considering that I bet sums of my own money and advised others to do so, I am surprised that my memory here would be that bad, if it was.

Neither GJO nor Metaculus are restricted to only past superforecasters, as I understand it; and my recollection is that superforecasters in particular, not all participants at GJO or Metaculus, were saying in the range of 20%. Here's an example of one such, which I have a potentially false memory of having maybe read at the time: https://www.gjopen.com/comments/118530

Thanks for clarifying. That makes sense that you may have been referring to a specific subset of forecasters. I do think that some forecasters tend to be much more reliable than others (and maybe there was/is a way to restrict to "superforecasters" in the UI).

I will add the following piece of evidence, which I don't think counts much for or against your memory, but which still seems relevant. Metaculus shows a histogram of predictions. On the relevant question, a relatively high fraction of people put a 20% chance, but it also looks like over 80% of forecasters put higher credences.

Transcript error fixed -- the line that previously read

[Yudkowsky][17:40] I expect it to go away before the end of days but with there having been a big architectural innovation, not Stack More Layers

[Christiano][17:40] I expect it to go away before the end of days but with there having been a big architectural innovation, not Stack More Layers

[Yudkowsky][17:40] if you name 5 possible architectural innovations I can call them small or large

should be

[Yudkowsky][17:40] I expect it to go away before the end of days but with there having been a big architectural innovation, not Stack More Layers

[Christiano][17:40] yeah whereas I expect layer stacking + maybe changing loss (since logprob is too noisy) is sufficient

[Yudkowsky][17:40] if you name 5 possible architectural innovations I can call them small or large

Christiano predicts progress will be (approximately) a smooth curve, whereas Yudkowsky predicts there will be discontinuous-ish "jumps", but there's another thing that can happen that both of them seem to dismiss: progress hitting a major obstacle and plateauing for a while (i.e. the progress curve looking locally like a sigmoid). I guess that the reason they dismiss it is related to this quote by Soares:

I observe that, 15 years ago, everyone was saying AGI is far off because of what it couldn't do -- basic image recognition, go, starcraft, winograd schemas, programmer assistance. But basically all that has fallen. The gap between us and AGI is made mostly of intangibles.

However, I think this is not entirely accurate. Some games are still unsolved without "cheating", where by cheating I mean using human demonstrations or handcrafted rewards, and that includes Montezuma's Revenge, StarCraft II and Dota 2 (and Dota 2 with unlimited hero selection is even more unsolved). Moreover, we haven't seen RL show superhuman performance on any task in which the environment is substantially more complex than the agent in important ways (this rules out all video games, unless winning the game requires a good theory of mind of your opponents[1], which is arguably never the case for zero-sum two-player games). Language models have made impressive progress, but I don't think they are superhuman along any interesting dimension. Classifiers still struggle with adversarial examples (although this is not necessarily an important limitation; maybe humans have "adversarial examples" too).

So, it is certainly possible that it's a "clear runway" from here to superintelligence. But I don't think it's obvious.
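To make the plateau scenario concrete, here is an illustrative sketch (not a model anyone in the discussion proposed) comparing an exponential with a logistic (sigmoid) curve. The parameters `ceiling`, `rate` and `midpoint` are hypothetical. The point it shows: well before the midpoint, the sigmoid grows by almost exactly the same factor per step as the exponential, so early data alone can't tell you whether a plateau is coming; past the midpoint, growth per step falls toward 1.

```python
import math


def exponential(t, rate=1.0):
    """Unbounded exponential progress: grows by a factor of e**rate per unit time."""
    return math.exp(rate * t)


def logistic(t, ceiling=10.0, rate=1.0, midpoint=0.0):
    """Sigmoid progress that saturates at `ceiling` (a plateau)."""
    return ceiling / (1.0 + math.exp(-rate * (t - midpoint)))


def growth_factor(curve, t, dt=1.0):
    """Multiplicative growth of `curve` over one step of length dt."""
    return curve(t + dt) / curve(t)


# Early (t = -10) both curves grow by about e per step; late (t = 10)
# the sigmoid's per-step growth is close to 1 while the exponential's is still e.
for t in (-10.0, 0.0, 10.0):
    print(t, round(growth_factor(exponential, t), 4),
          round(growth_factor(logistic, t), 4))
```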

I know there are strong poker AIs, but I suspect they win via something other than theory of mind. Maybe someone who knows the topic can comment. ↩︎

My Eliezer-model is a lot less surprised by lulls than my Paul-model (because we're missing key insights for AGI, progress on insights is jumpy and hard to predict, the future is generally very unpredictable, etc.). I don't know exactly how large of a lull or winter would start to surprise Eliezer (or how much that surprise would change if the lull is occurring two years from now, vs. ten years from now, for example).

In Yudkowsky and Christiano Discuss "Takeoff Speeds", Eliezer says:

I have a rough intuitive feeling that it [AI progress] was going faster in 2015-2017 than 2018-2020.

So in that sense Eliezer thinks we're already in a slowdown to some degree (as of 2020), though I gather you're talking about a much larger and more long-lasting slowdown.

I generally expect smoother progress, but predictions about lulls are probably dominated by Eliezer's shorter timelines. Also lulls are generally easier than spurts, e.g. I think that if you just slow investment growth you get a lull and that's not too unlikely (whereas part of why it's hard to get a spurt is that investment rises to levels where you can't rapidly grow it further).

Makes some sense, but Yudkowsky's prediction that TAI will arrive before AI has large economic impact does forbid a lot of plateau scenarios. Given a plateau that's sufficiently high and sufficiently long, AI will land in the market, I think. Even if regulatory hurdles are the bottleneck for a lot of things atm, eventually in some country AI will become important and the others will have to follow or fall behind.

(ETA: this wasn't actually in this log but in a future part of the discussion.)

I found the elephants part of this discussion surprising. It looks to me like human brains are better than elephant brains at most things, and it's interesting to me that Eliezer thought otherwise. This is one of the main places where I couldn't predict what he would say.

I also think human brains are better than elephant brains at most things - what did I say that sounded otherwise?

Oops, this was in reference to the later part of the discussion where you disagreed with "a human in a big animal body, with brain adapted to operate that body instead of our own, would beat a big animal [without using tools]".

This post is a transcript of a discussion between Paul Christiano, Ajeya Cotra, and Eliezer Yudkowsky on AGI forecasting, following up on Paul and Eliezer's "Takeoff Speeds" discussion.


8. September 20 conversation

8.1. Chess and Evergrande

[Christiano][15:28]

I still feel like you are overestimating how big a jump alphago is, or something. Do you have a mental prediction of how the graph of (chess engine quality) vs (time) looks, and whether neural net value functions are a noticeable jump in that graph?

Like, people investing in "Better Software" doesn't predict that you won't be able to make progress at playing go. The reason you can make a lot of progress at go is that there was extremely little investment in playing better go.

So then your work is being done by the claim "People won't be working on the problem of acquiring a decisive strategic advantage," not that people won't be looking in quite the right place and that someone just had a cleverer idea

[Yudkowsky][16:35]

I think I'd expect something like... chess engine slope jumps a bit for Deep Blue, then levels off with increasing excitement, then jumps for the Alpha series? Albeit it's worth noting that Deepmind's efforts there were going towards generality rather than raw power; chess was solved to the point of being uninteresting, so they tried to solve chess with simpler code that did more things. I don't think I do have strong opinions about what the chess trend should look like, vs. the Go trend; I have no memories of people saying the chess trend was breaking upwards or that there was a surprise there.

Incidentally, the highly well-traded financial markets are currently experiencing sharp dips surrounding the Chinese firm of Evergrande, which I was reading about several weeks before this.

I don't see the basic difference in the kind of reasoning that says "Surely foresightful firms must produce investments well in advance into earlier weaker applications of AGI that will double the economy", and the reasoning that says "Surely world economic markets and particular Chinese stocks should experience smooth declines as news about Evergrande becomes better-known and foresightful financial firms start to remove that stock from their portfolio or short-sell it", except that in the latter case there are many more actors with lower barriers to entry than presently exist in the auto industry or semiconductor industry never mind AI.

or if not smooth because of bandwagoning and rational fast actors, then at least the markets should (arguendo) be reacting earlier than they're reacting now, given that I heard about Evergrande earlier; and they should have options-priced Covid earlier; and they should have reacted to the mortgage market earlier. If even markets there can exhibit seemingly late wild swings, how is the economic impact of AI - which isn't even an asset market! - forced to be earlier and smoother than that, as a result of wise investing?

There's just such a vast gap between hopeful reasoning about how various agents and actors should all do the things the speaker finds very reasonable, thereby yielding smooth behavior of the Earth, versus reality.

9. September 21 conversation

9.1. AlphaZero, innovation vs. industry, the Wright Flyer, and the Manhattan Project

[Christiano][10:18]

(For benefit of readers, the market is down 1.5% from friday close -> tuesday open, after having drifted down 2.5% over the preceding two weeks. Draw whatever lesson you want from that.)

Also for the benefit of readers, here is the SSDF list of computer chess performance by year. I think the last datapoint is with the first version of neural net evaluations, though I think to see the real impact we want to add one more datapoint after the neural nets are refined (which is why I say I also don't know what the impact is).

No one keeps similarly detailed records for Go, and there is much less development effort, but the rate of progress was about 1 stone per year from 1980 until 2015 (see https://intelligence.org/files/AlgorithmicProgress.pdf, written way before AGZ). In 2012 go bots reached about 4-5 amateur dan. By DeepMind's reckoning here (https://www.nature.com/articles/nature16961, figure 4), Fan AlphaGo was about 4-5 stones stronger 4 years later, with 1 stone explained by greater runtime compute. They could then get further progress to be superhuman with even more compute, radically more than was used for previous projects and with pretty predictable scaling. That level is within 1-2 stones of the best humans (professional dan are greatly compressed relative to amateur dan), so getting to "beats best human" is really just not a big discontinuity, and the fact that DeepMind marketing can find an expert who makes a really bad forecast shouldn't be having such a huge impact on your view.

This understates the size of the jump from AlphaGo, because that was basically just the first version of the system that was superhuman and it was still progressing very rapidly as it moved from prototype to slightly-better-prototype, which is why you saw such a close game. (Though note that the AlphaGo prototype involved much more engineering effort than any previous attempt to play Go, so it's not surprising that a "prototype" was the thing to win.)

So to look at actual progress after the dust settles and really measure how crazy this was, it seems much better to look at AlphaZero which continued to improve further, see (https://sci-hub.se/https://www.nature.com/articles/nature24270, figure 6b). Their best system got another ~8 stones of progress over AlphaGo. Now we are like 7-10 stones ahead of trend, of which I think about 3 stones are explained by compute. Maybe call it 6 years ahead of schedule?
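A minimal sketch of the back-of-envelope conversion in the message above, assuming the historical trend of about 1 stone per year holds and taking the 7-10 stone lead and the ~3 compute-explained stones as given inputs. The helper name `years_ahead_of_trend` is hypothetical and only illustrates the arithmetic; it is not anyone's actual method.

```python
# Hypothetical helper illustrating the stones-to-years estimate.
# Assumption: the pre-2015 trend of ~1 stone of progress per year.

STONES_PER_YEAR = 1.0  # historical Go trend cited in the conversation


def years_ahead_of_trend(stones_ahead: float, stones_from_compute: float,
                         stones_per_year: float = STONES_PER_YEAR) -> float:
    """Convert a lead over trend (in stones) into years, after removing
    the part attributed to extra compute rather than new ideas."""
    return (stones_ahead - stones_from_compute) / stones_per_year


# The conversation gives 7-10 stones ahead, ~3 of them from compute.
low = years_ahead_of_trend(7, 3)
high = years_ahead_of_trend(10, 3)
print(f"{low:.0f} to {high:.0f} years ahead of schedule")  # prints "4 to 7 years ahead of schedule"
```

The range of 4 to 7 years has a midpoint of 5.5, consistent with "maybe call it 6 years."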

So I do think this is pretty impressive, they were slightly ahead of schedule for beating the best humans but they did it with a huge margin of error. I think the margin is likely overstated a bit by their elo evaluation methodology, but I'd still grant like 5 years ahead of the nearest competition.

I'd be interested in input from anyone who knows more about the actual state of play (+ is allowed to talk about it) and could correct errors.

Mostly that whole thread is just clearing up my understanding of the empirical situation, probably we still have deep disagreements about what that says about the world, just as e.g. we read very different lessons from market movements.

Probably we should only be talking about either ML or about historical technologies with meaningful economic impacts. In my view your picture is just radically unlike how almost any technologies have been developed over the last few hundred years. So probably step 1 before having bets is to reconcile our views about historical technologies, and then maybe as a result of that we could actually have a bet about future technology. Or we could try to shore up the GDP bet.

Like, it feels to me like I'm saying: AI will be like early computers, or modern semiconductors, or airplanes, or rockets, or cars, or trains, or factories, or solar panels, or genome sequencing, or basically anything else. And you are saying: AI will be like nuclear weapons.

I think from your perspective it's more like: AI will be like all the historical technologies, and that means there will be a hard takeoff. The only way you get a soft takeoff forecast is by choosing a really weird thing to extrapolate from historical technologies.

So we're both just forecasting that AI will look kind of like other stuff in the near future, and then both taking what we see as the natural endpoint of that process.

To me it feels like the nuclear weapons case is the outer limit of what looks plausible, where someone is able to spend $100B for a chance at a decisive strategic advantage.

[Yudkowsky][11:11]

Go-wise, I'm a little concerned about that "stone" metric - what would the chess graph look like if it was measuring pawn handicaps? Are the professional dans compressed in Elo, not just "stone handicaps", relative to the amateur dans? And I'm also hella surprised by the claim, which I haven't yet looked at, that AlphaZero got 8 stones of progress over AlphaGo - I would not have been shocked if you told me that God's Algorithm couldn't beat Lee Se-dol with a 9-stone handicap.

Like, the obvious metric is Elo, so if you go back and refigure in "stone handicaps", an obvious concern is that somebody was able to look into the past and fiddle their hindsight until they found a hindsightful metric that made things look predictable again. My sense of Go said that 5-dan amateur to 9-dan pro was a HELL of a leap for 4 years, and I also have some doubt about the original 5-dan-amateur claims and whether those required relatively narrow terms of testing (eg timed matches or something).

One basic point seems to be whether AGI is more like an innovation or like a performance metric over an entire large industry.

Another point seems to be whether the behavior of the world is usually like that, in some sense, or if it's just that people who like smooth graphs can go find some industries that have smooth graphs for particular performance metrics that happen to be smooth.

Among the smoothest metrics I know that seems like a convergent rather than handpicked thing to cite, is world GDP, which is the sum of more little things than almost anything else, and whose underlying process is full of multiple stages of converging-product-line bottlenecks that make it hard to jump the entire GDP significantly even when you jump one component of a production cycle... which, from my standpoint, is a major reason to expect AI to not hit world GDP all that hard until AGI passes the critical threshold of bypassing it entirely. Having 95% of the tech to invent a self-replicating organism (eg artificial bacterium) does not get you 95%, 50%, or even 10% of the impact.

(it's not so much the 2% reaction of world markets to Evergrande that I was singling out earlier, 2% is noise-ish, but the wider swings in the vicinity of Evergrande particularly)

[Christiano][12:41]

Yeah, I'm just using "stone" to mean "elo difference that is equal to 1 stone at amateur dan / low kyu," you can see DeepMind's conversion (which I also don't totally believe) in figure 4 here (https://sci-hub.se/https://www.nature.com/articles/nature16961). Stones are closer to constant elo than constant handicap, it's just a convention to name them that way.

[Yudkowsky][12:42]

k then

[Christiano][12:47]

But my description above still kind of understates the gap I think. They call 230 elo 1 stone, and I think prior rate of progress is more like 200 elo/year. They put AlphaZero about 3200 elo above the 2012 system, so that's like 16 years ahead = 11 years ahead of schedule. At least 2 years are from test-time hardware, and self-play systematically overestimates elo differences at the upper end of that. But 5 years ahead is still too low and that sounds more like 7-9 years ahead. ETA: and my actual best guess all things considered is probably 10 years ahead, which I agree is just a lot bigger than 5. And I also understated how much of the gap was getting up to Lee Sedol.
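The Elo arithmetic in the message above can be reproduced in a few lines. This is a sketch under the numbers quoted in the conversation (230 Elo per stone, about 200 Elo of progress per year, AlphaZero about 3200 Elo above the 2012 bots); the 2012-to-2017 span is an assumption inferred from "16 years ahead = 11 years ahead of schedule," and `schedule_gap` is a hypothetical helper name.

```python
# Back-of-envelope Elo arithmetic; inputs are the conversation's figures,
# not independently verified values.

ELO_PER_STONE = 230  # DeepMind's conversion, as quoted
ELO_PER_YEAR = 200   # assumed prior rate of progress


def schedule_gap(elo_gain: float, years_elapsed: float,
                 elo_per_year: float = ELO_PER_YEAR) -> float:
    """Years ahead of trend: the years of progress the gain represents,
    minus the years that actually elapsed."""
    years_of_progress = elo_gain / elo_per_year
    return years_of_progress - years_elapsed


gain = 3200
elapsed = 2017 - 2012  # assumption: 2012 bots vs. the 2017 system

print(f"{gain / ELO_PER_STONE:.1f} stones")            # prints "13.9 stones"
print(f"{gain / ELO_PER_YEAR:.0f} years of progress")  # prints "16 years of progress"
print(f"{schedule_gap(gain, elapsed):.0f} years ahead of schedule")  # prints "11 years ahead of schedule"
```

Subtracting the at least 2 years attributed to test-time hardware leaves at most 9, the top of the 7-9 year range; the further discount for self-play inflating Elo at the high end is not modeled here.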

The Go graph I posted wasn't made with hindsight; that was from 2014.

I mean, I'm fine with you saying that people who like smooth graphs are cherry-picking evidence, but do you want to give any example other than nuclear weapons of technologies with the kind of discontinuous impact you are describing?

I do agree that the difference in our views is like "innovation" vs "industry." And a big part of my position is that innovation-like things just don't usually have big impacts for kind of obvious reasons, they start small and then become more industry-like as they scale up. And current deep learning seems like an absolutely stereotypical industry that is scaling up rapidly in an increasingly predictable way.

As far as I can tell the examples we know of things changing continuously aren't handpicked, we've been looking at all the examples we can find, and no one is proposing or even able to find almost anything that looks like you are imagining AI will look.

Like, we've seen deep learning innovations in the form of prototypes (most of all AlexNet), and they were cool and represented giant fast changes in people's views. And more recently we are seeing bigger much-less-surprising changes that are still helping a lot in raising the tens of billions of dollars that people are raising. And the innovations we are seeing are increasingly things that trade off against modest improvements in model size, there are fewer and fewer big surprises, just like you'd predict. It's clearer and clearer to more and more people what the roadmap is---the roadmap is not yet quite as clear as in semiconductors, but as far as I can tell that's just because the field is still smaller.

[Yudkowsky][13:23]

I sure wasn't imagining there was a roadmap to AGI! Do you perchance have one which says that AGI is 30 years out?

From my perspective, you could as easily point to the Wright Flyer as an atomic bomb. Perhaps this reflects again the "innovation vs industry" difference, where I think in terms of building a thing that goes foom thereby bypassing our small cute world GDP, and you think in terms of industries that affect world GDP in an invariant way throughout their lifetimes.

Would you perhaps care to write off the atomic bomb too? It arguably didn't change the outcome of World War II or do much that conventional weapons in great quantity couldn't; Japan was bluffed into believing the US could drop a nuclear bomb every week, rather than the US actually having that many nuclear bombs or them actually being used to deliver a historically outsized impact on Japan. From the industry-centric perspective, there is surely some graph you can draw which makes nuclear weapons also look like business as usual, especially if you go by destruction per unit of whole-industry non-marginal expense, rather than destruction per bomb.

[Christiano][13:27]

seems like you have to make the Wright Flyer much better before it's important, and that it becomes more like an industry as that happens, and that this is intimately related to why so few people were working on it

I think the atomic bomb is further on the spectrum than almost anything, but it still doesn't feel nearly as far as what you are expecting out of AI

the Manhattan Project took years and tens of billions; if you wait an additional few years and spend an additional few tens of billions then it would be a significant improvement in destruction or deterrence per $ (but not totally insane)

I do think it's extremely non-coincidental that the atomic bomb was developed in a country that was practically outspending the whole rest of the world in "killing people technology"

and took a large fraction of that country's killing-people resources

eh, that's a bit unfair, the US was only like 35% of global spending on munitions

and the Manhattan Project itself was only a couple percent of total munitions spending

[Yudkowsky][13:32]

a lot of why I expect AGI to be a disaster is that I am straight-up expecting AGI to be different. if it was just like coal or just like nuclear weapons or just like viral biology then I would not be way more worried about AGI than I am worried about those other things.

[Christiano][13:33]

that definitely sounds right

but it doesn't seem like you have any short-term predictions about AI being different

9.2. AI alignment vs. biosafety, and measuring progress

[Yudkowsky][13:33]

are you more worried about AI than about bioengineering?

[Christiano][13:33]

I'm more worried about AI because (i) alignment is a thing, unrelated to takeoff speed, (ii) AI is a (ETA: likely to be) huge deal and bioengineering is probably a relatively small deal

(in the sense of e.g. how much $ people spend, or how much $ it makes, or whatever other metric of size you want to use)

[Yudkowsky][13:35]

what's the disanalogy to (i) biosafety is a thing, unrelated to the speed of bioengineering? why expect AI to be a huge deal and bioengineering to be a small deal? is it just that investing in AI is scaling faster than investment in bioengineering?

[Christiano][13:35]

no, alignment is a really easy x-risk story, bioengineering x-risk seems extraordinarily hard

It's really easy to mess with the future by creating new competitors with different goals, if you want to mess with the future by totally wiping out life you have to really try at it and there's a million ways it can fail. The bioengineering route seems like it basically requires deliberate and reasonably competent malice, whereas alignment failure seems like it can only be averted with deliberate effort, etc.

I'm mostly asking about historical technologies to try to clarify expectations, I'm pretty happy if the outcome is: you think AGI is predictably different from previous technologies in ways we haven't seen yet

though I really wish that would translate into some before-end-of-days prediction about a way that AGI will eventually look different

[Yudkowsky][13:38]

in my ontology a whole lot of threat would trace back to "AI hits harder, faster, gets too strong to be adjusted"; tricks with proteins just don't have the raw power of intelligence

[Christiano][13:39]

in my view it's nearly totally orthogonal to takeoff speed, though fast takeoffs are a big reason that preparation in advance is more useful

(but not related to the basic reason that alignment is unprecedentedly scary)

It feels to me like you are saying that AI-improving-AI will move very quickly from "way slower than humans" to "FOOM in <1 year," but that looks very surprising to me.

However I do agree that if AI-improving-AI was like AlphaZero, then it would happen extremely fast.

It seems to me like it's pretty rare to have these big jumps, and it gets much much rarer as technologies become more important and are more industry-like rather than innovation-like (and people care about them a lot rather than random individuals working on them, etc.). And I can't tell whether you are saying something more like "nah big jumps happen all the time in places that are structurally analogous to the key takeoff jump, even if the effects are blunted by slow adoption and regulatory bottlenecks and so on" or if you are saying "AGI is atypical in how jumpy it will be"

[Yudkowsky][13:44]