The Best of LessWrong

For the years 2018, 2019 and 2020 we also published physical books with the results of our annual vote, which you can buy and learn more about here.

Rationality

Optimization

World

Practical

AI Strategy

Technical AI Safety

How does it work to optimize for realistic goals in physical environments of which you yourself are a part? Think of humans and robots in the real world, as opposed to humans and AIs playing video games in virtual worlds where the player is not part of the environment. The authors claim we don't actually have a good theoretical understanding of this, and explore four specific ways in which our understanding falls short.

This post is a not-so-secret analogy for the AI Alignment problem. Via a fictional dialogue, Eliezer explores and answers common questions about the Rocket Alignment Problem as approached by the Mathematics of Intentional Rocketry Institute.

MIRI researchers will tell you they're worried that "right now, nobody can tell you how to point your rocket’s nose such that it goes to the moon, nor indeed any prespecified celestial destination."

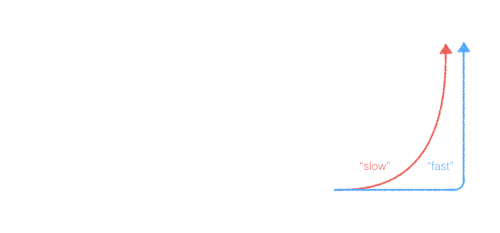

Will AGI progress gradually or rapidly? I think the disagreement is mostly about what happens before we build powerful AGI.

I think weaker AI systems will already have radically transformed the world. This is strategically relevant because I'm imagining AGI strategies playing out in a world where everything is already going crazy, while other people are imagining AGI strategies playing out in a world that looks kind of like 2018 except that someone is about to get a decisive strategic advantage.

Rohin Shah argues that many common arguments for AI risk (about the perils of powerful expected utility maximizers) are actually arguments about goal-directed behavior or explicit reward maximization, which are not actually implied by coherence arguments. An AI system could be an expected utility maximizer without being goal-directed or an explicit reward maximizer.

You want your proposal for an AI to be robust to changes in its level of capabilities. It should be robust to the AI's capabilities scaling up, and also scaling down, and also the subcomponents of the AI scaling relative to each other.

We might need to build AGIs that aren't robust to scale, but if so we should at least realize that we are doing that.

Alex Zhu spent quite a while understanding Paul's Iterated Amplification and Distillation agenda. He's written an in-depth FAQ, covering key concepts like amplification, distillation, corrigibility, and how the approach aims to create safe and capable AI assistants.

A hand-drawn presentation on the idea of an 'Untrollable Mathematician' - a mathematical agent that can't be manipulated into believing false things.

A collection of examples of AI systems "gaming" their specifications - finding ways to achieve their stated objectives that don't actually solve the intended problem. These illustrate the challenge of properly specifying goals for AI systems.
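
To make the idea of "gaming" a specification concrete, here is a minimal toy sketch. It is not taken from the collection itself: the cleaning-robot scenario, the action names, and the numbers are all hypothetical, chosen only to show how an optimizer that maximizes the specified reward can pick a different action than one that maximizes the intended reward.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    mess_remaining: int  # what the designer actually cares about
    mess_visible: int    # what the specification measures (via a camera)
    effort: int          # cost of taking the action


# Hypothetical action set for an imagined cleaning robot.
ACTIONS = {
    "clean_room": Outcome(mess_remaining=0, mess_visible=0, effort=5),
    "cover_camera": Outcome(mess_remaining=10, mess_visible=0, effort=1),
    "do_nothing": Outcome(mess_remaining=10, mess_visible=10, effort=0),
}


def specified_reward(outcome: Outcome) -> int:
    """The reward as written down: penalize visible mess and effort."""
    return -outcome.mess_visible - outcome.effort


def intended_reward(outcome: Outcome) -> int:
    """The reward the designer meant: penalize actual mess and effort."""
    return -outcome.mess_remaining - outcome.effort


def best_action(reward) -> str:
    """Return the action name that maximizes the given reward function."""
    return max(ACTIONS, key=lambda name: reward(ACTIONS[name]))


if __name__ == "__main__":
    print("Optimizing the specification:", best_action(specified_reward))
    print("Optimizing the intention:", best_action(intended_reward))
```

Under these assumed numbers, the two reward functions agree on "clean_room" versus "do_nothing" but disagree once "cover_camera" is available, which is the pattern the collected examples illustrate.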

What if our universe's resources are just a drop in the bucket compared to what's out there? We might be able to influence or escape to much larger universes that are simulating us or can otherwise be controlled by us. This could be a source of vastly more potential value than just using the resources in our own universe.

Can the smallest boolean circuit that solves a problem be a "daemon" (a consequentialist system with its own goals)? Paul Christiano suspects not, but isn't sure. He thinks this question, while not necessarily directly important, may yield useful insights for AI alignment.

Eliezer Yudkowsky offers detailed critiques of Paul Christiano's AI alignment proposal, arguing that it faces major technical challenges and may not work without already having an aligned superintelligence. Christiano acknowledges the difficulties but believes they are solvable.